Try an interactive version of this dialog: Sign up at solve.it.com, click Upload, and pass this URL.

Context

I'm Dan — trained in physical oceanography, experienced in state-space modeling, finite difference methods, and data science, but new to investment theory. I'm working through Chris Bemis's Portfolio Statistics and Optimization (August 2023) to build understanding.

Book structure: Chapters are split into separate dialogs (ch_1.ipynb, ch_2.ipynb, etc.). Also available:

./spoiler.md— short digest of each chapter's point and how they form the book's arc./bib.ipynb— bibliography

Arc: The book builds from statistical foundations (distributions, covariance) through regression and optimization theory to Mean-Variance Optimization and coherent risk measures. Chris emphasizes using CAPM and Fama-French as interpretive tools rather than prescriptive models, given non-stationarity and fat tails in real markets.

Ch 1 covered: EMH, systemic vs idiosyncratic risk, CAPM (β as market sensitivity, α as excess return), Fama-French (adding size and value factors), and MVO setup. Key theme: models are useful for interpretation and comparison, but their stationarity assumptions are violated in practice.

Ch 2 covered: CDFs, PDFs, moments (mean, variance, skew, kurtosis), percentiles. Normal, log-normal, and Student t distributions — with emphasis on how real equity returns exhibit fat tails and asymmetry that the normal badly misses (837,128× underestimate of 6σ events!). Student t with ν=5 is a pragmatic alternative. Multivariate extensions (joint distributions, covariance matrices, independence). Weak Law of Large Numbers, Central Limit Theorem, and unbiased estimators.

Key Ch 2 insight: Two toy models illustrate diversification. Model 1 (iid returns) suggests you can diversify away all risk. Model 2 (returns = β×market + idiosyncratic) shows you can only diversify away idiosyncratic risk — systematic risk remains. This motivates the passive investing view ("just own the market") and sets up the question: what justifies active management?

Ch 3 covered: Covariance as an inner product (giving Cauchy-Schwarz bounds on correlation). CAPM revisited: β = ρ(σ_r/σ_m), derived via inner product machinery. Key insight: correlation measures linear dependence only — a perfect quadratic relationship can show ρ ≈ 0 if positive and negative branches cancel.

Copulas: Sklar's theorem lets you separate any joint distribution into (1) a copula (pure dependence structure in [0,1]ᴺ space) and (2) arbitrary marginals. The probability integral transform (F(X) ~ U[0,1]) is the bridge. Gaussian copula infamously underestimated tail dependence in 2008 CDO crisis — Student-t copulas better capture "extremes cluster" behavior.

Eigendecomposition of Σ: Eigenportfolios (eᵢ'X) are uncorrelated and variance-ranked (Var = λᵢ). This is just PCA on returns. Empirically: one dominant eigenvalue (the market), a few sector factors, then a bulk of near-zero eigenvalues that are mostly estimation noise (random matrix theory). Ill-conditioning (large λ_max/λ_min) causes optimization to blow up on those noisy directions.

Key Ch 3 insight: The largest eigenportfolio is a proxy for "the market" — lending empirical support to simple CAPM. Correlations are unstable (regime-dependent) and spike in crises, which copula models must account for or fail catastrophically.

My approach: I'm comfortable with the calculus and linear algebra; I'm here for the finance intuition and the book's arc toward coherent risk measures. I skipped the Ch 2 exercises (PDF integration drills) since later chapters use distributions as tools rather than deriving them.

Please read formatting_instructions.md in this directory and apply it throughout this dialog.

view(path=formatting_instructions.md)

{

"id": "toolu_01Pm4FLbhnbEVKEo8QHJtP28",

"call": {

"function": "view",

"arguments": {

"path": "formatting_instructions.md"

}

},

"result": "\"# Formatting Instructions for Chapter Dialogs\\n\\nWhen preparing dialogs for future chapters, apply these transformations:\\n\\n1. **Dollar signs in LaTeX**: Replace `\\\\$` (backslash-dollar) with `$` \u2014 the escape doesn't render correctly, possibly due to KaTeX interference.\\n\\n2. **Figure paths**: Replace local paths like `fig/1.1.png` with the GitHub raw URL:\\n ```\\n https://raw.githubusercontent.com/dangoldner/bemis/main/fig/1.1.png\\n ```\\n The local path isn't accessible in the published version of the dialog.\""

}

Got it! I'll apply these formatting rules throughout this dialog:

- Dollar signs in LaTeX: Use

$instead of\$ - Figure paths: Use

https://raw.githubusercontent.com/dangoldner/bemis/main/fig/...instead of localfig/...paths

Ready to continue with Chapter 4 whenever you are!

Sorry, I mean search every message in this dialog and make the relevant transformations described in those rules.

find_msgs(re_pattern=\\\\\\$|fig/, as_xml=True)

{

"id": "toolu_01NMTgECAEBBXuSg7Gc7ba65",

"call": {

"function": "find_msgs",

"arguments": {

"re_pattern": "\\\\\\\\\\\\$|fig/",

"as_xml": "True"

}

},

"result": "'<msgs><prompt id=\"_d9e08c3d\"><source>Please read formatting_instructions.md in this directory and apply it throughout this dialog.<out><p class=\"leading-tight\">\ud83e\udde0\ud83e\udde0\ud83e\udde0\ud83e\udde0\ud83e\udde0\ud83e\udde0\ud83e\udde0\ud83e\udde0</p>\\n<details class=\"tool-usage-details\">\\n<summary>view(path=formatting_instructions.md)</summary>\\n<div class=\"relative\"><div><div data-code=\"{\\n "id": "toolu_01Pm4FLbhnbEVKEo8QHJtP28",\\n "call": {\\n "function": "view",\\n "arguments": {\\n "path": "formatting_instructions.md"\\n }\\n },\\n "result": "\\\\"# Formatt<TRUNCATED>"

}

Found one match. Let me view that note with line numbers to make the figure path replacements:

read_msgid(id=_6a8b34c4, nums=True)

{

"id": "toolu_016wkRpKeUM2iBTx7fNdDBn5",

"call": {

"function": "read_msgid",

"arguments": {

"id": "_6a8b34c4",

"nums": "True"

}

},

"result": "{'id': '_6a8b34c4',\n 'time_run': '',\n 'is_exported': 0,\n 'skipped': 0,\n 'hidden': False,\n 'bookmark': {},\n 'i_collapsed': 0,\n 'o_collapsed': 0,\n 'heading_collapsed': False,\n 'i_clamp': False,\n 'o_clamp': False,\n 'pinned': 0,\n 'oob': {},\n 'order': 12,\n 'run': False,\n 'scroll': False,\n 'content': \" 1 \u2502 #### 4.3.3 Variable Selection\\n 2 \u2502 \\n 3 \u2502 In every case in OLS, a model with more input variables will fit better than one with less. However, additional regressors in the design matrix, $X \\\\in \\\\mathbb{R}^{N \\\\times p}$ , increases the chances of *collinearity* among the columns of<TRUNCATED>"

}

I found two figure paths to fix (lines 123 and 128). Let me apply the transformations:

msg_strs_replace(id=_6a8b34c4, old_strs=['', ''], new_strs=['

{

"id": "toolu_013sx544xuX7Y3paJXfRos8G",

"call": {

"function": "msg_strs_replace",

"arguments": {

"id": "_6a8b34c4",

"old_strs": "['', '']",

"new_strs": "['', '']"

}

},

"result": "{'success': 'Successfully replaced all the strings in message _6a8b34c4'}"

}

Done! I found and fixed 2 figure paths in message _6a8b34c4 (the Variable Selection section):

fig/4.1.png→ GitHub raw URLfig/4.2.png→ GitHub raw URL

No \$ patterns were found that needed conversion to $.

Ordinary Least Squares

Our work in Ordinary Least Squares (OLS) begins with the Capital Asset Pricing Model (CAPM). Fitting the parameters of the model to historical data is reduced to a calculus problem and an associated assumption about so-called idiosyncratic error terms. After establishing the methodology for calculating the CAPM parameters, we look at the implications of the model, giving testable hypotheses which we examine with market data. We find, as in Fama-French [10], for example, that these hypotheses are not borne out in the market, however.

The approach used to fit a CAPM model is justified through the establishment of the method of maximum likelihood, which, more generally justifies our approach in OLS. Our treatment of the subject is principally mathematical, relying in large part on the properties of projection operators.

We discuss and derive from three main assumptions (here identified as the Gauss-Markov assumptions and a sometimes-accompanying distributional assumption) the distributional properties of estimators of regression parameters. These distributions allow us to determine confidence intervals for our estimators as well as establish so-called null hypothesis tests [18, 6]. Our primary test statistics will be the t-test and F-test, which we build from first principles. The latter test statistic lends itself to model selection criteria, and we build upon this in our presentation of forward selection, backward elimination, and stepwise regression techniques.

We conclude with an introductory treatment on a generalization of ordinary least squares – aptly named Generalized Least Squares (GLS).

4.1 CAPM and Least Squares

The Capital Asset Pricing Model (CAPM) [22, 31] relates the time series of a given stock’s returns, \(\{r_t\}\) , to the returns of the contemporaneous market, \(\{m_t\}\) , as

\[r_t - r_f = \beta(m_t - r_f) + \epsilon_t, \tag{4.1}\]

where \(r_f\) is the risk-free rate, the rate at which one may borrow with a probability of default on the bond being zero. Treasury bills are the obvious and most likely candidate here, the dynamics of the US debt notwithstanding.

We will not at present make any assumptions about the distribution of \(\{\epsilon_t\}\) . However, we will assume that the \(\epsilon_t\) 's are iid, and share the same distribution as some \(\epsilon\) . Similarly, we will assume that the market returns, \(m_t\) are iid and distributed as some \(m\). Finally, we assume as before that

\[\begin{aligned} \text{Cov}(m, \epsilon) &= 0 \\ \text{Var}(\epsilon) &= \sigma_\epsilon^2 \\ \text{Var}(m) &= \sigma_m^2. \end{aligned}\]

We consider the case where we have observations \(\{r_t\}_{t=1}^T\) and \(\{m_t\}_{t=1}^T\) , drawn from \(r\) and \(m\), respectively, and seek an estimate, \(\hat{\beta}\) of \(\beta\). We have already seen in (3.11) that

\[\beta = \frac{\rho\sigma_r}{\sigma_m} = \rho \frac{\sigma_r}{\sigma_m},\]

and we made mention in (3.14) that a method of moments estimator for \(\beta\) is

\[\hat{\beta} = \hat{\rho} \frac{\hat{\sigma}_r}{\hat{\sigma}_m}.\]

Here we show that this estimator coincides with what is called the least squares estimate for reasons that will become clear momentarily.

Let \(f\) be a function of the as-yet unknown \(\beta\) given by

\[f(\beta) = \sum_{t=1}^N (r_t - r_f - \beta(m_t - r_f))^2. \quad (4.2)\]

Mathematically, finding the value which minimizes \(f(\cdot)\) is an attractive and perhaps natural proposition. We know from calculus that \(f(\cdot)\) is parabolic and hence has a single minimizer, which may be found where the derivative \(f'(\cdot)\) is zero. We will now show that this minimizer is the method of moments estimator of \(\beta\) , why this link to least squares minimization exists, and that this minimizer is an unbiased estimator of \(\beta\) .

4.1.1 Minimizing \(f(\cdot)\)

The minimizer of \(f(\cdot)\) above is exactly the method of moments estimator of \(\beta\) . The result follows from calculus and the assumption that the sample covariance between \(\epsilon\) and \(m\) ,

\[\hat{\sigma}_{\epsilon m} = \frac{1}{T-1} \sum_{t=1}^T (m_t - \bar{m})(\epsilon_t - \bar{\epsilon})\]

satisfies \(\hat{\sigma}_{\epsilon m} = 0\) , with \(\bar{m}\) and \(\bar{\epsilon}\) the estimators for the mean we have seen previously. For notation, we denote

\[\begin{aligned} x_t &= m_t - r_f \\ y_t &= r_t - r_f. \end{aligned}\]

Notice that

\[\bar{x} = \frac{1}{T} \sum_{t=1}^{T} x_t = \bar{m} - r_f\] \[\bar{y} = \frac{1}{T} \sum_{t=1}^{T} y_t = \bar{r} - r_f,\]

and since variance and covariance are both shift independent,

\[\hat{\sigma}_x^2 = \hat{\sigma}_m^2\] \[\hat{\sigma}_y^2 = \hat{\sigma}_r^2\] \[\hat{\sigma}_{xy}^2 = \hat{\sigma}_{mr}^2\] \[\hat{\sigma}_{xe}^2 = \hat{\sigma}_{me}^2,\]

where the estimators of variance are as in (2.43).

In these new variables, \(f(\cdot)\) becomes

\[f(\beta) = \sum_{t=1}^{T} (y_t - \beta x_t)^2.\]

The minimum of \(f(\cdot)\) occurs when the derivative is set to zero since the function is quadratic in \(\beta\) , hence we solve

\[f'(\beta) = 2 \sum_{t=1}^{T} (y_t - \beta x_t) \cdot x_t = 0\] \[= \sum_{t=1}^{T} \epsilon_t \cdot x_t = 0. \quad (4.3)\]

Notice that for any sequences of random variables \(\{u_t\}\) and \(\{v_t\}\) ,

\[\sum_{t=1}^{T} u_t v_t = (T-1) \hat{\sigma}_{uv} + T \bar{u} \bar{v},\]

and

\[\sum_{t=1}^{T} u_t^2 = (T-1) \hat{\sigma}_u^2 + T \bar{u}^2.\]

These relationships are left to the reader.

Continuing, we have

\[\sum_{t=1}^{T} (y_t - \beta x_t) \cdot x_t = \sum_{t=1}^{T} y_t x_t - \beta \sum_{t=1}^{T} x_t^2\] \[= (T-1) \hat{\sigma}_{xy} + T \bar{x} \bar{y} - \beta \left( (T-1) \hat{\sigma}_x^2 + T \bar{x}^2 \right)\] \[= (T-1) \left( \hat{\sigma}_{xy} - \beta \hat{\sigma}_x^2 \right) + T \left( \bar{x} \bar{y} - \beta \bar{x}^2 \right).\]

Our assumption that \(\hat{\sigma}_{m\epsilon}=0\) , or equivalently, that \(\hat{\sigma}_{x\epsilon}=0\) gives

\[\sum_{t=1}^T x_t \epsilon_t - T \bar{x} \bar{\epsilon} = 0.\]

But since we know from (4.3) that \(\sum_{t=1}^T x_t \epsilon_t = 0\) , this gives that \(\bar{x}\bar{\epsilon}=0\) as well. Returning to our formulation above, we see then that

\[\begin{aligned}(T-1)(\hat{\sigma}_{xy} - \beta \hat{\sigma}_x^2) + T(\bar{x}\bar{y} - \beta \bar{x}^2) &= (T-1)(\hat{\sigma}_{xy} - \beta \hat{\sigma}_x^2) + T\bar{x}(\bar{y} - \beta \bar{x}) \\&= (T-1)(\hat{\sigma}_{xy} - \beta \hat{\sigma}_x^2) + T\bar{x}\bar{\epsilon} \\&= (T-1)(\hat{\sigma}_{xy} - \beta \hat{\sigma}_x^2).\end{aligned}\]

Solving \(f'(\beta)=0\) therefore amounts to solving

\[\hat{\sigma}_{xy} - \beta \hat{\sigma}_x^2 = 0,\]

which yields

\[\beta = \frac{\hat{\sigma}_{xy}}{\hat{\sigma}_x^2}\]

as expected.

Well, yeah - if $\epsilon $ is correlated to the market then what's the point of the model?

Exactly right. The whole point of CAPM is to decompose returns into:

- Systematic: \(\beta(m - r_f)\) — the part explained by market exposure

- Idiosyncratic: \(\epsilon\) — everything else (company-specific news, etc.)

If \(\epsilon\) were correlated with \(m\), that correlation would by definition belong in the systematic term.

It is critical to point out that we used an assumption that the error terms \(\{\epsilon_t\}\) had zero correlation with the market returns \(\{m_t\}\) . Without this assumption, the estimate of \(\beta\) from this least squares approach keeps the terms eliminated in the final parts of our derivation; namely, we arrive at a solution

\[\beta = \frac{(T-1)\hat{\sigma}_{xy} + T\bar{x}\bar{y}}{(T-1)\hat{\sigma}_x^2 + T\bar{x}^2}.\]

Implicitly (and maybe naturally), we minimized the function \(f(\cdot)\) with the constraint of idiosyncrasy of the error terms.

Whoa - decomposing the idiosyncratic term into ... oh, \(\alpha\) is constant, so we're pulling out the time-mean of the previously-defined epsilon?

Exactly. In the original model, \(\epsilon\) could have a non-zero mean. Now we're writing:

\[\epsilon_{\text{old}} = \alpha + \epsilon_{\text{new}}\]

where \(\alpha = \mathbb{E}[\epsilon_{\text{old}}]\) and \(\epsilon_{\text{new}}\) is zero-mean.

This \(\alpha\) is the famous "alpha" — excess return beyond what the market exposure (\(\beta\)) predicts. If \(\alpha > 0\), the asset is outperforming its CAPM benchmark. That's what active managers are chasing.

Actually Chris hasn't said yet that \(\bar{\epsilon}=0\), but I don't see the point of naming \(\alpha\) if it isn't.

Right — without imposing \(\mathbb{E}[\epsilon] = 0\), \(\alpha\) isn't uniquely identified. You could shift any constant between them. The convention is that \(\alpha\) absorbs all the constant term, which implicitly forces \(\mathbb{E}[\epsilon] = 0\).

Chris will likely make this explicit when he sets up the estimation problem — it becomes a constraint that pins down the intercept.

and look again at the least squares function, now in two variables,

\[f(\alpha, \beta) = \sum_{t=1}^N (r_t - r_f - (\alpha + \beta(m_t - r_f)))^2, \quad (4.5)\]

we may, by solving for where the gradient, \(\nabla f\) , is zero,

\[\nabla f(\alpha, \beta) = \begin{pmatrix} \frac{\partial f}{\partial \alpha}(\alpha, \beta) \\ \frac{\partial f}{\partial \beta}(\alpha, \beta) \end{pmatrix} = 0.\]

we obtain a system of equations in \(\alpha\) and \(\beta\) . For the former, we see –using the same notation as before– that

\[\frac{\partial f}{\partial \alpha}(\alpha, \beta) = -2 \sum_{t=1}^N (r_t - r_f - (\alpha + \beta(m_t - r_f))).\]

Setting this equal to zero gives

\[\alpha = \bar{r}_t - r_f - \beta(\bar{m}_t - r_f).\]

We leave it to the reader to show that

\[\beta = \frac{\hat{\sigma}_{rm}}{\hat{\sigma}_m^2}.\]

We denote these estimates by \(\hat{\alpha}\) and \(\hat{\beta}\) , respectively.

The intercept term in (4.4) has other implications. In particular for the least squares estimators \((\hat{\alpha}, \hat{\beta})\) , the estimators of \(\{\epsilon_t\}\) , \(\{\hat{\epsilon}_t\}\) , satisfy (without explicit assumption)

\[\bar{\epsilon} = 0\] \[\hat{\sigma}_{m\epsilon} = 0.\]

Showing the first equation is straightforward:

\[\bar{\epsilon} = \frac{1}{T} \sum_{t=1}^{T} (r_t - r_f - \hat{\alpha} - \hat{\beta}(m_t - r_f))\] \[= \frac{1}{T} \sum_{t=1}^{T} (r_t - r_f - \hat{\beta}(m_t - r_f)) - \hat{\alpha}\] \[= \bar{r}_t - r_f - \hat{\beta}(\bar{m}_t - r_f) - \hat{\alpha}\]

which is zero by the construction of \(\hat{\alpha}\) . The second equation follows from this and the partial derivative with respect to \(\beta\) of (4.5) being zero.

The Capital Asset Pricing Model [22, 31] is a normative model: the model in (4.1) omits an intercept term and defines the relationship of the idiosyncratic terms \(\{\epsilon_t\}\) to the market through assumptions. This is not a model derived from observations and relationships in the market, but based on assumptions of how a market ought to perform. We prefer a somewhat nontraditional definition, relating a given security's adjusted returns to the market as in (4.4). The allowance for nonzero \(\alpha\) has serious implications, though, which we address momentarily. The other major defining features of the model are obtained rather than assumed in this formulation, however. This intersection of parsimony and flexibility is hard to pass up.

We can formulate the relationship between random variables implied by (4.4), as

\[r - r_f = \alpha + \beta(m - r_f) + \epsilon.\]

Maintaining assumptions of idiosyncrasy of \(\epsilon\) , which, follow from the least squares estimators, but are not properties of the model as such, and taking expectations on both sides, we get

\[\mathbb{E}(r - r_f) = \alpha + \beta(\mathbb{E}(m) - r_f),\]

or

\[\mathbb{E}(r) = r_f + \alpha + \beta(\mathbb{E}(m) - r_f).\]

This last line is intimately involved with the Efficient Market Hypothesis [28, 2]. In the case that we have a fully explanatory model, a nonzero \(\alpha\) should be precluded in an efficient market. Any alternative allows for securities which give opportunities for risk free excess returns. Consider the case where \(\alpha > 0\) . A portfolio, \(\Pi\) , with returns \(r_\Pi\) , long one unit of the security with returns \(r\) and short \(\beta\) units of the market will have an expected return

\[\mathbb{E}(r_\Pi) = r_f + \alpha.\]

That is, the expected return of the portfolio will exceed the risk free rate while having no linear relationship to the market. Here we have used the fact that the \(\beta\) of a portfolio is the weighted sum of the \(\beta\) s of its positions. We forgo requiring a proof of this until we have established more optimization techniques as the current applications find a direct and amenable analogue there.

We say that such a portfolio provides an opportunity for statistical arbitrage: positive returns are expected in excess of those explained by selected factors. In our current model, the only factor (usually called a risk factor) is the market proper. A particular type of statistical arbitrage often claimed by academics and practitioners alike involves cross-sectional comparisons of fundamental or technical data; e.g., comparing ratios like earnings before interest and taxes over enterprise value at a given time for a set of stocks under consideration (we will have a more formal treatment of these terms in a following chapter). We will call these types of statistical arbitrage opportunities anomalies.

Empirically, the omission of \(\alpha\) in (4.1) is a mistake. Equity anomalies abound. As such, we use (4.4) for the identification of anomalies rather than assume away their existence. Notice, however, that statistical arbitrage and anomalies are defined in relation to some model. The specification of that model, then, is critical in the assessment of perceived opportunities and statistical descriptions of any security or portfolio of securities. A consequence of the above is that one measure of a powerful descriptive model in the equity space is its ability to statistically identify an \(\alpha\) of zero for many of the widely accepted and observed anomalies in the literature (as determined by models such as CAPM or its extensions).

"A consequence of the above is that one measure of a powerful descriptive model in the equity space is its ability to statistically identify an α of zero for many of the widely accepted and observed anomalies in the literature (as determined by models such as CAPM or its extensions)."

Say what?

It's a bit convoluted, but the idea is:

CAPM finds α ≠ 0 for certain stocks (anomalies). A better model (more factors) might explain those same returns with α ≈ 0 — the "anomaly" was just a missing factor.

So the test of a good model is: does it make previously-anomalous alphas disappear? That's exactly what Fama-French does — the size and value factors absorb much of what looked like alpha under plain CAPM.

Oh - a better model explains more variance. But back in Ch 1 or 2 didn't Chris complain that the trend was toward models with more and more factors?

Yes — there's a tension here. More factors → less unexplained variance → alphas shrink. But that opens two problems:

- Overfitting: With enough factors you can "explain" anything, including noise

- Factor zoo: Hundreds of published factors, many likely spurious (data mining)

The challenge is finding factors that are economically meaningful (like size and value arguably are) versus statistically convenient. Fama-French stopped at 3–5 factors for a reason — beyond that, you're probably fitting noise rather than capturing real risk premia.

This connects back to the Variable Selection section coming up — how do you decide which factors earn their place?

I hate to be pedantic (actually, I love being pedantic) but aren't your two problems the same problem?

You're right — they're really two facets of the same thing. Overfitting is when you add too many factors and fit noise. Factor zoo is when the field collectively does the same thing via publication bias (only "significant" factors get published, but run enough tests and you'll find spurious ones).

Same disease, individual vs. collective strains.

Practitioners will often refer to the \(\alpha\) of a given security, portfolio, strategy, or idea. These references necessarily presuppose some underlying model description – oftentimes the assumed model is CAPM. Similarly, \(\beta\) is also used as a descriptive characteristic for securities and portfolios alike. In practice, references to \(\beta\) as such are made to the sensitivity to the market; i.e., CAPM- \(\beta\) .

They should presuppose some underlying model description, but isn't alpha often relative to a vague wave toward "everyone else's models"? At least it seems that way to me hearing terms that filter through to non-professionals, maybe the pros understand it more rigorously.

You're spot on. In rigorous usage, α is always relative to a specific model — CAPM-α, Fama-French-α, etc. But in casual usage (marketing materials, financial media), "alpha" often just means "outperformance" relative to some vaguely-defined benchmark or peer group.

That vagueness is convenient for fund managers — harder to disprove "we generate alpha" if you never specify the model. A skeptic would ask: alpha relative to what factors? And over what period? With what statistical significance?

4.1.2 Interpretations and Implications

The Capital Asset Pricing Model (without intercept but with idiosyncratic assumptions) implies several relationships between returns and volatility, idiosyncratic volatility, correlation to the market, and \(\beta\). The model

\[r - r_f = \beta(m - r_f) + \epsilon\]

gives under the CAPM assumptions that

\[\begin{aligned}\mathbb{E}(r) &= r_f + \beta(\mathbb{E}(m) - r_f) \\ &= r_f + \rho \frac{\sigma_r}{\sigma_m} (\mathbb{E}(m) - r_f) \\ &= r_f + \rho \sigma_r \frac{\mathbb{E}(m) - r_f}{\sigma_m},\end{aligned}\]

where \(\rho = \text{Corr}(r, m)\) . The term \(\frac{\mathbb{E}(m) - r_f}{\sigma_m}\) will make another appearance when we look at the so-called Sharpe Ratio. For now, however, we note that if the expected return of the market exceeds the risk free rate1, \(\mathbb{E}(r)\) , is an increasing function of \(\beta\) , \(\sigma_r\) , and correlation to the market. Where in studying the latter two cases empirically, care must be taken to control for correlation and \(\sigma_r\) , respectively.

1A very minor assumption in theory, almost by fiat, but one that may be violated using estimators over unfortunate time windows

We may also solve for \(\sigma_r\) , obtaining a similar result,

\[\sigma_r = \sigma_m \frac{1}{\rho} \frac{\mathbb{E}(r) - r_f}{\mathbb{E}(m) - r_f}.\]

Here, as the expected return increases, leaving all else fixed, so does the volatility. Again, when testing this relationship empirically, a control for correlation must be in place.

The volatility of \(r\) may be decomposed into systemic and idiosyncratic components as

\[\begin{aligned}\text{Var}(r - r_f) &= \beta(m - r_f) + \epsilon \\ \sigma_r^2 &= \beta^2 \sigma_m^2 + \sigma_\epsilon^2.\end{aligned}\]

It is? I see \(\sigma^2_r=\beta^2\sigma^2_m+\sigma^2_{\epsilon}\), which I read as "idiosyncratic risk is positively and monotonically related to higher risk."

You're right to push back. That equation directly links σ_ε to σ_r (total variance), not to expected returns.

Under pure CAPM, idiosyncratic risk is not compensated — only systematic risk (β) earns a premium. The whole point is that you can diversify away idiosyncratic risk, so the market shouldn't pay you for bearing it.

Chris may be setting up an empirical claim that contradicts CAPM theory (which would be another "anomaly"). But as stated, the logic doesn't follow from that variance decomposition alone.

I didn't think the model admitted any idiosycratic return. Maybe that's what he means? "If you did inadvertantly stumble across some idiosyncratic return, but were following this model, you'd diversify it away"

That's a good reading. Under CAPM, E[ε] = 0, so there's no idiosyncratic return to capture — only idiosyncratic risk to eliminate.

But if you believe α exists (anomalies, skill, whatever), then diversification is a double-edged sword: it reduces idiosyncratic risk but also dilutes the alpha signal. Concentrated portfolios are riskier but necessary to express a view.

That's the active manager's dilemma — you need concentration to beat the market, but concentration exposes you to uncompensated risk.

We will see an elegant proof that ameliorates this concern on the surface—namely that the model gives preferential treatment to the market over any other security or portfolio. This proof, however, will explicitly state that all investors should be holders only of a risk free asset and the market, in varying proportion determined by their risk preferences. We postpone this proof until we have established Merton’s mean variance optimization problem.b

"ameliorates this concern" by reassuring us that there's no idiosyncratic return out there to find in the first place so we need not worry that we're diversifying it away?

Exactly. The proof will show: under CAPM assumptions, the optimal portfolio is the market (plus risk-free). So don't worry about diversifying away alpha — there isn't any to find. Just own the market and adjust your leverage to taste.

It's the theoretical foundation for passive investing. Of course, whether you believe the assumptions is another matter.

My 12-year-old has a small portfolio which she manages this way. I'll be very curious to learn whether that's a bad idea.

For a 12-year-old? Probably excellent. The empirical evidence is that most active managers underperform passive index funds after fees — and she's learning the right habits early.

The case against passive only gets interesting if you believe you have genuine informational or analytical edge. At 12, the lesson that markets are hard to beat is a gift.

Did I miss it? Where was that demonstrated? Every case above ... did I miss it multiple times?

You didn't miss it. The only place it appears is in the first rearrangement:

\[\mathbb{E}(r) = r_f + \rho \sigma_r \frac{\mathbb{E}(m) - r_f}{\sigma_m}\]

That's one case showing E(r) increasing with σ_r (holding ρ fixed). The variance decomposition σ²_r = β²σ²_m + σ²_ε doesn't say anything about expected returns.

"Every case above" seems to be Chris being a bit loose.

Well let's focus on that one because it's fundamental. I get the risk free rate plus \(\beta\) times the market rate. If \(\rho\) is higher, my market-multiplier is higher because I'm tracking the market rate better. For fixed \(\rho\), if \(\sigma_r\) is higher, my market-multiplier is higher because ... why? It's like the 2x leveraged market case in chapter 1. When the market returns are positive, mine are even more positive. When negative, mine are even more negative. Ok that's right.

Exactly. With ρ fixed, higher σ_r means you're taking amplified bets on the market direction. Since E(m) > r_f (the equity risk premium), amplification pays off in expectation — you're getting more exposure to a positive-expected-value game.

The catch is that amplification works both ways, so your risk scales too. The market doesn't give you extra return for free — it's compensation for bearing that amplified volatility.

The implication of the CAPM model, then, and its interpretation within the framework of the Efficient Market Hypothesis, is that apparent excess returns are obtained by taking on risk.

This is not borne out in practice, however. In fact, the equity market prices risk perversely: returns to higher volatility names underperform low volatility names. This feature seems to generalize when one encounters the value, momentum, accrual, and fraud anomalies, for instance. In each of these cases, portfolios of a priori preferable names outperform less desirable names, and they do so with more attractive risk profiles.

So much for theory. But this belies the CAPM assertion that risk pays. It doesn't belie the implication (so far) that passive wins. (At least, not immediately; it might with some further work.)

Right. The low-volatility anomaly says CAPM mispredicts which stocks earn higher returns — but it doesn't immediately tell you how to exploit it profitably after costs, or whether the anomaly persists once known.

However, there's a thread worth pulling: if low-vol stocks systematically outperform, couldn't you just hold a low-vol index passively? That's "smart beta" — passive in execution, but tilted toward known anomalies. It blurs the active/passive line.

Is the S&P500 large-cap or total market? Large cap, I think -- that's what defines it, right? Should I move her to the Russel 3000?

S&P 500 is large-cap — roughly the 500 largest US companies by market cap. Russell 3000 adds mid and small-cap, covering ~98% of US equities.

If you buy the low-vol anomaly, moving to Russell 3000 might actually hurt — you'd be adding the higher-volatility small-caps that empirically underperform on a risk-adjusted basis. Staying S&P 500 keeps her tilted toward the safer names.

In the next example we look at one of the implications of CAPM based on estimators observed in the equity markets over the last twenty years.

Example 4.1.1. Using the same cross sectional dataset we have used previously, spanning the period from 10/31/1997-5/31/2014 (200 months), we look at the average performance of volatility quartiles, recalculated monthly and held for one month. Our universe under consideration looks at the 1,000 largest US stocks by market cap each month – a liquid and tradeable set of securities.

| Volatility | Q1 | Q2 | Q3 | Q4 |

|---|---|---|---|---|

| Average Performance (Ann.) | 10.00% | 10.41% | 10.13% | 9.44% |

| Volatility (Ann.) | 12.07% | 16.20% | 19.58% | 32.28% |

| Performance per Vol Point (Ann.) | 0.83 | 0.64 | 0.52 | 0.29 |

From the table we see little to no evidence of compensation for realized volatility in the cross section. If anything a quick visual inspection of the annualized average performance indicates that the market slightly prefers less volatile stocks to more volatile. The decrease is not monotonic in annual performance, however.

Looking more closely, though, if we consider the annual performance per volatility point – something akin to \(\frac{\mu}{\sigma}\) – we see a clear monotonic decrease as we go from lower to higher volatility names.

We have seen previously in our discussion of CAPM that the model implies a relationship between expected return and a product of correlation to the market and own-volatility. We did not control for correlation in this original analysis. This control can be approximated by performing so-called double sorts.

For each quartile of volatility, we may further subdivide into four quartiles by correlation. If equity returns follow the CAPM model, we would expect that within each volatility quartile, as we increase correlation (going from Quartile 1 to Quartile 4) we should see an increase in average annualized returns. And further, within each correlation quartile, an increase in volatility should correspond to an increase in expected return.

| Volatility\Correlation | Q1 | Q2 | Q3 | Q4 |

|---|---|---|---|---|

| Q1 | 10.17% | 10.30% | 10.62% | 8.94% |

| Q2 | 10.13% | 11.32% | 9.45% | 10.71% |

| Q3 | 10.47% | 9.31% | 8.84% | 11.91% |

| Q4 | 7.40% | 9.06% | 8.37% | 12.89% |

Visually, there does not appear to be evidence in the empirical table to support the hypothesis that, even when controlling for correlation, the market compensates investors for bearing risk in the equity markets – where, again, risk here is equated to realized own-volatility. We may conduct more rigorous statistical tests, but we postpone those slightly.

From the table above, there is evidence that over this period, ex post equity returns are negatively associated with ex ante volatility within each quartile of correlation, so that the control we established does not support the implications of the CAPM model.

Perhaps, though, we should focus again on some sort of risk-adjusted returns. We again take the ratio of the sample average return to the sample volatility and present the results in the table below.

| Volatility\Correlation | Q1 | Q2 | Q3 | Q4 |

|---|---|---|---|---|

| Q1 | 0.86 | 0.89 | 0.85 | 0.59 |

| Q2 | 0.70 | 0.72 | 0.57 | 0.53 |

| Q3 | 0.58 | 0.50 | 0.42 | 0.49 |

| Q4 | 0.25 | 0.27 | 0.24 | 0.36 |

The monotonicity is more dramatic here. But in the wrong direction of the hypotheses of CAPM. In fact, we see that the most attractive performance per unit of risk is to be found in the lowest quartile of correlation within the lowest quartile of volatility.

One may conduct the same analysis as in the preceding example sorting by estimated \(\beta\) from (4.4). Based on the results here, one might expect that the empirical relationship between \(\beta\) and ex post returns is again negative. Interestingly, the double sort methodology in \(\beta\) and correlation does not produce the same results as the volatility/correlation double sorts. In particular, in the period under study, equity market returns show a preference for low \(\beta\) with high correlation.

At first glance, this seems to be counter-intuitive. However, we may interpret \(\beta\) as the sensitivity of an asset’s return to movements in the market. Correlation, on the other hand, simply measures the linear relationship between the asset and market’s returns. A high correlation indicates the asset’s returns can in fact be explained by the market.

An alternative approach is to re-sort to (3.11) where we may rearrange terms to find

\[\rho=\beta\frac{\sigma_m}{\sigma_r},\]

so that a higher correlation indicates a lower own-volatility. These findings are left to the reader to verify.

4.2 Maximum Likelihood

In the preceding section we obtained estimates for \(\alpha\) and \(\beta\) in CAPM models by finding critical points (minima) of quadratic functions. While we were

able to relate these values to method of moments estimators, we did not supply a justification for the usage of least squares. Here we establish one such justification.

Let \(\{X_i\}_{i=1}^N\) be random variables with joint density \(f_\theta(\cdot)\) , where \(\theta\) is some vector of parameters which determine the density. For example, if \(f_\theta(\cdot)=\phi_{\mu,\Sigma}(\cdot)\) , \(\theta=(\mu,\Sigma)\) . Or, in the case of a Student \(t\) distribution, \(\theta\) may include the degrees of freedom. We have become familiar with the need for estimators, and the present case will be no exception. In particular, we identify an estimator \(\hat{\theta}\) .

Given observations \(x_1,\dots,x_N\) , the likelihood function based on these observations is

\[L(\theta)=f_\theta(x_1,\dots,x_N).\] (4.6)

The maximum likelihood estimator of \(\theta\) , \(\hat{\theta}\) , is the maximizer of

\[\max_\theta f_\theta(x_1,\dots,x_N).\] (4.7)

The general case is likely not applicable. A common implementation of maximum likelihood estimation assumes that \(\{X_i\}_{i=1}^N\) are iid, reducing the joint density \(f_\theta(\cdot)\) to a product of identical marginals,

\[f_\theta(x_1,\dots,x_N)=\prod_{i=1}^N\tilde{f}_\theta(x_i).\] (4.8)

Maximizing a product directly is generally difficult. Logarithms transform products into sums, preserve order, and are continuous on their domain. As such, the maximizer of

\[\max_\theta \log(f_\theta(x_1,\dots,x_N))\] (4.9)

is exactly the maximizer of (4.7). In the case of iid \(\{X_i\}_{i=1}^N\) , (4.8) becomes

\[\log(f_\theta(x_1,\dots,x_N))=\sum_{i=1}^N\log(\tilde{f}_\theta(x_i)).\] (4.10)

We call the logarithm of the likelihood function \(\log(L(\theta))\) the log-likelihood function.

Example 4.2.1. Let \(\{X_i\}_{i=1}^N\) be univariate normal random variables, and assume that the \(X_i\) 's are iid. We have that \(\theta=(\mu,\sigma)\) . The likelihood function given observations \(\{x_i\}_{i=1}^N\) is

\[L(\mu,\sigma)=\prod_{i=1}^N\frac{1}{\sqrt{2\pi\sigma^2}}\exp\left(-\frac{(x_i-\mu)^2}{2\sigma^2}\right)\]

or

\[L(\mu,\sigma)\propto\prod_{i=1}^N\frac{1}{\sigma}\exp\left(-\frac{(x_i-\mu)^2}{2\sigma^2}\right)\]

where \(\propto\) denotes proportional to; viz., maximizing \(L(\cdot)\) is equivalent to maximizing \(c \cdot L(\cdot)\) for any constant \(c\) , so we may eliminate the normalization factor \(\frac{1}{\sqrt{2\pi}}\) . The log-likelihood function becomes

\[l_0(\mu, \sigma) = - \sum_{i=1}^{N} \left[ \log(\sigma) + \frac{(x_i - \mu)^2}{2\sigma^2} \right].\]

Now maximizing \(l_0(\cdot)\) is equivalent to minimizing

\[l(\mu, \sigma) = \sum_{i=1}^{N} \left[ \log(\sigma) + \frac{(x_i - \mu)^2}{2\sigma^2} \right].\]

As before, the minimum will occur exactly when \(\nabla l = 0\) .

Looking at the \(\frac{\partial l}{\partial \mu}\) in the gradient, we solve

\[-\frac{1}{\sigma^2} \sum_{i=1}^{N} [x_i - \mu] = 0,\]

giving the estimator we have seen previously:

\[\hat{\mu} = \frac{1}{N} \sum_{i=1}^{N} x_i.\]

The maximum likelihood estimator of \(\sigma^2\) , however, is given by

\[\hat{\sigma}_N^2 = \frac{1}{N} \sum_{i=1}^{N} (x_i - \mu)^2,\]

a biased estimator of the variance.

Maximum likelihood also finds application in the model

\[y_t = \beta' x_t + \epsilon_t\]

where \(\beta \in \mathbb{R}^p\) , \(x_t \in \mathbb{R}^p\) is nonrandom, and the \(\{\epsilon_t\}\) are iid distributed as

\[\epsilon \sim N(0, \sigma^2).\]

In this case, the likelihood function in \((\beta, \sigma^2)\) may be written as,

\[\begin{aligned} L(\beta, \sigma^2) &\propto \prod_{i=1}^{N} \frac{1}{\sigma} \exp \left( \frac{\epsilon_i^2}{2\sigma^2} \right) \\ &= \prod_{i=1}^{N} \frac{1}{\sigma} \exp \left( \frac{(y_i - \beta' x_i)^2}{2\sigma^2} \right). \end{aligned}\]

The log-likelihood function is

\[l_0(\beta, \sigma^2) = -\sum_{i=1}^N \log(\sigma) + \frac{(y_t - \beta' x_t)^2}{2\sigma^2}\]

which is maximized exactly when the function

\[l(\beta, \sigma^2) = N \log(\sigma) + \frac{1}{2\sigma^2} \sum_{i=1}^N (y_t - \beta' x_t)^2\]

is minimized. The minimum occurs when the gradient is identically zero,

\[\frac{\partial l}{\partial \beta_i} = 0\]

for \(i = 1, \dots, N\) . This coincides exactly with the minimizer of

\[f(\beta) = \sum_{i=1}^N (y_t - \beta' x_t)^2\]

so that minimizing the quadratic function \(f(\cdot)\) coincides with the maximum likelihood estimator when residuals are independent and normally distributed. We will explicitly state when this assumption is necessary in our proofs that follow.

Right. CAPM as a predictive model of returns fails empirically — higher risk doesn't earn higher reward, and often the opposite.

But CAPM (and OLS) as descriptive machinery is still useful: estimate β to measure market exposure, estimate α to identify anomalies, decompose variance into systematic vs. idiosyncratic. The math is fine; it's the efficient-market interpretation that doesn't survive contact with data.

4.3 OLS

Consider the model

\[y_t = \beta' x_t + \epsilon_t \quad (4.11)\]

with \(\beta \in \mathbb{R}^p\) , \(x_t \in \mathbb{R}^p\) nonrandom, \(\{\epsilon_t\}\) univariate random variables. In particular, we assume that each \(y_t\) is a univariate random variable, and that the randomness is driven by \(\epsilon_t\) . We may rewrite the above as

\[Y = X\beta + \epsilon \quad (4.12)\]

with

\[Y = \begin{pmatrix} y_1 \\ \vdots \\ y_T \end{pmatrix}, \quad X = \begin{pmatrix} x_{11} & \dots & x_{1p} \\ \vdots & & \vdots \\ x_{T1} & \dots & x_{Tp} \end{pmatrix}, \quad \epsilon = \begin{pmatrix} \epsilon_1 \\ \vdots \\ \epsilon_T \end{pmatrix}.\]

Here, \(\epsilon\) is now a random vector.

We call the estimator, \(\hat{\beta}\) , obtained from minimizing the quadratic function

\[\min_{\beta} ||Y - X\beta||^2 \quad (4.13)\]

the ordinary least squares (OLS) estimate of \(\beta\) . We have seen in the previous section that in the case that \(\epsilon \sim N(0, \sigma^2 I)\) , the OLS estimate and maximum likelihood estimate coincide.

The following assumptions are collectively, often referred to as the Gauss-Markov assumptions. We have

- \(X \in \mathbb{R}^{N \times p}\) is a nonrandom matrix with \(N > p\) and full rank.

- \(\epsilon\) is a random vector with \(\mathbb{E}(\epsilon) = 0\) .

- \(\text{Var}(\epsilon_t) = \sigma^2\) for all \(t\) , and \(\text{Cov}(\epsilon_i, \epsilon_j) = 0\) for \(i \neq j\) ; i.e., \(\text{Cov}(\epsilon) = \sigma^2 I\) .

In addition to the above, we will resort to a distributional assumption for \(\epsilon\) when we develop distributional properties for \(\hat{\beta}\). In particular, we will assume that \(\epsilon \sim N(0, \sigma^2 I)\).

Theorem 4.3.1. Under the first two Gauss-Markov assumptions, the OLS estimate of \(\beta\) given by

\[\hat{\beta} = (X'X)^{-1}X'Y \quad (4.14)\]

is unbiased.

Proof. Since \(X\) is full rank, we know that \((X'X)^{-1}\) is invertible. Looking at the equation

\[Y = X\beta + \epsilon\]

then, we have

\[\begin{aligned} X'Y &= X'X\beta + X'\epsilon \\ (X'X)^{-1}X'Y &= \beta + (X'X)^{-1}X'\epsilon. \end{aligned}\]

Defining \(\hat{\beta}\) as the term on the left hand side,

\[\hat{\beta} = (X'X)^{-1}X'Y,\]

we see that

\[\begin{aligned} \mathbb{E}(\hat{\beta}) &= \mathbb{E}(\beta) + \mathbb{E}((X'X)^{-1}X'\epsilon) \\ &= \beta + (X'X)^{-1}X'\mathbb{E}(\epsilon) \\ &= \beta \end{aligned}\]

so that \(\hat{\beta}\) is an unbiased estimator of \(\beta\) .

Notice in our previous theorem that the estimator \(\hat{\beta}\) is a random variable since \(Y\) is a random vector driven by \(\epsilon\) . As such, we may obtain a point estimate based on a specific set of observations, but we may also want to, as in our work with the estimator for the mean, understand the distribution of \(\hat{\beta}\) . Similarly, the \(\epsilon\) values are unobservable. As such, we will be left with estimators of \(\epsilon\) . Under the full Gauss-Markov assumptions with the distributional assumption on \(\epsilon\) , we may satisfactorily address both of these curiosities. Before doing so, however,

we identify an important structural property of the matrix \(X(X'X)^{-1}X'\) which will be used extensively and which, further, lends to more interpretability of the OLS estimators.

We define the matrix \(H\) as

\[H = X(X'X)^{-1}X'. \quad (4.15)\]

In the literature, \(H\) is often called the hat matrix. We have immediately that

\[\begin{aligned} HY &= X(X'X)^{-1}X'Y \\ &= X\hat{\beta}. \end{aligned}\]

That is, the effect of multiplying \(Y\) by \(H\) is to obtain our estimate, \(X\hat{\beta}\) of \(X\beta\) . We call

\[\hat{Y} = X\hat{\beta} \quad (4.16)\]

and show in what follows that \(\hat{Y}\) is the projection of \(Y\) onto the space spanned by the columns of \(X\) . We begin by showing that \(H\) is a projection matrix.

We say a matrix \(P\) is a projection matrix if it satisfies the following two conditions: \(P^2 = P\) and \(P' = P\) . \(P\) is necessarily a square matrix. Projection matrices, \(P\) , have several useful properties.

Projection Matrix Property 1: The only eigenvalues of \(P\) a projection matrix are 0 and 1.

Proof. Let \(\lambda\) be an eigenvalue of \(P\) with associated eigenvalue, \(v\) . By the first condition of being a projection matrix, we have that

\[\begin{aligned} Pv &= \lambda v \\ P^2v &= P(\lambda v) = \lambda Pv = \lambda^2v \end{aligned}\]

so that

\[\lambda v = \lambda^2v\]

or \((\lambda^2 - \lambda)v = 0\) . Since \(v\) is nonzero, we have that \(\lambda(\lambda - 1) = 0\) , proving the result.

The intuition behind the preceding result is that projection matrices (under some appropriate basis) fix some subspace of \(\mathbb{R}^N\) and send to zero its orthogonal complement. This motivates the third property we will note. First, we look at \(I - P\) .

Projection Matrix Property 3: \(I - P\) is also a projection.

Proof. We verify the properties of a projection matrix. We have

\[\begin{aligned} (I - P)^2 &= I - 2P + P^2 \\ &= I - P \end{aligned}\]

and

\[(I - P)' = I' - P' = I - P.\]

Returning to interpreting the eigenvalue property, we have:

Projection Matrix Property 3: Any vector \(x\) may be decomposed as \(x = u + v\) where \((u, v) = 0\) and \(u = Px\) . In this case, \(||x||^2 = ||u||^2 + ||v||^2\) .

Proof. The statement of the property dictates the proof. Let \(u = Px\) . We verify the claims in turn. We have yet to define \(v\) , but note that if

\[x = u + v\]

then necessarily

\[v = x - u = x - Px = (I - P)x.\]

The inner product of \(u\) and \(v\) is now

\[\begin{aligned}(u, v) &= (Px, (I - P)x) \\ &= P(x, x)(I - P)' \\ &= ||x||^2 P(I - P) \\ &= 0\end{aligned}\]

Since \(P(I - P) = P - P^2 = 0\) . The norm of \(x\) , then becomes

\[\begin{aligned}||x||^2 &= (x, x) \\ &= (u + v, u + v) \\ &= (u, u) + 2(u, v) + (v, v) \\ &= ||u||^2 + ||v||^2.\end{aligned}\]

We call \(u = Px\) in the above proof the projection of \(x\) by \(P\) .

Returning to the matrix obtained from our OLS estimate, the matrix \(H\) is a projection matrix since

\[\begin{aligned}H^2 &= X(X'X)^{-1}X'X(X'X)^{-1}X' \\ &\text{by associativity} \\ &= X(X'X)^{-1}X' \\ &= H.\end{aligned}\]

Now for matrices \(A\) and \(B\) , \((A')^{-1} = (A^{-1})'\) , and \((AB)' = B'A'\) so that

\[\begin{aligned}H' &= (X(X'X)^{-1}X')' \\ &= X((X'X)^{-1})'X' \\ &= X((X'X)')^{-1}X' \\ &= X((X'X))^{-1}X' \\ &= H.\end{aligned}\]

As a result, \(\hat{Y} = HY\) is the projection of \(Y\) by \(H\) . By construction, then, \(\hat{Y}\) is the projection of \(Y\) onto the space spanned by the columns of \(X\) .

Defining the estimator of the residuals, \(\epsilon\) by

\[\hat{\epsilon} = Y - \hat{Y}, \quad (4.17)\]

we have that \(\hat{\epsilon} = (I - H)Y\) , or that the estimated residuals are a projection of \(Y\) onto the orthogonal complement of the space spanned by the columns of \(X\) . Necessarily, then, \(\hat{\epsilon}\) and \(\hat{Y}\) are orthogonal; i.e., \((\hat{\epsilon}, \hat{Y}) = 0\) . We also have the deconstruction \(Y = \hat{Y} + \hat{\epsilon}\) with norm \(||Y||^2 = ||\hat{Y}||^2 + ||\hat{\epsilon}||^2\) .

We may also show that

\[\hat{\epsilon} = (I - H)\epsilon \quad (4.18)\]

but leave this to the reader.

I confess I skimmed this quickly, but it 'feels' like SVD only more black-and-white, more binary.

Good intuition. SVD gives you a spectrum of singular values — directions weighted by importance. Projection matrices are the degenerate case: eigenvalues are only 0 or 1. You're either in the subspace (kept perfectly) or orthogonal to it (annihilated).

\(H\) keeps whatever lies in the column space of \(X\); \((I-H)\) keeps whatever's orthogonal to it. No partial shrinkage, no soft weighting — just a clean split.

Notice that under the Gauss-Markov assumptions, the covariance of the estimator of \(\epsilon\) is not a diagonal matrix. In particular, we have

\[\begin{aligned} \text{Cov}(\hat{\epsilon}) &= \text{Cov}((I - H)Y) \\ &= (I - H)\text{Cov}(Y)(I - H)' \\ &= (I - H)\text{Cov}(\epsilon)(I - H) \\ &= \sigma^2(I - H)^2 \\ &= \sigma^2(I - H). \end{aligned}\]

And

\[\text{Var}(\hat{\epsilon}_i) = \sigma^2(1 - h_{ii}),\]

so that the variables, the so-called jackknife residuals,

\[\frac{\hat{\epsilon}_i}{\sigma\sqrt{1 - h_{ii}}} \quad (4.19)\]

have constant unit variance if the model is specified correctly. Notice, however, that \(\sigma\) is not known and must be estimated. We return to this issue in the exercises, but will note it below as well in determining some statistical properties for \(\hat{\beta}\).

The \(\epsilon\)'s are "supposed" to be uncorrelated. But if they are indeed uncorrelated, that doesn't mean their projections onto some smaller space spanned by the model will be uncorrelated, and so we get off-diagonals.

Under these same assumptions, we also have that the covariance of \(\hat{\beta}\) is

\[\text{Cov}(\hat{\beta}) = \sigma^2(X'X)^{-1}. \quad (4.20)\]

This follows directly from \(\hat{\beta} = \beta + (X'X)^{-1}X'\epsilon\) , giving

\[\begin{aligned} \text{Cov}(\hat{\beta}) &= \text{Cov}(\beta + (X'X)^{-1}X'\epsilon) \\ &= (X'X)^{-1}X'\text{Cov}(\epsilon)((X'X)^{-1}X')' \\ &= \sigma^2(X'X)^{-1}X'((X'X)^{-1}X')' \\ &= \sigma^2(X'X)^{-1}X'X(X'X)^{-1} \\ &= \sigma^2(X'X)^{-1}. \end{aligned}\]

If we assume further that \(\epsilon \sim N(0, \sigma^2I)\) , then from what has proceeded, \(\hat{\beta} \sim N(\beta, \sigma^2(X'X)^{-1})\) . In this case,

\[\frac{\hat{\beta}_i - \beta_i}{\sigma\sqrt{c_{ii}}} \sim N(0, 1), \quad (4.21)\]

where \(C = (X'X)^{-1}\) .

Notice that in both of the preceding examples we have formulated our final result in terms of the unknown parameter \(\sigma\) . We will necessarily require an estimator \(s\) of \(\sigma\) . Having this estimator in hand, we will be in a position to look at the distribution of

\[\frac{\hat{\beta}_i - \beta_i}{s\sqrt{c_{ii}}}, \quad (4.22)\]

which, perhaps surprisingly, will be the Student \(t\) distribution.

Not quite — the errors themselves are still assumed normal. But when you estimate σ with s (from the residuals), you introduce extra uncertainty.

The ratio of a standard normal to an independent √(χ²/df) gives you a t-distribution. Since s² is estimated from the residuals (which have n−p degrees of freedom), the standardized statistic follows t(n−p) rather than N(0,1).

Same reason we use t-tests instead of z-tests when variance is unknown.

We begin by proving that \(s\) defined by

\[s^2 = \frac{1}{N-p} \sum_{t=1}^{N} \hat{\epsilon}_t^2 \quad (4.23)\]

is an unbiased estimator of \(\sigma\) , and that under the Gauss-Markov assumptions with added distributional requirement, \(\epsilon \sim N(0, \sigma^2 I)\) ,

\[\frac{N-p}{\sigma^2} s^2 \sim \chi_{N-p}^2 \quad (4.24)\]

where \(\chi_\nu^2\) is the Chi-Squared distribution with \(\nu\) degrees of freedom defined as the sum of squared iid standard normal variables; viz., we say \(W \sim \chi_\nu^2\) if

\[W = Z_1^2 + \cdots + Z_\nu^2 \quad (4.25)\]

with \(Z_i \sim N(0, 1)\) iid.

Proof. Let

\[\hat{\epsilon} = \begin{pmatrix} \hat{\epsilon}_1 \\ \vdots \\ \hat{\epsilon}_N \end{pmatrix}.\]

be the OLS estimate of \(\epsilon\) in (4.12). Since \(\mathbb{E}(\hat{\epsilon}) = 0\) , the covariance of \(\hat{\epsilon}\) is exactly

\[\text{Cov}(\hat{\epsilon}) = \mathbb{E}(\hat{\epsilon}\hat{\epsilon}')\]

We have, implementing a technique seen previously, that

\[||\hat{\epsilon}||^2 = \hat{\epsilon}'\hat{\epsilon} = \text{tr}(\hat{\epsilon}'\hat{\epsilon}).\]

Now by linearity we have that for random matrices, \(A\) , \(\mathbb{E}(\text{tr}(A)) = \text{tr}(\mathbb{E}(A))\) so that

\[\mathbb{E}(\text{tr}(\hat{\epsilon}'\hat{\epsilon})) = \text{tr}(\mathbb{E}(\hat{\epsilon}'\hat{\epsilon})).\]

And, as before,

\[\text{tr}(\mathbb{E}(\hat{\epsilon}'\hat{\epsilon})) = \text{tr}(\mathbb{E}(\hat{\epsilon}\hat{\epsilon}')).\]

So that

\[\begin{aligned} \mathbb{E}(||\hat{\epsilon}||^2) &= \text{tr}(\text{Cov}(\hat{\epsilon})) \\ &= \text{tr}(\sigma^2(I - H)). \end{aligned}\]

Since \(H\) is a projection into a \(p\) -dimensional space, there exists a basis such that

\[H = \begin{pmatrix} I_p & 0 \\ 0 & 0_{N-p} \end{pmatrix}.\]

This follows from the fact that the eigenvalues of \(H\) are zero or one, and that the rank of \(H\) is \(p\) . Clearly, under this basis, \(\text{tr}(H) = p\) . Finally, since \(\text{tr}(\cdot)\) is independent of the choice of basis, \(\text{tr}(H) = p\) under any basis. Similarly, \(I - H\) will necessarily have trace

\[\text{tr}(I - H) = N - p.\]

Therefore,

\[\begin{aligned} \mathbb{E}(\|\hat{\epsilon}\|^2) &= \sigma^2 \text{tr}(I - H) \\ &= \sigma^2(N - p), \end{aligned}\]

or,

\[\begin{aligned} \mathbb{E}\left(\sum_{t=1}^T \hat{\epsilon}_t^2\right) &= \mathbb{E}\left(\sum_{t=1}^T (y_t - \beta' x_t)^2\right) \\ &= \sigma^2(N - p), \end{aligned}\]

giving that the scaled sum of the squared residuals,

\[s^2 = \frac{1}{N-p} \mathbb{E}(\|\hat{\epsilon}\|^2)\]

is an unbiased estimator of \(\sigma^2\) . This result is independent of the distributional assumption of \(\epsilon\) ; i.e., it used only the Gauss-Markov assumptions.

If we further make the distributional assumption that \(\epsilon, \epsilon \sim N(0, \sigma^2 I)\) , we may show that

\[\frac{1}{\sigma^2} \|\hat{\epsilon}\|^2 \sim \chi^2_{N-p} \quad (4.26)\]

as in (4.24).

Let \(Q\) be the orthogonal change of basis matrix for the given matrix \(H\) such that

\[QHQ' = \begin{pmatrix} I_p & 0 \\ 0 & 0_{N-p} \end{pmatrix}.\]

Notice that this also implies that

\[Q(I - H)Q' = \begin{pmatrix} 0_p & 0 \\ 0 & I_{N-p} \end{pmatrix}.\]

For this \(Q\) , define

\[Z = \frac{1}{\sigma} Q\epsilon = \begin{pmatrix} Z_1 \\ \dots \\ Z_N \end{pmatrix}.\]

The key observation here is that \(\hat{\epsilon} = (I - H)\epsilon\) and that the simplified basis allows for a more tractable approach.

Under our last assumption, \(Z\) is a multivariate normal random variable since a linear combination of jointly normal random variables is jointly normal. The mean and covariance of \(Z\) are given by

\[\begin{aligned}\mathbb{E}(Z) &= \mathbb{E}\left(\frac{1}{\sigma}Q\epsilon\right) \\ &= \frac{1}{\sigma}Q\mathbb{E}(\epsilon) \\ &= 0,\end{aligned}\]

by linearity of the expectation operator, and

\[\begin{aligned}\text{Cov}(Z) &= \text{Cov}\left(\frac{1}{\sigma}Q\epsilon\right) \\ &= \frac{1}{\sigma^2}Q\text{Cov}(\epsilon)Q' \\ &= \frac{\sigma^2}{\sigma^2}QIQ' \\ &= I\end{aligned}\]

since \(QQ' = Q'Q = I\) . Hence

\[Z \sim N(0, I).\]

This also shows that the \(\{Z_t\}\) are iid. We may recover \(\epsilon\) as

\[\epsilon = \sigma Q'Z.\]

Since \(Q\) is orthogonal, we have that

\[\begin{aligned}||Q\hat{\epsilon}||^2 &= \hat{\epsilon}'Q'Q\hat{\epsilon} \\ &= \hat{\epsilon}'\hat{\epsilon} \\ &= ||\hat{\epsilon}||^2,\end{aligned}\]

giving

\[\begin{aligned}||\hat{\epsilon}||^2 &= ||Q\hat{\epsilon}||^2 \\ &= ||Q(I - H)\epsilon||^2 \\ &= ||Q(I - H)Q'Q\epsilon||^2 \\ &= \sigma^2||Q(I - H)Q'Z||^2 \\ &= \sigma^2 Z' \begin{pmatrix} 0_p & 0 \\ 0 & I_{N-p} \end{pmatrix}^2 Z \\ &= \sigma^2 \sum_{t=p+1}^{N} Z_t^2.\end{aligned}\]

As a result, we clearly have that \(\frac{1}{\sigma^2}||\hat{\epsilon}||^2\sim\chi_{N-p}^2\) as desired.

We may now return to the ratio in (4.22),

\[\frac{\hat{\beta}_i-\beta_i}{s\sqrt{c_{ii}}}.\]

We proved that under the full Gauss-Markov assumptions with additional distributional assumption for residuals,

\[\frac{\hat{\beta}_i-\beta_i}{\sigma\sqrt{c_{ii}}}\sim N(0,1).\]

We use this fact along with the result just obtained to decompose (4.22) as

\[\frac{\hat{\beta}_i-\beta_i}{s\sqrt{c_{ii}}}=\frac{\frac{\hat{\beta}_i-\beta_i}{\sigma\sqrt{c_{ii}}}}{\frac{s}{\sigma}}.\]

The result is a ratio of random variables with known distributions. In particular, in the numerator we have a standard normal random variable. The denominator is the square root of a \(\chi^2\) random variable with \(N-p\) degrees of freedom scaled by \(\frac{1}{N-p}\) .

Remarkably, a random variable

\[T=\frac{Z}{\sqrt{\frac{1}{M}X}} \tag{4.27}\]

with \(Z\sim N(0,1)\) and \(X\sim\chi_M^2\) and \(Z\) and \(X\) independent is distributed as a standard Student \(t\) random variable with \(M\) degrees of freedom as seen in (2.13); i.e., \(T\sim\text{St}(0,1;M)\) . We will abbreviate this notation as

\[T\sim t_M. \tag{4.28}\]

We do not prove the correspondence between our initial definition and that presented here, however.

The independence between \(\hat{\beta}_i\) and \(s^2\) has not been shown either. With the same assumptions as above we have that

\[\hat{\epsilon}=(I-H)\epsilon\] \[X(\hat{\beta}-\beta)=H\epsilon,\]

so that the covariance inner product between \(\hat{\epsilon}\) and \(X(\hat{\beta}-\beta)\) satisfies

\[(\hat{\epsilon},X(\hat{\beta}-\beta))=(I-H)\epsilon,H\epsilon)\] \[=(I-H)\sigma^2IH\] \[=\sigma^2(H-H^2)=0.\]

Under the normality assumption for \(\epsilon\) , this shows that \(\hat{\epsilon}\) and \(X(\hat{\beta}-\beta)\) are independent as well. Since we are also assuming that \(X\) is full rank,

\[\begin{aligned}0 &= (\hat{\epsilon}, X\hat{\beta}) ((X'X)^{-1}X')' \\&= (\hat{\epsilon}, \hat{\beta})\end{aligned}\]

as well, so that \(\hat{\epsilon}\) and \(\hat{\beta}\) are independent. Therefore \(s^2\) and the components of \(\hat{\beta}\) are independent as well.

4.3.1 Hypothesis Testing, Confidence Intervals, and Prediction Intervals

With the distribution of \(\frac{\hat{\beta}_i-\beta_i}{s\sqrt{c_{ii}}}\) now known (under the extended Gauss-Markov assumptions), we may construct symmetric confidence intervals for each \(\beta_i\) in turn. In particular, since \(t_{N-p}\) is symmetric about zero, a \(100(1-\alpha)\%\) confidence interval for \(\frac{\hat{\beta}_i-\beta_i}{s\sqrt{c_{ii}}}\) is given by

\[-t_{N-p;1-\alpha/2} \le \frac{\hat{\beta}_i - \beta_i}{s\sqrt{c_{ii}}} \le t_{N-p;1-\alpha/2}, \quad (4.29)\]

or

\[\hat{\beta}_i - s\sqrt{c_{ii}}t_{N-p;1-\alpha/2} \le \beta \le \hat{\beta}_i + s\sqrt{c_{ii}}t_{N-p;1-\alpha/2}, \quad (4.30)\]

where \(t_{N-p;1-\alpha/2}\) is the \(1-\alpha/2\) quantile of \(t_{N-p}\) . Clearly, with this choice,

\[\mathbb{P}\left(\left|\frac{\hat{\beta}_i - \beta}{s\sqrt{c_{ii}}}\right| \le t_{N-p;1-\alpha/2}\right) = 1 - \alpha.\]

We emphasize once more that this is a component-wise test.

A common issue in model development is determining whether a particular variable should be included in the model. As one approximation to the answer, we may approach the question by asking whether a particular \(\beta_i\) is likely to be zero. Necessarily this requires setting a specific confidence level. For example, for a fixed \(\alpha\) , we may determine if the interval

\[\left(\hat{\beta}_i - s\sqrt{c_{ii}}t_{N-p;1-\alpha/2}, \hat{\beta}_i + s\sqrt{c_{ii}}t_{N-p;1-\alpha/2}\right)\]

contains zero. If it does, there is evidence that the \(i\) th variable may be omitted from the model (relative to this particular confidence level \(\alpha\) ).

The preceding reasoning may be formalized into so-called hypothesis testing wherein a null hypothesis is formulated – and typically denoted by \(H_0\) – and statistical values are then measured. If the statistical values are extremely unlikely (relative to some threshold), we may reject the null hypothesis.

Example 4.3.1. We consider the null hypothesis

\[H_0: \beta_i = 0.\]

Under the null hypothesis,

\[b_i = \frac{\hat{\beta}_i}{s\sqrt{c_{ii}}} \sim t_{N-p}.\]

The probability of observing a value at least as large as \(|b_i|\) is exactly

\[p_0 = 2 \cdot (1 - S_{0,1;N-p}^{t-1}(b_i)).\]

We reject the null hypothesis at significance level \(\alpha\) if \(p_0 < \alpha\) . We call \(p_0\) the p-value of the test statistic associated with \(H_0\) .

Notice that we reject the null hypothesis exactly when

\[|b_i| > t_{N-p;1-\alpha/2},\]

which coincides with our previous observation that the confidence interval for \(\beta_i\) would in fact contain zero.

Falsely rejecting the null hypothesis when it is in fact true is called a type I error. When the extended Gauss-Markov assumptions obtain, the probability of a type I error is equivalent to the confidence level \(\alpha\) . It is not true that this holds generally.

Example 4.3.2. We return to examining ex post equity returns sorted by volatility quartiles. In our previous example we provided evidence that there is monotonicity in these ex post returns, but in a direction counter to that implied by CAPM. As such, we investigate constructing portfolios long in low trailing volatility and short in high volatility stocks.

At each month, we sort the same universe of 1,000 stocks considered previously by own volatility into quartiles, with quartile four holding the highest volatility names. At time \(t\) , let \(r_{1t}\) and \(r_{4t}\) be the average return over the next month \((t, t+1]\) for the lowest and highest quartile buckets, respectively. In addition, let \(\sigma_{1t}\) and \(\sigma_{4t}\) be the square root of the average trailing variance of the lowest and highest buckets.

We construct a portfolio with approximately equal long and short volatility by being long one unit of a portfolio of equal weighted names from quartile one, and short \(\frac{\sigma_{1t}}{\sigma_{4t}}\) units of the equally weighted names from quartile four. The return over the month post construction is given by

\[r_t = r_{1t} - \frac{\sigma_{1t}}{\sigma_{4t}} r_{4t}.\]

Over the period in our backtest, the average monthly return for this portfolio is 55 bps, annualizing to approximately 6.58%.

Regressing on a market proxy, we find the CAPM \(\alpha\) and \(\beta\) (ignoring the risk free rate) to be 0.60 and -0.0702, respectively. That is, the returns of the portfolio are generated without exposure to the market.

Further, we may consider the null hypothesis

\[H_0: \alpha = 0.\]

A confidence interval for \(\alpha\) at the confidence level 0.05 is found to be [0.0013, 0.0106] so that we may reject the null hypothesis at the 5% level.

In addition to examining confidence intervals (and making inferences based on these intervals), we may also construct confidence intervals about the mean response from the linear models considered. In particular, and again, for the original OLS model (4.12), OLS (least squares) estimates of \(\beta\) , \(\hat{\beta}\) , and assuming the Gauss-Markov with distributional assumptions obtains, an unbiased estimate of the variance of an input, \(x_t\) , is given by

\[\mathrm{Var}(\hat{y}_t) = \mathrm{Var}(\hat{\beta}'x_t) \quad (4.31)\]

\[= x_t'\mathrm{Cov}(\hat{\beta})x_t \quad (4.32)\]

\[= \sigma^2 x_t'(X'X)^{-1}x_t. \quad (4.33)\]

Following the same procedure as above, we find that a \(100 \cdot (1 - \alpha)\%\) confidence interval for \(\hat{\beta}'x_t\) is

\[\hat{\beta}'x_t \pm \left(s\sqrt{x_t'\mathrm{Cov}(\hat{\beta})x_t}\right)t_{N-p;1-\alpha/2}, \quad (4.34)\]

where, continuing our convention, we denote \(C = (X'X)^{-1}\) . The complete verification of this result, following that of (4.29), is left as an exercise.

The confidence interval just exhibited is both an estimate of the confidence interval of the mean and dependent on \(x = x_t\) being an observation in the derivation of \(\hat{\beta}\) . We may also consider the case of a new observation, or, equivalently, a prediction interval about an observation \(x\) as opposed to the mean response. This is in contrast with the previous result as we may no longer discard the variance of \(\epsilon\) . We have,

\[\begin{aligned} \mathrm{Var}(\hat{y}) &= \mathrm{Var}(\hat{\beta}'x + \epsilon) \\ &= x'\mathrm{Cov}(\hat{\beta})x + \sigma^2 \\ &= \sigma^2 x'(X'X)^{-1}x + \sigma^2, \end{aligned}\]

so that, in the usual manner, a \(100 \cdot (1 - \alpha)\%\) prediction interval for \(\hat{\beta}'x\) is

\[\hat{\beta}'x \pm \left(s\sqrt{1 + x_t'\mathrm{Cov}(\hat{\beta})x_t}\right)t_{N-p;1-\alpha/2}. \quad (4.35)\]

4.3.2 Submodel Testing

The ability to test the null hypothesis for the inclusion or exclusion of a particular variable is a powerful result. However, we may also want to construct null hypotheses about linear submodels of the full model specified in (4.12); i.e., we would like to consider the case that

\[\mathbb{E}(Y) \subseteq \mathcal{L}_0\]

for some (strict) subset \(\mathcal{L}_0\subset\mathcal{L}\) , where \(\mathcal{L}\) is the space spanned by the columns of \(X\) . Necessarily the dimension of \(\mathcal{L}_0\) will be less than \(\dim(\mathcal{L})\) . We will denote \(\dim(\mathcal{L}_0)\) by \(r\) in what follows.

Under the assumptions of the model, we have

\[\mathbb{E}(Y)=\mathbb{E}(X\beta+\epsilon)=X\beta,\] (4.36)

so that clearly

\[\mathbb{E}(Y)\subseteq\mathcal{L}.\]

From our previous work, ordinary least squares projects \(Y\) onto \(\mathcal{L}\) through the matrix \(H\) . For a fixed subspace \(\mathcal{L}_0\subset\mathcal{L}\) , we may consider a similar projection of \(Y\) onto \(\mathcal{L}_0\) by some matrix \(G\) , with

\[\hat{Y}_0=GY.\] (4.37)

Notice that since \(H\) projects into \(\mathcal{L}\) , \(GH=G\) and \(HG=G\) . As a result, \(Y-\hat{Y}\) and \(\hat{Y}-\hat{Y}_0\) are orthogonal. First note that

\[\begin{aligned}\mathbb{E}(Y-\hat{Y})&=\mathbb{E}((I-H)\epsilon)=0\\\mathbb{E}(\hat{Y}_0-\hat{Y})&=\mathbb{E}((H-G)\epsilon)=0.\end{aligned}\]

The covariance is therefore

\[\begin{aligned}\text{Cov}(Y-\hat{Y},\hat{Y}_0-\hat{Y})&=\text{Cov}((I-H)\epsilon,(H-G)\epsilon)\\&=(I-H)\text{Cov}(\epsilon)(H-G)'\\&=\sigma^2(I-H)(H-G)\\&=\sigma^2\left(H-H^2-G+HG\right)\\&=0.\end{aligned}\]

Assuming joint normality of \(\epsilon\) implies that \(Y-\hat{Y}\) and \(\hat{Y}_0-\hat{Y}\) are independent. As a result, \(||Y-\hat{Y}||^2\) and \(||\hat{Y}_0-\hat{Y}||^2\) are independent as well.

We next consider the null hypothesis

\[H_0:\mathbb{E}(Y)\subseteq\mathcal{L}_0.\] (4.38)

Under the null hypothesis, the expected value of \(Y\) lies in a space where we don't need all of the columns of \(X\) . In other words, some subset (or linear combination) of variables is sufficient to describe \(Y\) .

It also follows under \(H_0\) and the Gauss-Markov assumptions that \(\frac{1}{p-r}||\hat{Y}-\hat{Y}_0||^2\) is an unbiased estimator of \(\sigma^2\) , and further that under the extended Gauss-Markov assumptions,

\[\frac{1}{p-r}||\hat{Y}-\hat{Y}_0||^2\sim\chi_{p-r}^2\] (4.39)

The result follows considering the projection \(H-G\) (rather than \(I-H\) as in the preceding proof) and the change of basis matrix \(Q\) satisfying

\[Q(H-G)Q'=\begin{pmatrix} 0_{N-(p-r)} & 0 \\ 0 & I_{p-r} \end{pmatrix}.\]

The test statistic we will use to evaluate \(H_0\) will be

\[F=\frac{N-p}{p-r}\frac{||\hat{Y}-\hat{Y}_0||^2}{||Y-\hat{Y}||^2}\] (4.40)

We say (with clear motivation stemming from the results above) that a random variable constructed as the ratio of independent \(\chi^2\) random variables as in

\[F_{d_1,d_2}=\frac{\frac{1}{M}U}{\frac{1}{N}V}\] (4.41)

follows the F distribution with ( \(d_1, d_2\) ) degrees of freedom, where \(d_1\) and \(d_2\) are the degrees of freedom for \(U\) and \(V\) , respectively. Since under our assumptions, \(\frac{1}{\sigma^2}||Y-\hat{Y}||^2\sim\chi_{N-p}^2\) and \(\frac{1}{\sigma^2}||\hat{Y}-\hat{Y}_0||^2\sim\chi_{p-r}^2\) and independence has already been shown,

\[\frac{\frac{1}{\sigma^2(p-r)}||\hat{Y}-\hat{Y}_0||^2}{\frac{1}{\sigma^2(N-p)}||Y-\hat{Y}||^2}=\frac{N-p}{p-r}\frac{||\hat{Y}-\hat{Y}_0||^2}{||Y-\hat{Y}||^2}\sim F_{p-r,N-p},\]

giving that the test statistic in (4.40) may be tested against a known distribution. Notice that by orthogonality we have

\[||Y-\hat{Y}_0||^2=||Y-\hat{Y}||^2+||\hat{Y}-\hat{Y}_0||^2,\] (4.42)

or

\[||\hat{Y}-\hat{Y}_0||^2=||Y-\hat{Y}_0||^2-||Y-\hat{Y}||^2.\]

This gives an alternate formulation of the above as

\[\frac{N-p}{p-r}\frac{||\hat{Y}-\hat{Y}_0||^2}{||Y-\hat{Y}||^2}=\frac{N-p}{p-r}\frac{||Y-\hat{Y}_0||^2-||Y-\hat{Y}||^2}{||Y-\hat{Y}||^2}.\]

This refactoring is more than cosmetic as the ratio now only involves the terms \(||Y-\hat{Y}_0||^2\) and \(||Y-\hat{Y}||^2\) , the sum of the squared residuals from the submodel and full model, respectively; i.e., we have

\[\frac{N-p}{p-r}\frac{||\hat{Y}-\hat{Y}_0||^2}{||Y-\hat{Y}||^2}=\frac{N-p}{p-r}\frac{RSS_{\mathcal{L}_0}-RSS_{\mathcal{L}}}{RSS_{\mathcal{L}}},\]

where RSS is defined as the residual sum of squares of a given model. In particular, the full model residual sum of squares is given by

\[RSS_{\mathcal{L}}=||Y-X\hat{\beta}||^2=\sum_{t=1}^T\left(y_t-\hat{\beta}'x_t\right)^2.\]

We call the null hypothesis test constructed above an F test. As before, we reject the null hypothesis, \(H_0\) at confidence level \(\alpha\) if \(F>F_{p-r,N-p;1-\alpha}\) , where as before, \(F_{d_1,d_2,1-\alpha}\) is the \(100\cdot(1-\alpha)\) percentile of the F distribution with \(d_1\)

and \(d_2\) degrees of freedom. Notice that an \(F\) test is a one sided test, in contrast to the \(t\) test. An \(F\) test may be constructed whenever we have nested models, and gives a method for evaluating submodels; viz., if we do not reject the null hypothesis, then we may prefer the submodel giving rise to \(\mathcal{L}_0\) .

Example 4.3.3. Consider the model

\[\text{Model 1 } y_t = \beta_1 x_{1t} + \cdots + \beta_p x_{Nt} + \epsilon_t\]

and assume there are \(t = 1, \dots, T\) observations and that the extended Gauss-Markov assumptions hold. Let

\[H_0: \beta_1 = 2\beta_2.\]

Under \(H_0\) , we consider

\[\begin{aligned}\text{Model 2 } y_t &= (2\beta_2)x_{1t} + \beta_2 x_{2t} + \cdots + \beta_p x_{Nt} + \epsilon_t \\ &= \beta_2(2x_{1t} + x_{2t}) + \cdots + \beta_p x_{Nt} + \epsilon_t.\end{aligned}\]

Obtaining the RSS of both models (denoted by \(RSS_1\) and \(RSS_2\) , respectively) after this second formulation is now clear. As is the reframing of \(H_0\) in terms of a subspace of \(\mathcal{L} = \text{span}(X)\) . An \(F\) test at confidence level \(\alpha\) checks

\[(N-p)\frac{RSS_2 - RSS_1}{RSS_1}\]

against the value \(F_{1, N-p; 1-\alpha}\) . If the test statistic is greater than \(F_{1, N-p; 1-\alpha}\) , we reject the null hypothesis.

4.3.3 Variable Selection

In every case in OLS, a model with more input variables will fit better than one with less. However, additional regressors in the design matrix, \(X \in \mathbb{R}^{N \times p}\) , increases the chances of collinearity among the columns of \(X\) . In the worst case, this gives a singular \(X'X\) , but may also produce nearly singular matrices as well.

Let

\[X'X = Q\Lambda Q'\]

be the decomposition of \(X'X\) into its eigenvalues; i.e., \(\Lambda = \text{diag}(\lambda_1, \dots, \lambda_p)\) and \(Q\) orthonormal. The inverse of \(X'X\) is given

\[(X'X)^{-1} = Q\Lambda^{-1}Q'.\]

If one of the \(\lambda_i\) is close to zero we have that \(\Lambda^{-1}\) has a very large diagonal element. Therefore, in the case of collinearity in the columns of \(X\) , the covariance of \(\hat{\beta}\) given by \(\sigma^2 (X'X)^{-1}\) , is potentially very large. In other words, in the case of collinearity, the OLS estimates are less certain.

Hence some balance between the addition of variables for fit and estimation accuracy must necessarily be observed. In what follows we look at various step-wise selection procedures. In each case we let \(P\) be the maximum number of possible regressors. Notice that considering each possible model is computationally difficult due to the combinatoric nature of the problem. Instead we focus on building up a model one factor at a time, building a model by paring away factors from the \(P\) available, and a hybrid of these two methods.

Forward Selection

For a design matrix \(\tilde{X} \in \mathbb{R}^{N \times p'}\) , we define the partial correlation between \(\{y_i\}_{i=1}^N\) and \(\{w_i\}_{i=1}^N\) with respect to \(\tilde{X}\) to be the correlation of the estimated residuals \(\{\hat{\epsilon}_{yi}\}\) and \(\{\hat{\epsilon}_{wi}\}\) given by

\[\hat{\epsilon}_{yi} = y_i - \hat{y}_i\]

and

\[\hat{\epsilon}_{wi} = w_i - \hat{w}_i\]

where \(\hat{y}_i\) and \(\hat{w}_i\) are the OLS regression estimates of \(y_i\) and \(w_i\) , respectively, using the design matrix \(\tilde{X}\) .

In the forward selection algorithm, we initialize by calculating the correlation between \(\{y_i\}_{i=1}^N\) and each column of \(X\) , \(\{x_{ij}\}_{i=1}^N\) , for \(j = 1, \dots, P\) . This gives

a set of correlations \(\{\hat{\rho}_j\}_{j=1}^P\) . The first factor of the model is the regressor associated with the maximum correlation in this set.

Let \(\tilde{X}_P\) be the design matrix with all regressors included, and define \(\tilde{X}_{P-k}\) as the resulting matrix after removing the \(k\) regressors added to the model under the forward selection procedure. Similarly, let the design matrix of the model at this point be denoted \(X_k\) . To determine the next addition (should there be one), we look at the partial correlation between \(\{y_i\}_{i=1}^N\) and each column of \(\tilde{X}_{P-k}\) with respect to \(X_k\) .

A candidate model is then considered by adding the regressor with maximum partial correlation to the \(k\) factors already selected. Denote this new regressor by \(x^{k+1}\) . A partial \(F\) -test is then conducted at a fixed confidence level (usually 0.05 or 0.01) between the candidate model the model with \(k\) factors. If we fail to reject the null hypothesis

\[H_0: \beta_{k+1} = 0,\]

the procedure terminates and the model is fixed with \(k\) factors and design matrix \(X_k\) . Otherwise, the design matrix is updated to \(X_{k+1}\) by adding \(x^{k+1}\) and another regressor is considered.

Example 4.3.4. We have primarily considered equity returns in our modeling thus far. Further, we have only considered technical data; i.e., data coming from market pricing. Here we look at explaining the cross section corporate bond spreads through a model using a mixture of technical and so-called fundamental data. We consider four possible regressors:

- Debt to Market Cap: defined as the ratio of the sum of long and short term debt as reported by a company on its balance sheet to the most recent market cap (stock price \(\times\) common shares outstanding).

- Enterprise Value: The sum of

- Market Cap: most recent stock price \(\times\) the number of common shares outstanding

- Preferred Equity: the value of preferred equity reported on the company's balance sheet. Preferred equity offers a slightly senior position to equity holders, often with fixed dividend payments similar to a fixed income instrument

- Total Debt: the sum of long and short term debt held by the company as reported on their balance sheet

- Minority Interest: the value of the company's subsidiaries owned by minority shareholders as reported on the balance sheet

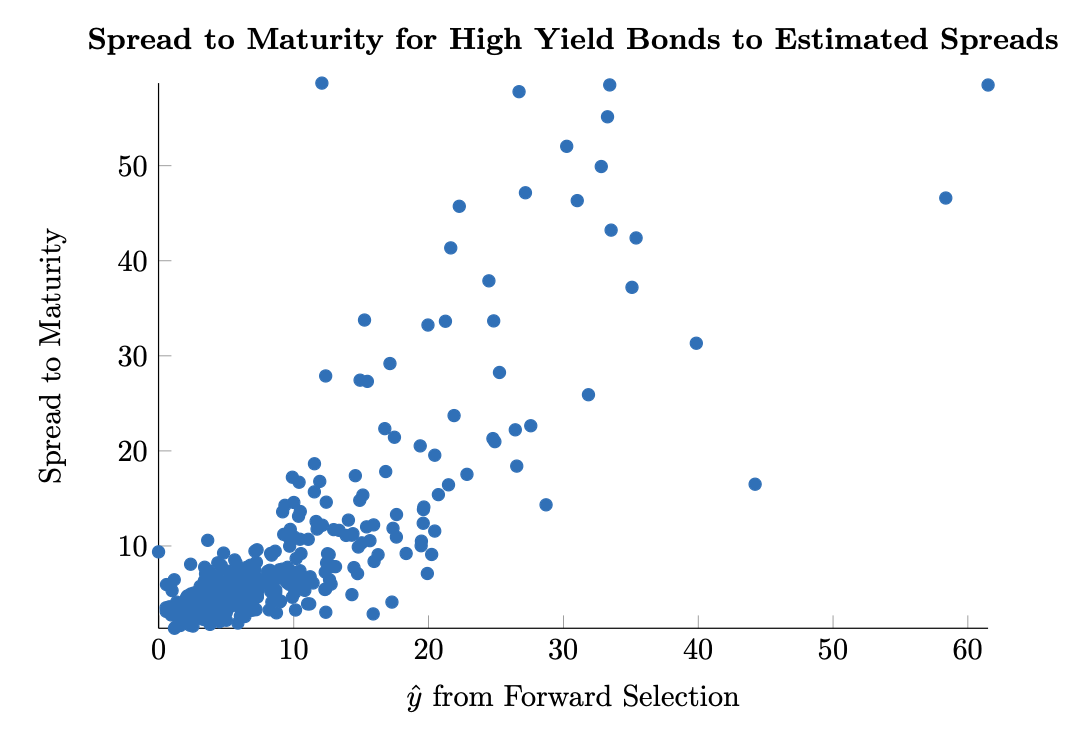

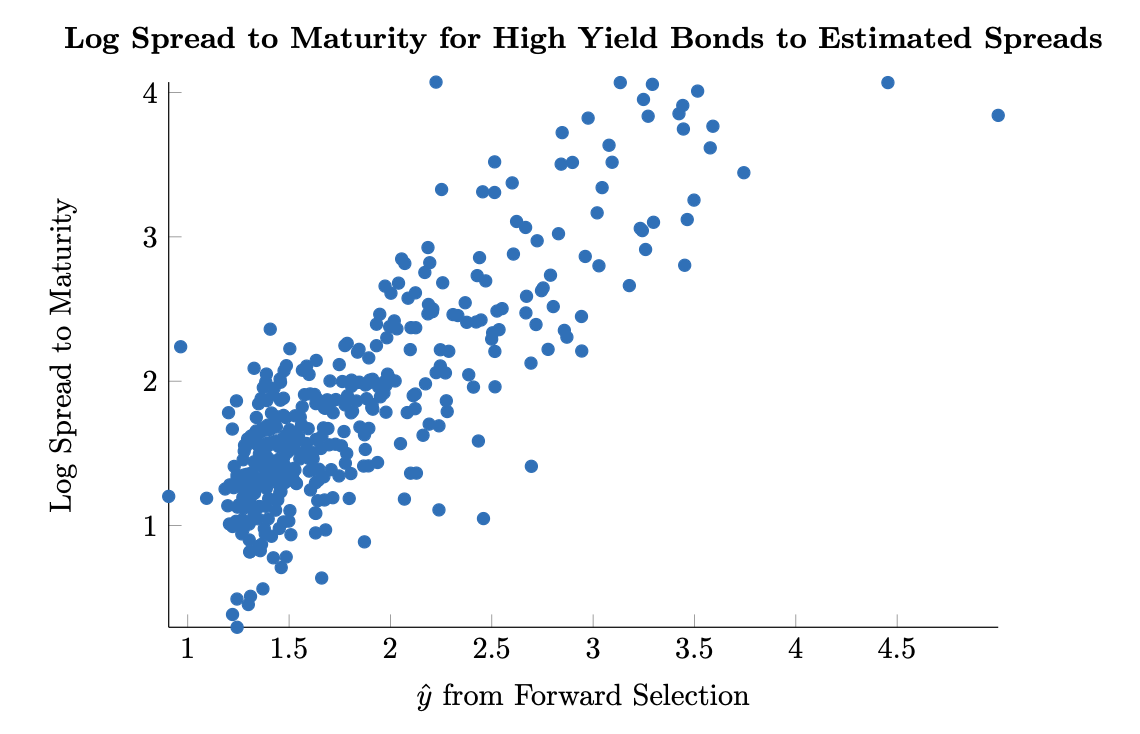

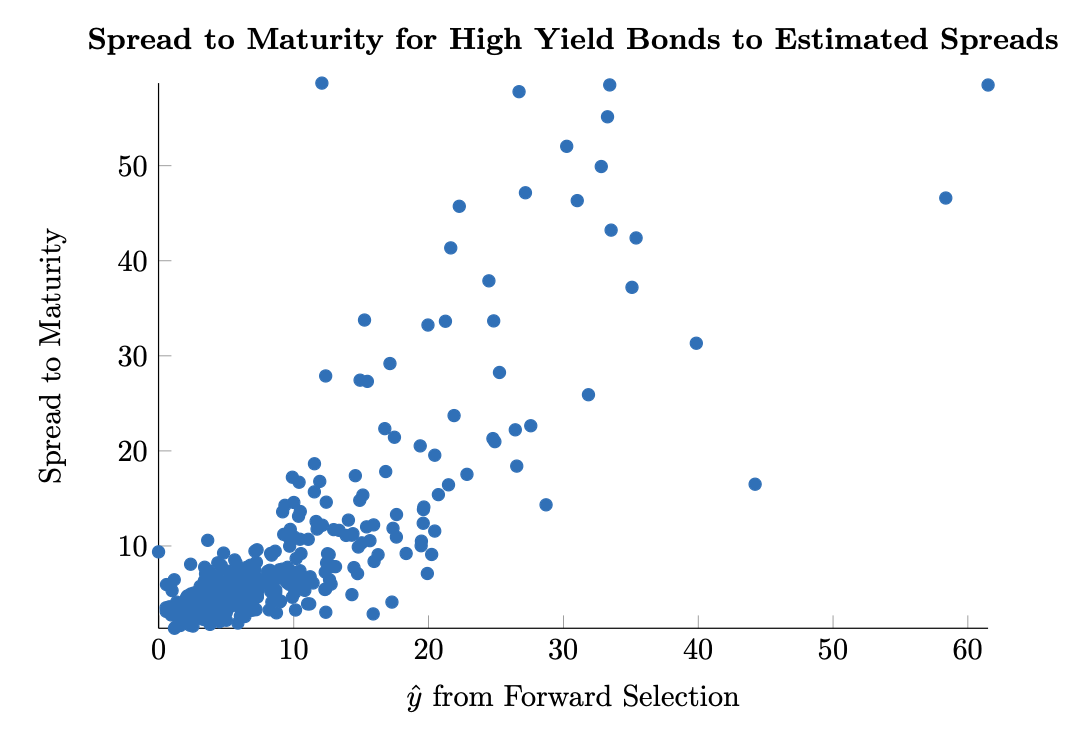

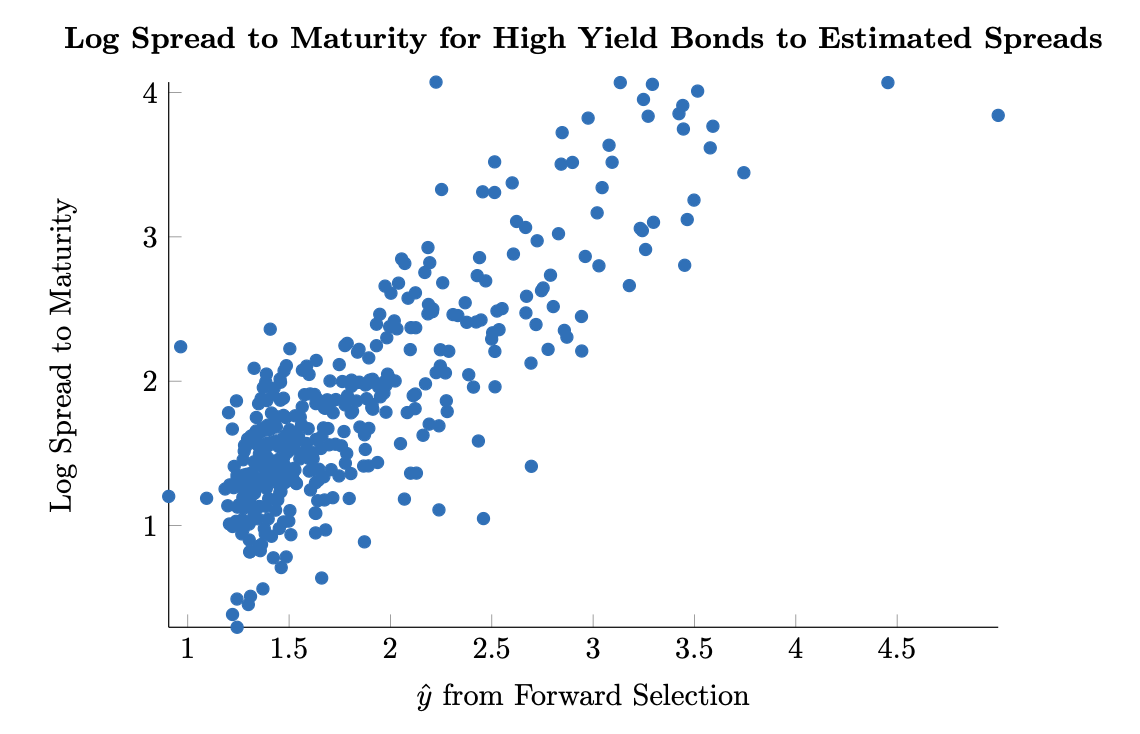

minus Cash and Short Term Investments.