Try an interactive version of this dialog: Sign up at solve.it.com, click Upload, and pass this URL.

Context

I'm Dan — trained in physical oceanography, experienced in state-space modeling, finite difference methods, and data science, but new to investment theory. I'm working through Chris Bemis's Portfolio Statistics and Optimization (August 2023) to build understanding.

Book structure: Chapters are split into separate dialogs (ch_1.ipynb, ch_2.ipynb, etc.). Also available:

./spoiler.md— short digest of each chapter's point and how they form the book's arc./bib.ipynb— bibliography

Arc: The book builds from statistical foundations (distributions, covariance) through regression and optimization theory to Mean-Variance Optimization and coherent risk measures. Chris emphasizes using CAPM and Fama-French as interpretive tools rather than prescriptive models, given non-stationarity and fat tails in real markets.

Ch 1 covered: EMH, systemic vs idiosyncratic risk, CAPM (β as market sensitivity, α as excess return), Fama-French (adding size and value factors), and MVO setup. Key theme: models are useful for interpretation and comparison, but their stationarity assumptions are violated in practice.

Please read formatting_instructions.md in this directory and apply those changes where relevant to every message in this dialog

view(path=formatting_instructions.md)

{

"id": "toolu_01K5wk6sd7RFX5USZMMZi5SY",

"call": {

"function": "view",

"arguments": {

"path": "formatting_instructions.md"

}

},

"result": "\"# Formatting Instructions for Chapter Dialogs\\n\\nWhen preparing dialogs for future chapters, apply these transformations:\\n\\n1. **Dollar signs in LaTeX**: Replace `\\\\$` (backslash-dollar) with `$` \u2014 the escape doesn't render correctly, possibly due to KaTeX interference.\\n\\n2. **Figure paths**: Replace local paths like `fig/1.1.png` with the GitHub raw URL:\\n ```\\n https://raw.githubusercontent.com/dangoldner/bemis/main/fig/1.1.png\\n ```\\n The local path isn't accessible in the published version of the dialog.\""

}

Got it. I'll scan the dialog for:

\$sequences that need to become$- Local figure paths like

fig/X.X.pngthat need the GitHub raw URL prefix

Let me check what messages exist in this dialog:

find_msgs(re_pattern=\\\\\\$|fig/, as_xml=True)

{

"id": "toolu_01W7CbHdJDTH6StEG26YwNid",

"call": {

"function": "find_msgs",

"arguments": {

"re_pattern": "\\\\\\\\\\\\$|fig/",

"as_xml": "True"

}

},

"result": "'<msgs><prompt id=\"_b4fe9faa\"><source>Please read formatting_instructions.md in this directory and apply those changes where relevant to every message in this dialog<out><p class=\"leading-tight\">\ud83e\udde0\ud83e\udde0\ud83e\udde0\ud83e\udde0\ud83e\udde0\ud83e\udde0\ud83e\udde0\ud83e\udde0</p>\\n<details class=\"tool-usage-details\">\\n<summary>view(path=formatting_instructions.md)</summary>\\n<div class=\"relative\"><div><div data-code=\"{\\n "id": "toolu_01K5wk6sd7RFX5USZMMZi5SY",\\n "call": {\\n "function": "view",\\n "arguments": {\\n "path": "formatting_instructions.md"\\n }\\n },\\n "res<TRUNCATED>"

}

Found 3 notes with content needing changes. I'll update them now:

msg_str_replace(id=_6e3585c7, old_str=",

"new_str": ""

}

},

"result": "{'success': 'Replaced text in message _6e3585c7'}"

}

msg_str_replace(id=_5f2d6027, old_str=",

"new_str": ""

}

},

"result": "{'success': 'Replaced text in message _5f2d6027'}"

}

msg_str_replace(id=_5f2d6027, old_str=",

"new_str": ""

}

},

"result": "{'success': 'Replaced text in message _5f2d6027'}"

}

msg_str_replace(id=_bc8176f9, old_str=",

"new_str": ""

}

},

"result": "{'success': 'Replaced text in message _bc8176f9'}"

}

Done! I updated 4 figure paths across 3 notes to use the GitHub raw URLs. I didn't find any \$ (backslash-dollar) sequences needing the $ replacement — the dollar signs in this dialog are all standard LaTeX math delimiters that render correctly.

Chapter 2

Distributions and Summary Statistics

The normal distribution (and its close cousin the log-normal distribution) dominate much of the financial landscape. One feature we believe should be front and center in understanding these objects is that in any discussion where investors make decisions solely on expected return and variance, there is a belief that other statistical shape measures aren't of concern. In this chapter, we work with density functions to better understand a few distributions that are useful for our future work, common in industry and the literature, and which may be useful in avoiding a myopia of expectation and variance as sole features for inspection.

We begin by briefly discussing random variables, and develop the mathematics of cumulative and probability density functions with examples [6]. We look at various statistics including the mean, variance, and percentiles of a cumulative density function, esp. the median, and then continue to example distributions. In so doing, we will compare estimated probabilities based on various density functions to empirical frequencies observed in equity returns, and note many so-called stylized features of equity returns as discussed by Rama Cont [8]; e.g., fat-tails, asymmetry of extreme gains and losses

Afterwards, we will show the impact of modifying a random variable on its density function as well, and prove the weak law of large numbers. Finally, we will work with multivariate random variables and generalize previous results as needed. The concepts of independence and correlation are also developed.

2.1 Univariate Distributions

We focus on real valued probability spaces, \(\mathbb{P}\) , and begin with the univariate case

\[(\mathbb{R}, \mathcal{B}, \mathbb{P})\]

with \(\mathcal{B}\) the Borel \(\sigma\) algebra on \(\mathbb{R}\) , the real line. While the formalism of the statement is necessary, the usage will be limited, with the focus instead on methods that are much closer to calculus and real analysis.

I have only ever used methods closer to calculus and real analysis. Do I need to know what "the Borel \(\sigma\) algebra" means?

No — the author is essentially telling you exactly that. The sentence "the formalism of the statement is necessary, the usage will be limited" is a polite way of saying: "I'm stating this for completeness and rigor, but we won't actually use measure theory machinery going forward."

For practical purposes, the Borel σ-algebra is just "all the subsets of ℝ that you'd reasonably want to assign probabilities to" — intervals, unions of intervals, their complements, etc. It rules out pathological sets that can't be measured, but you'll never encounter those in applied work.

Given your background in state-space modeling and finite differences, you're already comfortable with the calculus-based approach to probability (PDFs, CDFs, integration). That's what this chapter will use.

Next, let \(X\) be a random variable on \((\mathbb{R}, \mathcal{B}, \mathbb{P})\) , then the cumulative distribution function of \(X\) is given by

\[F(x) = \mathbb{P}(X \in (-\infty, x]). \tag{2.1}\]

We will assume that there are no point masses for \(F\) . The cumulative distribution function captures all of the distributional information about the random variable \(X\) ; e.g.,

\[\begin{aligned}\mathbb{P}(X \in (a, b]) &= \mathbb{P}(X \le b) - \mathbb{P}(X \le a) = F(b) - F(a), \\ \mathbb{P}(X > a) &= 1 - \mathbb{P}(X \le a) = 1 - F(a),\end{aligned}\]

and if \(X\) is symmetric about 0, for instance, and \(a > 0\) , \(\mathbb{P}(|x| > a) = 2 \cdot F(-a)\) . In this case we also have

\[\begin{aligned}F(0) &= \frac{1}{2}, \\ F(x) &= 1 - F(-x).\end{aligned}\]

Notice, too, that we may consider the impact of shift and scale on the cumulative distribution function; e.g., let \(Y = aX + b\) for \(a > 0\) and \(b \in \mathbb{R}\) , and let \(F_Y\) and \(F_X\) be the cumulative distribution functions of \(Y\) and \(X\) , respectively, then

\[\begin{aligned}F_Y(y) &= \mathbb{P}(Y < y) \\ &= \mathbb{P}(aX + b < y) \\ &= \mathbb{P}\left(X < \frac{y-b}{a}\right) \\ &= F_X\left(\frac{y-b}{a}\right).\end{aligned}\]

The case with \(a < 0\) is treated similarly.

We say that \(X\) has a probability density function, \(f: \mathbb{R} \to \mathbb{R}_{\ge 0}\) , if

\[F(x) = \int_{-\infty}^{x} f(s) ds. \tag{2.2}\]

At times we will simply refer to \(f\) as the density function or pdf of \(X\) . We note immediately that for \(f\) to be a density function, it is necessary that \(f\) is nonnegative, integrable, and satisfies

\[\int_{-\infty}^{\infty} f(s) ds = 1.\]

For \(X\) with a probability density function, the expected value of \(X\) , denoted \(\mathbb{E}(X)\) , is given by

\[\mathbb{E}(X) = \int_{-\infty}^{\infty} sf(s)ds. \quad (2.3)\]

Notice that we have not precluded infinite expectations so that \(\mathbb{E}(X) \in \mathbb{R} \cup \infty\) . We will often call the expected value of \(X\) the mean and denote it by \(\mu\) .

The variance of \(X\) , \(\text{Var}(X)\) , is found by integrating the squared distance from the mean according to the density \(f\) . That is,

\[\text{Var}(X) = \int_{-\infty}^{\infty} (s - \mu)^2 f(s)ds \quad (2.4)\]

if \(\mu\) is finite, otherwise \(\text{Var}(X)\) is infinite as well. Here again, then, we have not disallowed infinite variances. For reasons that will become clear shortly, we routinely denote the \(\text{Var}(X)\) as \(\sigma^2\) .

We may also define variance without resort to the density function of \(X\) , via

\[\text{Var}(X) = \mathbb{E}((X - \mu)^2), \quad (2.5)\]

which gives, after some manipulation, that

\[\text{Var}(X) = \mathbb{E}(X^2) - \mu^2.\]

Variance indicates some level of dispersion of the random variable \(X\) . The units of the mean and variance of \(X\) are not the same, however. Oftentimes we will want to construct statistics relating the mean to a dispersion metric. In this vein, the standard deviation, or volatility is defined as the square root of variance, \(\sigma\) .

The mean and standard deviation are often described as position and dispersion parameters, respectively. They are also known as the first and second moments of the distribution.

Considering once more the impact of shift and dilation to the expected value and variance of a random variable, \(X\) , we have that for any scalars \(a\) and \(b\) ,

\[\mathbb{E}(aX + b) = a\mathbb{E}(X) + b \quad (2.6)\]

\[\text{Var}(aX + b) = a^2 \text{Var}(X). \quad (2.7)\]

Each of these results may be quickly derived using the probability density function approach (the exercise is left to the reader). We shall see later that the expectation operator is linear in combinations of random variables, and the generalization of variance, covariance, is a bilinear form. Here we simply note that the mean is scale and shift sensitive, while variance is independent of changes in position.

We noted that both the mean and variance of a random variable, \(X\) , can be infinite. For a practical application, this is a disappointing feature. Especially, as we will see later, if we intend to understand risk via such metrics. An alternate approach is to look at the percentiles of the distribution of \(X\) . The (100 ·

\(p\) )th percentile of a random variable with cumulative density function \(F\) is the smallest number \(\pi_p\in\mathbb{R}\) satisfying \(F(\pi_p)=p\) . That is, \(\pi_p\) satisfies

\[\pi_p=\min\{\pi|F(\pi)=p\}.\] (2.8)

where we take the minimum of the set of all values satisfying our criterion. If \(F\) is invertible in a neighborhood about \(\pi_p\) , then we may solve for the \(p\) th percentile by solving \(\pi_p=F^{-1}(p)\) .

Percentiles of various distributions have gained prominence over the years as asymmetry of returns and so-called fat tails have become less surprising and more characteristic. Founding theory in the field leaned toward symmetric distributions with well behaved extrema. This yielded the volatility-as-risk paradigm which we will encounter later. Percentile based risk measures allow a much more flexible approach to risk modeling, but have their own trappings as well. At this point we lay the groundwork for future study by pointing out a few specific examples.

The median of a random variable \(X\) is defined as its 50th percentile. This value may be defined (that is, not infinite) even when the mean may not be. In many cases that follow, for example any symmetrical distribution, the median and mean coincide, but it is by no means necessary.

When considering a random variable representing the loss of a portfolio, say, we might be concerned with documenting rare events. One method of doing this would be to report (assuming loss is a positive number) the 95th, 99th, or other extreme percentiles. This metric is the so-called Value at Risk (VaR) of the portfolio, and has found a place in institutional investing and in regulatory requirements. The methodology of constructing rare events and loss distributions is sometimes prescribed, but may be left to the practitioner’s discretion as well. There are features of VaR that are not appealing in some lights, and an extension named Conditional Value at Risk (CVaR) ameliorates these issues in large part. Without defining this quantity exactly, the idea behind CVaR as a risk metric is to look at the average loss past the VaR threshold – that is, the center of mass of the area under the curve to the right of VaR. These ideas will be developed fully in later chapters, but they invariably have a place in early discussions about percentiles due to their conceptual simplicity, and practical applications.

Alternative extensions to better capture the empirical distribution of returns have looked at higher moments. In particular, for a random variable \(X\) with mean \(\mu\) and variance \(\sigma^2\) , skew is defined as

\[\mathbb{E}\left(\frac{(X-\mu)^3}{\sigma^3}\right),\] (2.9)

and kurtosis is defined by

\[\mathbb{E}\left(\frac{(X-\mu)^4}{\sigma^4}\right).\] (2.10)

We will denote skew and kurtosis by \(\gamma\) and \(\kappa\) , respectively.

For symmetric \(X\) , skew is zero (consider the oddness of the integrand if a density is given). Generalizing this observation, skew measures a degree of asymmetry in the distribution of \(X\) ; viz., if the left tail of \(X\) has more (less) weight than the right, \(X\) has negative (positive) skew.

Kurtosis is a measure to compare tail densities across random variables. It is theoretically deficient in its usual application which often takes kurtosis as a measure of ‘tailedness.’ Even so, it provides a metric which accounts for extreme observations. This will be especially important when comparing empirical distributions to the densities that have wide application in theory and practice.

We proceed to a few of these example densities.

2.1.1 Normal Distribution

The normal density, or the density function for a normal random variable, is a two parameter function \(\phi_{\mu,\sigma^2}(\cdot)\) given by

\[\phi_{\mu,\sigma^2}(x) = \frac{1}{\sqrt{2\pi\sigma^2}} \exp\left(\frac{-(x-\mu)^2}{2\sigma^2}\right). \quad (2.11)\]

If a random variable, \(X\) , has the above density, we say that it is normal, and denote this by \(X \sim N(\mu, \sigma^2)\) . The cumulative distribution of a normal random variable will be denoted \(\Phi_{\mu,\sigma^2}\) .

The usage of \(\mu\) and \(\sigma\) above is not a coincidence.

Example 2.1.1. The expected value of \(X\) with density \(\phi_{\mu,\sigma^2}\) is \(\mu\) .

We apply the integral in (2.3) directly to see

\[\mathbb{E}(X) = \frac{1}{\sqrt{2\pi\sigma^2}} \int_{-\infty}^{\infty} x \exp\left(\frac{-(x-\mu)^2}{2\sigma^2}\right) dx,\]

a change of variables using \(u = \frac{x-\mu}{\sigma}\) gives

\[\begin{aligned} \mathbb{E}(X) &= \frac{1}{\sqrt{2\pi\sigma^2}} \int_{-\infty}^{\infty} (\sigma^2 u \exp(-u^2/2) + \sigma\mu \exp(-u^2/2)) du \\ &= \frac{1}{\sqrt{2\pi\sigma^2}} \left( \int_{-\infty}^{\infty} \sigma^2 u \exp(-u^2/2) du + \int_{-\infty}^{\infty} \sigma\mu \exp(-u^2/2) du \right). \end{aligned}\]

Now, the first integrand is odd, and hence integrates to zero over \(\mathbb{R}\) . Therefore

\[\begin{aligned} \mathbb{E}(X) &= \frac{1}{\sqrt{2\pi\sigma^2}} \int_{-\infty}^{\infty} \sigma\mu \exp(-u^2/2) du \\ &= \mu \cdot \frac{1}{\sqrt{2\pi}} \int_{-\infty}^{\infty} \exp(-u^2/2) du. \end{aligned}\]

We know from calculus that a change of variables to polar coordinates is all it takes to show that

\[\int_{-\infty}^{\infty} \exp(-u^2/2) du = \sqrt{2\pi},\]

so that finally we arrive at

\[\mathbb{E}(X) = \mu.\]

The variance is found to be \(\sigma^2\) using integration by parts, and is left as an exercise.

The normal distribution is clearly symmetric about \(\mu\) , and hence the median is also \(\mu\) . When \((\mu, \sigma^2) = (0, 1)\) , we say that \(X\) is the standard normal; we will denote the probability density and cumulative distribution functions of the standard normal by \(\phi\) and \(\Phi\) , respectively, dropping the subscripts of the general case.

Example 2.1.2. Consider the random variable \(Y\) defined by

\[Y = \sigma X + \mu,\]

with \(X\) a standard normal random variable. Then \(Y\) is a normal random variable with mean \(\mu\) , and standard deviation \(\sigma\) .

We know immediately from (2.6) and (2.7) that \(\mathbb{E}(Y) = \mu\) and \(\text{Var}(Y) = \sigma^2\) . We should also verify that the cumulative density function of \(Y\) is in fact the normal cumulative density. We have

\[\begin{aligned}F_Y(y) &= \mathbb{P}(Y < y) \\&= \mathbb{P}(\sigma X + \mu < y) \\&= \mathbb{P}\left(X < \frac{y - \mu}{\sigma}\right) \\&= F_X\left(\frac{y - \mu}{\sigma}\right).\end{aligned}\]

Or that \(F_Y(y) = \Phi\left(\frac{y - \mu}{\sigma}\right)\) . This is actually sufficient to show that \(Y\) is normally distributed, but we utilize the density function, \(\phi\) , to make this more evident. We have that

\[\begin{aligned}F_Y(y) &= \Phi\left(\frac{y - \mu}{\sigma}\right) \\&= \frac{1}{\sqrt{2\pi}} \int_{-\infty}^{\frac{y - \mu}{\sigma}} \exp\left(\frac{-s^2}{2}\right) ds \\&= \left(\text{changing variables } x = \sigma s + \mu, \frac{1}{\sigma} dx = ds\right) \\&= \frac{1}{\sqrt{2\pi\sigma^2}} \int_{-\infty}^{y} \exp\left(\frac{-(x - \mu)^2}{2\sigma^2}\right) dx,\end{aligned}\]

where the density function is now clearly seen to be \(\phi_{\mu, \sigma^2}\) , and so \(Y \sim N(\mu, \sigma^2)\) .

The above technique is not restricted to normal random variables. For a random variables with finite mean and variance the transformation

\[X \mapsto \frac{X - \mu}{\sigma},\]

| Range | Probability |

|---|---|

| \(x \in [\mu - \sigma, \mu + \sigma]\) | 0.6827 |

| \(x \in [\mu - 2\sigma, \mu + 2\sigma]\) | 0.9545 |

| \(x \in [\mu - 3\sigma, \mu + 3\sigma]\) | 0.9973 |

| \(x \in [\mu - 4\sigma, \mu + 4\sigma]\) | 0.9999 |

Table 2.1: Probability table for various standard deviation ranges for a normally distributed random variable

will always produce a zero mean and unit standard deviation random variable. Such normalization is often employed to create scaled variables in modeling.

The skew and kurtosis of a normal random variable are 0, and 3 respectively. The somewhat odd appearance of the number 3 in a math text leads some authors to define kurtosis as \(\kappa - 3\) . With this modification, a normal random variable has zero kurtosis. We do not adopt this convention.

The normal distribution is (for many reasons) the de facto reference distribution. And if not the normal distribution, its close cousin the log-normal distribution. This is in spite of its lack of applicability in some scenarios. In particular, if we recall David Viniar, the CFO of Goldman Sachs during the Quant Crisis from the introduction [15], we may ask ourselves what the likelihood of moves observed during that particular crisis would be under the assumption of normality.

In the case of normally distributed variables, we need only look at the number of standard deviations from the mean to determine likelihood. This is evidenced in the exponential in the probability density function in (2.3) where, in fact, the motivation for normalization is straightforward. Table 2.1.1 shows various probabilities obtained by calculating \(\Phi(k\sigma) - \Phi(-k\sigma)\) for various levels of \(k\) .

We may interpret some of this data in the following way: for a normally distributed random variable, seeing a four standard deviation observation is a 1 in 10,000 event. Looking at the table, it is clear that these probabilities are not linear in \(\sigma\) . A six sigma event, for instance, is a one in five hundred million event. Put in perspective, a six sigma event would occur one day every 1,388,455 million years. Five times longer than the appearance of anatomically modern humans in Africa.

Now, what about a 25 sigma event? The probability of such an event is \(6.10 \times 10^{-138}\) . In terms of years, this would occur one day in \(4.49 \times 10^{132}\) years. Here’s the thing: the universe is only 13.82 billion years old, so that you shouldn’t see this type of event but once across \(3.2 \times 10^{125}\) universes [16, 17].

Put another way, the surface area of the earth is 510 trillion \(\text{m}^2\) . Approximating a human hand at \(0.01 \text{ m}^2\) , it would be \(3.2 \times 10^{118}\) times more likely to catch a random ball dropped from space in the palm of your hand than to see a twenty five sigma event.

Returning to the cosmological scale, an upper bound for the number of particles in the universe is \(1.0e85\) . Thus you’d be more likely to pick a single

particle from the universe than to witness a 25 sigma event. Much, much, much more likely.

We mentioned in the first chapter that during the Quant Crisis, David Viniar, the CFO of Goldman Sachs, was quoted as saying they were observing 25-standard deviation moves, several days in a row. Connecting this statement to the probabilities just calculated is not done in a pejorative sense. More, it is likely that they had very carefully constructed a very stable and attractive product that was blown out by overcrowding and liquidations. Unfortunately we: 1) may find ourselves inherently referring to the normal distribution and its shortcuts of standard deviation explaining everything even when this is inappropriate; 2) tend to think linearly so that a 25 standard deviation feels like six times a four standard deviation move via some heuristics; and 3) do not fully understand the dynamics of equity returns, which are subject to crises and other exogenous effects.

The normal distribution, even with the above in mind, has extremely nice properties, not the least of which is that it is overwhelmingly the most likely distribution to assume if you are only given the mean and standard deviation of a random variable. The analogy would be that the normal distribution acts as our first linear approximation to the underlying distributions that we may never know. This does not preclude its use as a tool, but should give us a moment to reflect, especially if leverage is determined via risk estimates based on its use.

2.1.2 Log-normal Distribution

The density function for a log-normal random variable, is again a two parameter function \(ln_{\mu,\sigma^2}(\cdot)\) given by

\[ln_{\mu,\sigma^2}(x) = \frac{1}{\sqrt{2\pi\sigma^2}} \frac{1}{x} \exp\left(-\frac{(\log(x) - \mu)^2}{2\sigma^2}\right), \quad (2.12)\]

and is clearly only defined on \(\mathbb{R}_+\) . A log-normal random variable with parameters \(\mu\) and \(\sigma^2\) is such that its logarithm is distributed as \(N(\mu, \sigma^2)\) , and we will denote it by \(LN(\mu, \sigma^2)\) . That is, \(Y\) is log-normal if \(\log(Y)\) is normal.

Example 2.1.3. In a manner similar to before we may obtain the cumulative distribution function for \(Y \sim LN(\mu, \sigma^2)\) ; viz.,

\[\begin{aligned} F_Y(y) &= \mathbb{P}(Y < y) \\ &= \mathbb{P}(\exp(X) < y) \\ &= \mathbb{P}(X < \log(y)) \\ &= F_X(\ln(y)), \end{aligned}\]

so that \(F_Y(y) = \Phi_{\mu,\sigma^2}(\ln(y)) = \Phi\left(\frac{\log(y)-\mu}{\sigma}\right)\) .

From here, we may also derive the density function for \(Y \sim LN(\mu, \sigma^2)\) as

\[\begin{aligned}F_Y(y) &= \Phi\left(\frac{\log(y) - \mu}{\sigma}\right) \\&= \frac{1}{\sqrt{2\pi}} \int_{-\infty}^{\frac{\log(y) - \mu}{\sigma}} \exp\left(-\frac{s^2}{2}\right) ds \\&= \left(\text{changing variables } s = \frac{\log(x) - \mu}{\sigma}, \frac{1}{\sigma \cdot x} dx = ds\right) \\&= \frac{1}{\sqrt{2\pi\sigma^2}} \int_0^y \frac{1}{x} \exp\left(-\frac{(\log(x) - \mu)^2}{2\sigma^2}\right) dx,\end{aligned}\]

which is exactly the density given in (2.12), as desired.

We shall see that the above technique generalizes, allowing us to derive density functions of random variables constructed from functions of random variables. We will call this a change of variables theorem – perhaps not surprisingly given the repeated application above.

The mean and variance of \(Y \sim LN(\mu, \sigma^2)\) are given by

\[\begin{aligned}\mathbb{E}(Y) &= \exp\left(\mu + \frac{1}{2}\sigma^2\right) \\Var(Y) &= \exp\left(2\mu + \sigma^2\right) \left(\exp\left(\sigma^2\right) - 1\right),\end{aligned}\]

and their derivations are left as exercises.

The log-normal distribution is terribly important, due in large part to the Black-Scholes option pricing formula which relies on the assumption of log-normality of future stock prices over any interval. For a stock price, a small increment in time \(\delta\) , and initial and terminal times \(t\) and \(T = t + \delta\) , respectively, \(\ln(S_T)\) is distributed as

\[\ln(S_{t+\delta}) \sim N\left(\ln(S_t) + \left(\mu - \frac{\sigma^2}{2}\right)\delta, \sigma^2\delta\right).\]

A related result is that so-called log returns,

\[r_t = \ln\left(\frac{S_{t+\delta}}{S_t}\right)\]

are normally distributed as

\[r_t \sim N\left(\left(\mu - \frac{\sigma^2}{2}\right)\delta, \sigma^2\delta\right).\]

The above relationship is not empirical as we shall soon see. It is, however, interpretive, as market participants calculate volatilities, \(\sigma\) , implied by market prices for various derivative contracts. Interestingly, the drift term, \(\mu\) , is inconsequential in derivatives pricing as the drift may be hedged away in a frictionless world, but this is beyond the scope of the current work.

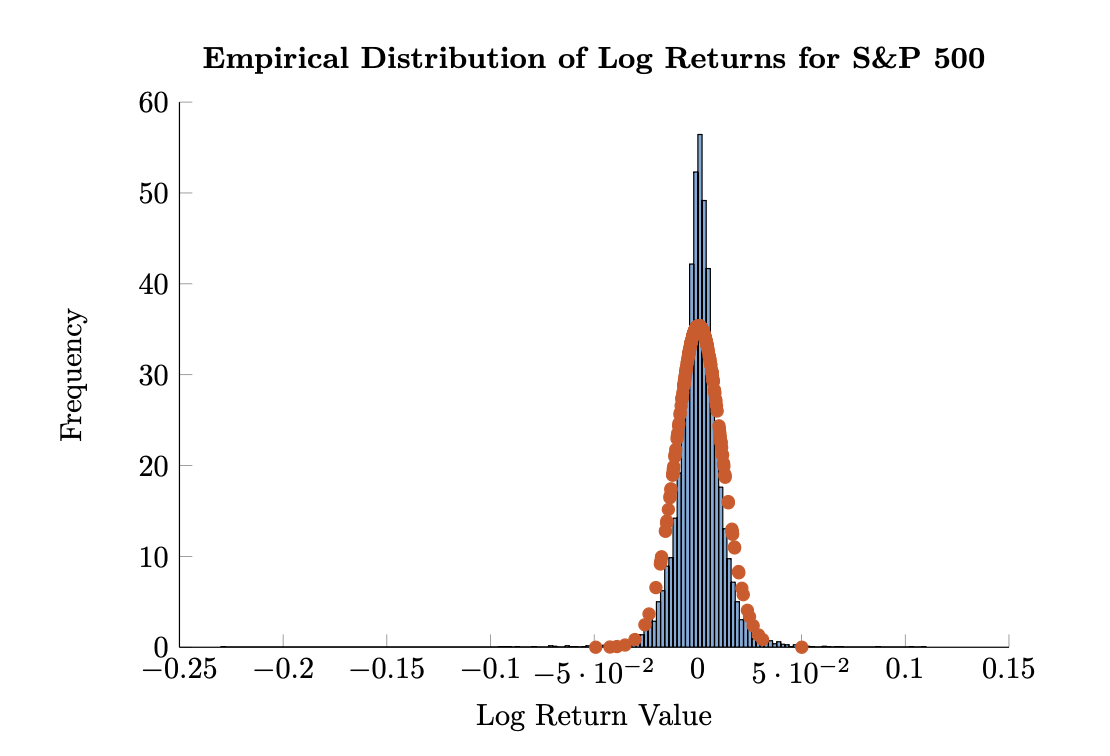

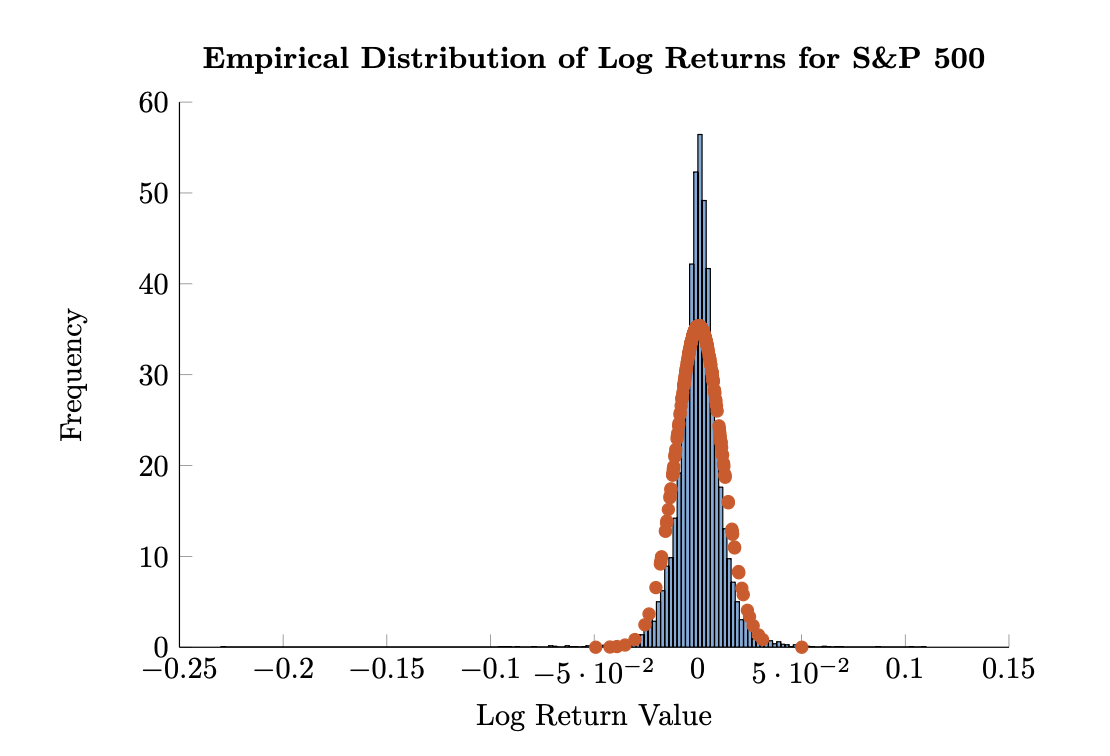

Figure 2.1: Empirical distribution of log returns of the S&P 500 from 1980 through 2015 with a fitted normal distribution in blue and red, respectively.

Figure 2.1: Empirical distribution of log returns of the S&P 500 from 1980 through 2015 with a fitted normal distribution in blue and red, respectively.

What we can look at is the empirical distribution of daily log returns for the S&P 500 Index over a long horizon, in this case from 1980 through 2015. Figure 2.1 shows a normalized histogram along with a fitted normal distribution. For the present, we will not be concerned about how to estimate \(\mu\) and \(\sigma\) from data, even as there is likely ample familiarity with calculating both. Instead, we take as granted that a ‘good’ estimate of the mean and standard deviation are possible to obtain. We will have cause to revisit this notion when we notice that these estimates—even while they have good theoretical backing—are not stable through time in markets.

Figure 2.1 clearly demonstrates ‘peakedness’, with a considerable amount of the mass of the empirical distribution exceeding the fit (blue versus red) for values near zero. In addition, we also see extreme behavior in the market returns, with the largest moves falling far outside the tails implied by the fit. This occurs on both sides of the mean; that is, for positive and negative returns, but the former are smaller in magnitude than the latter. We see, then, an example of the asymmetry of returns, with downside risk being greater than upside gains. The market attempts to price this phenomenon in the options market.

In addition to optics obtained from the figure, we may also calculate em-

| Range | Empirical Frequency | Ratio of Empirical Extreme to Normal |

|---|---|---|

| \(x \in [\mu - \sigma, \mu + \sigma]\) | 0.7889 | 0.665 |

| \(x \in [\mu - 2\sigma, \mu + 2\sigma]\) | 0.9534 | 1.02 |

| \(x \in [\mu - 3\sigma, \mu + 3\sigma]\) | 0.9859 | 5.22 |

| \(x \in [\mu - 4\sigma, \mu + 4\sigma]\) | 0.9942 | 92.14 |

| \(x \in [\mu - 5\sigma, \mu + 5\sigma]\) | 0.9974 | 4,609 |

| \(x \in [\mu - 6\sigma, \mu + 6\sigma]\) | 0.9983 | 837,128 |

Table 2.2: Empirical frequency table for daily log returns of the S&P 500 Index from 1980 through 2015. In addition to frequencies, we also calculate the ratio of the empirical frequency of moves outside \(\mu \pm k\sigma\) to the fit probability of such moves.

Empirical probabilities and compare those to the theoretical. To wit, we look at the percentage of observations within \(\mu \pm k\sigma\) for various values of \(k\) . We also calculate how much more likely returns outside of \(k\) standard deviations from the mean are relative to the fit normal density. The results are in Table 2.1.2.

The table clearly exhibits our previous observations. Namely, there are many more observations within one standard deviation of the mean than implied by a normal fit. Two standard deviations seems to be about right, but as we go out to four, five, and six standard deviations, the empirical distribution completely loses track with the fit. For example, six- \(\sigma\) events are 837,128 were more likely to occur historically than would occur under a best fit normal distribution. This echoes our previous analysis regarding 25- \(\sigma\) events. But in the present case we are establishing what are termed stylized features of returns. In addition to the likelihood analysis, we may also look at the empirical skew and kurtosis of the S&P log returns. We find that the estimated skew is -1.151, indicating that there is more mass to the left of the mean than in a centered distribution; this is only indicative, however, as 51.07% of the log returns are positive, the result being influenced by the extreme moves in the left tail. The sample kurtosis is 29.43, a far-cry from the value of 3 obtained from the normal distribution. The implication of the large sample kurtosis is that the empirical distribution has many outliers, or more extreme outliers (relative to a normal density). This is indeed the case.

2.1.3 Student \(t\) Distribution

The Student \(t\) distribution found application (but not derivation) from William Gosset, working for Guinness Brewery in a paper in 1908 [13]. His paper describes the distribution as the "frequency distribution of standard deviations of samples drawn from a normal population." The distribution was later popularized by Robert Fisher. We will find widespread application of the Student \(t\) distribution when we encounter it again (somewhat more naturally) in our regression work when describing the distribution of estimation parameters. For now, we begin with the somewhat obtuse probability density function.

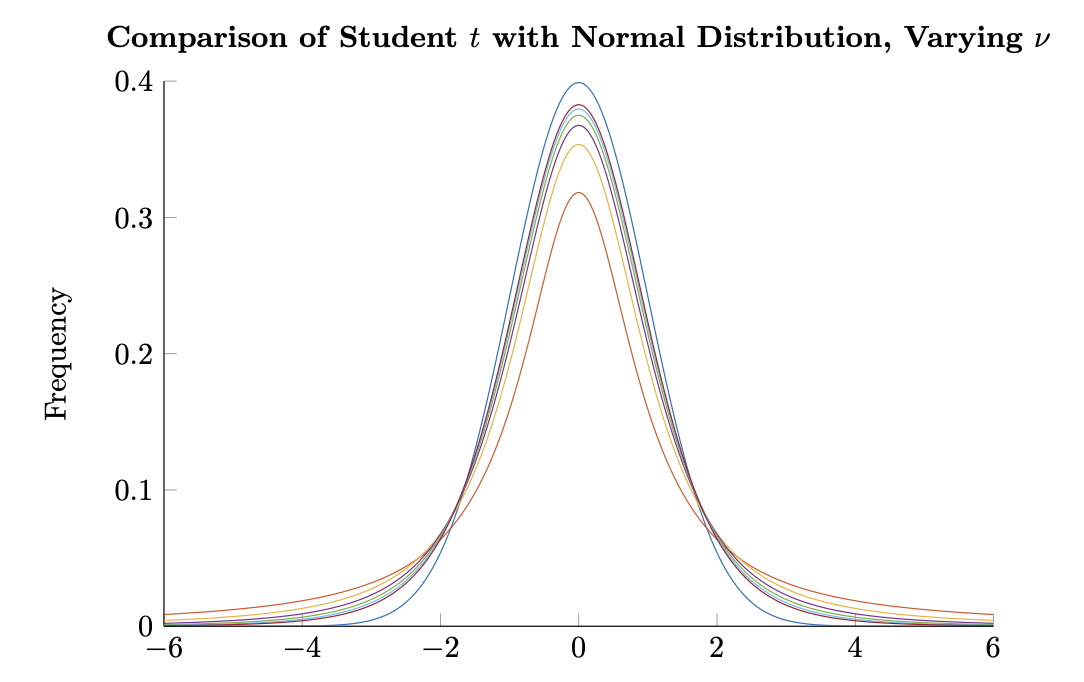

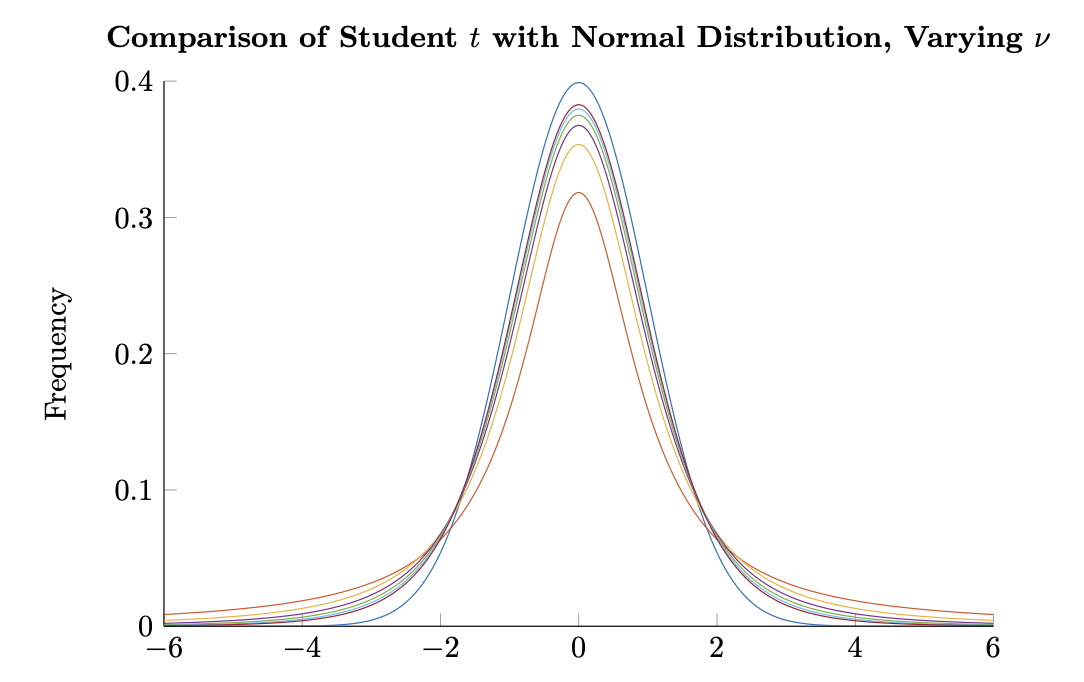

Figure 2.2: Comparison of the Student \(t\) density for \(\nu = 1, 2, \dots, 6\) with the standard normal density. The density with the lowest peak at \(x = 0\) belongs to \(\nu = 1\), with \(\nu\) increasing with the height of the peak; the highest belonging to the normal distribution.

Figure 2.2: Comparison of the Student \(t\) density for \(\nu = 1, 2, \dots, 6\) with the standard normal density. The density with the lowest peak at \(x = 0\) belongs to \(\nu = 1\), with \(\nu\) increasing with the height of the peak; the highest belonging to the normal distribution.

The Student \(t\) density is a three parameter function \(\text{st}_{\mu,\sigma^2;\nu}(\cdot)\) with \(\nu \in \mathbb{N}_+\) given by

\[\text{st}_{\mu,\sigma^2;\nu}(x) = \frac{\Gamma\left(\frac{\nu+1}{2}\right)}{\sqrt{\nu\pi}\sigma^2\Gamma\left(\frac{\nu}{2}\right)} \left(1 + \frac{(x-\mu)^2}{\nu\sigma^2}\right)^{-\frac{\nu+1}{2}}, \quad (2.13)\]

where \(\nu\) is referred to as the degrees of freedom of the distribution, and \(\Gamma(\cdot)\) is the gamma function (whose values at \(n \in \mathbb{N}_+\) give \(\Gamma(n) = (n-1)!\) ).

If a random variable, \(T\) , has the above density, we say that it is Student \(t\) with \(\nu\) degrees of freedom and denote this by \(T \sim \text{St}(\mu, \sigma^2; \nu)\) . We omit the cumulative distribution function here as it is nearly as intractable written down as not, involving a function called the regularized beta function. Modern programming languages are capable of determining the values of the CDF of the Student \(t\) distribution.

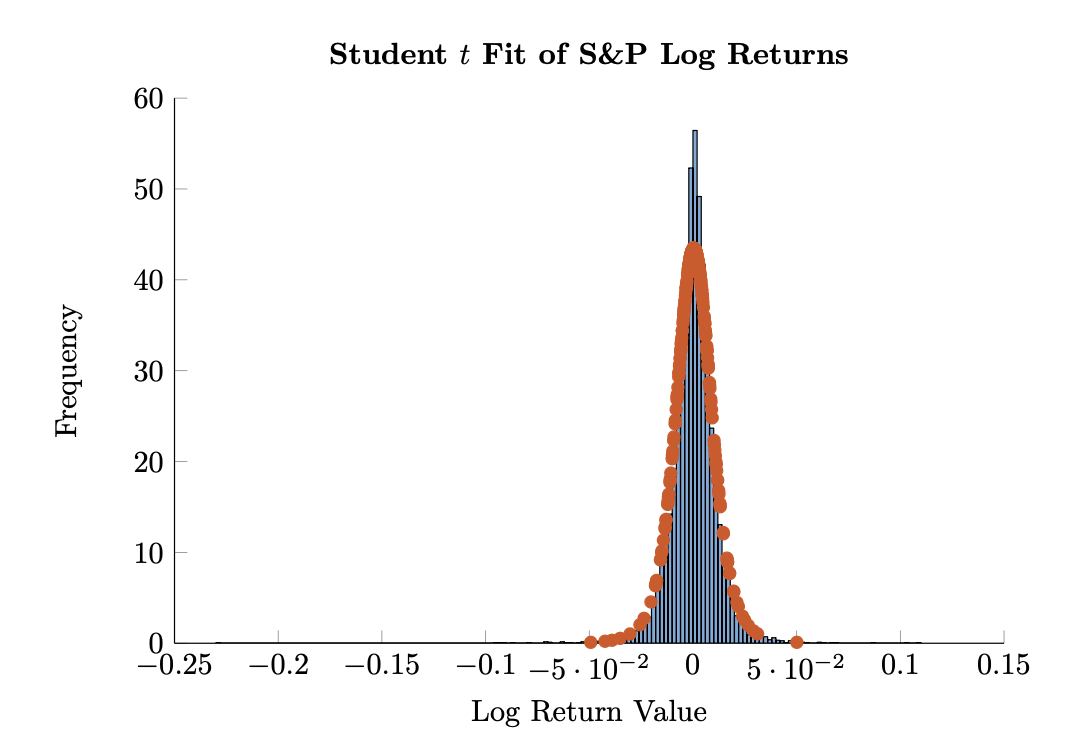

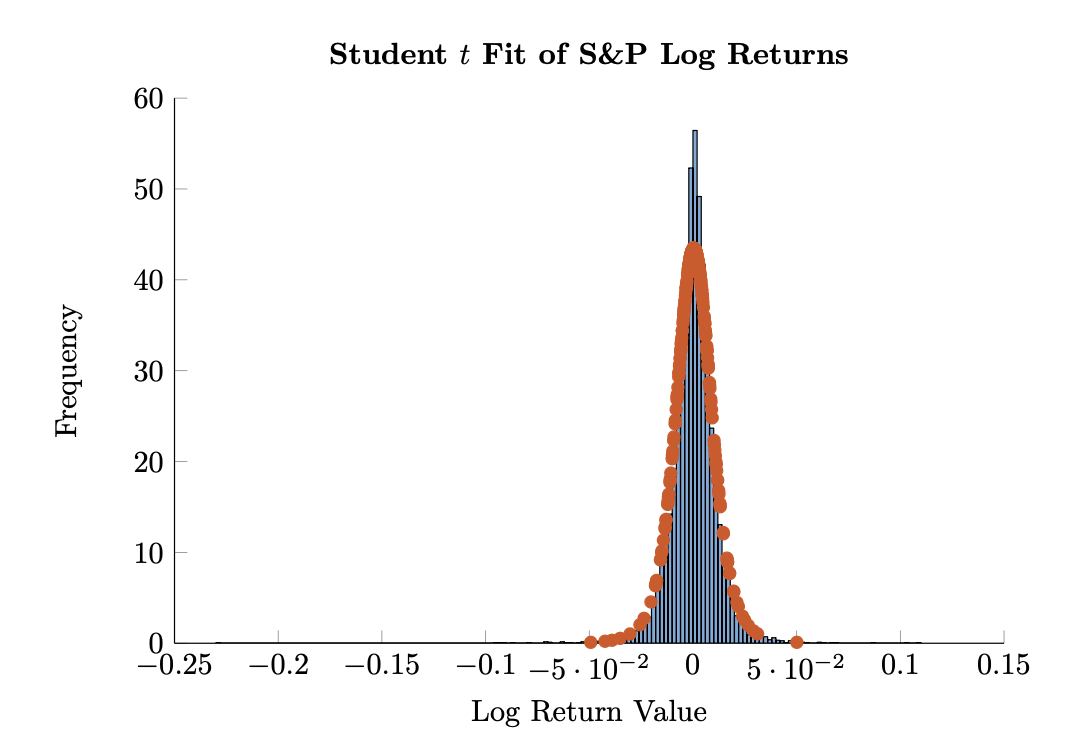

Figure 2.3: Empirical distribution of log returns of the S&P 500 from 1980 through 2015 with a fitted Student \(t\) distribution in blue and red, respectively. The Student \(t\) distribution used here has \(\nu = 5\) degrees of freedom.

Figure 2.3: Empirical distribution of log returns of the S&P 500 from 1980 through 2015 with a fitted Student \(t\) distribution in blue and red, respectively. The Student \(t\) distribution used here has \(\nu = 5\) degrees of freedom.

The \(t\) distribution with one degree of freedom is known as the Cauchy distribution, which has infinite variance and an undefined mean. It is simple to show, however, that for \(\nu > 1\) , \(T \sim \text{st}(\mu, \sigma^2; \nu)\) has expectation \(\mu\) . For \(\nu > 2, 3\), and 4, respectively, we have

\[\begin{aligned}Var(T) &= \frac{\nu}{\nu - 2}\sigma^2 \\ \gamma(T) &= 0 \\ \kappa(T) &= 3 + \frac{6}{\nu - 4},\end{aligned}\]

where in the final equation we see that the Student \(t\) distribution has kurtosis greater than three for \(\nu > 4\) , a desirable property given previous discussions. The limit as \(\nu \to \infty\) of \(\text{st}_{\mu, \sigma^2; \nu}(\cdot)\) is the normal density, \(\phi_{\mu, \sigma^2}(\cdot)\).

We compare \(T \sim \text{St}(0, 1; \nu)\) and \(X \sim N(0, 1)\) for various choices of \(\nu\) in Figure 2.1.3. As can be seen, the Student \(t\) distribution has fatter tails than the normal density. However, this comes at the expense of losing some peakedness at the mean, another stylized feature we noticed in equity returns. This is mitigated, however, by noticing that the variance of \(T \sim \text{St}(0, 1; \nu)\) is exactly \(\frac{\nu}{\nu - 2}\) for \(\nu > 2\) . That is, the peak would be higher if the variance of \(T\) had been set to a constant 1.

Meucci [23] has suggested using a parameter choice of \(\nu = 5\) in fitting a Student \(t\) distribution to log returns. We do this and show the result as before in Figure 2.1.3. Optically, we see a much better fit to the data than in the normal fit to log returns.

In addition, we may go through the same exercise as before, comparing theoretical and empirical frequencies for fit values of \(\mu\) and \(\sigma\) . This is left as an exercise for the reader. In doing this, one sees that both the center and the tails are much better explained by the Student \(t\) distribution than by the normal distribution. Extreme values are less than twice as likely to occur in our historical data set than the fit Student \(t\) density would imply as well: a vast improvement over the previous discrepancy of over 800,000.

Indeed — from 837,128× to less than 2×. That's the power of choosing a distribution that actually matches the data's fat tails.

The Student t with ν=5 is a pragmatic middle ground: finite mean and variance (needs ν>2), finite kurtosis (needs ν>4), but still heavy-tailed enough to capture real market behavior.

2.1.4 Functions of Random Variables

In our previous examples, we encountered a few examples of random variables that were functions of random variables; e.g., \(Y = e^X\) and \(Y = \mu + \sigma X\) , for \(X\) with a known distribution. In determining the cumulative distribution and density functions of these transformed random variables, we began with a manipulation of the CDF to a known distribution and proceeded to analyze the probability density function and integration bounds obtained. We then used a change of variables to determine the density of the transformed variable. Here we formalize the procedure, developing the change of variable theorem.

Theorem 2.1.1. If \(g: \mathbb{R} \to \mathbb{R}\) is continuous and monotonically increasing and \(X \in \mathbb{R}\) is a random variable, then the random variable \(Y = g(X)\) defined by setting \(y = g(x)\) for every realization of \(X\) has the cumulative distribution function

\[F_Y(y) = F_X(g^{-1}(y)), \quad (2.14)\]

and if \(X\) has a density, then the density of \(Y\) is given by

\[f_Y(y) = \frac{dg^{-1}(y)}{dy} f_X(g^{-1}(y)). \quad (2.15)\]

Proof: We know by the inverse function theorem that a local inverse of \(g\) exists, hence for any \(y\) , we may proceed as above,

\[\begin{aligned} F_Y(y) &= \mathbb{P}(Y < y) \\ &= \mathbb{P}(g(X) < y) \\ &= \mathbb{P}(X < g^{-1}(y)) \\ &= F_X(g^{-1}(y)). \end{aligned}\]

From here we may relate the density function of \(X\) to the density function of \(Y\) . We get

\[\begin{aligned} F_Y(y) &= F_X(g^{-1}(y)) \\ &= \int_{-\infty}^{g^{-1}(y)} f_X(s) ds \\ &\quad \text{(changing variables } s = g^{-1}(u), \frac{dg^{-1}(u)}{du} du = ds, \text{ by the chain rule)} \\ &= \int_{-\infty}^{y} \frac{dg^{-1}(u)}{du} f_X(g^{-1}(u)) du \end{aligned}\]

as desired. Notice that we took advantage of the monotonicity of \(g\) in our change of bounds, with \(s = g^{-1}(y)\) implying \(g^{-1}(u) = g^{-1}(y)\) , and hence \(u = y\) .

We may also relax the original condition to require only that \(g\) is monotonic (and not necessarily increasing). Working through the proof in the same manner, but allowing for monotonically decreasing \(g\) gives

\[f_Y(y) = \left| \frac{dg^{-1}(y)}{dy} \right| f_X(g^{-1}(y)). \quad (2.16)\]

2.2 Multivariate Distributions

We may generalize our work to include vectors of random variables,

\[X = \begin{pmatrix} X_1 \\ X_2 \\ \vdots \\ X_N \end{pmatrix}.\]

The cumulative distribution function then also generalizes to the joint cumulative distribution function of \(X\) , a function \(F: \mathbb{R}^N \to [0, 1]\) which satisfies

\[F(x_1, x_2, \dots, x_N) = \mathbb{P}(X_1 \le x_1, X_2 \le x_2, \dots, X_N \le x_N) \quad (2.17)\]

We say that the multivariate random variable, \(X\) , has a density,

\[f: \mathbb{R}^N \to \mathbb{R}_+,\]

if we may write

\[F(x_1, x_2, \dots, x_N) = \int_{-\infty}^{x_1} \cdots \int_{-\infty}^{x_N} f(s_1, \dots, s_N) ds_N \cdots ds_1. \quad (2.18)\]

As before, we require that \(f\) be integrable, nonnegative, and integrate to one.

The expected value of the multivariate random variable \(X\) , \(\mathbb{E}(X)\) , is given component wise

\[\mathbb{E}(X) = \begin{pmatrix} \mathbb{E}(X_1) \\ \mathbb{E}(X_2) \\ \vdots \\ \mathbb{E}(X_N) \end{pmatrix}, \quad (2.19)\]

and the generalization of variance, covariance, denoted \(\text{Cov}(X)\) is determined by

\[\text{Cov}(X) = \mathbb{E}((X - \mu)(X - \mu)'), \quad (2.20)\]

where \(X \in \mathbb{R}^N\) , \(\mu = \mathbb{E}(X)\) , and expectation is again componentwise in \(\text{Cov}(X) \in \mathbb{R}^{N \times N}\) . We will denote the \((i, j)\) component of \(\text{Cov}(X)\) as \(\sigma_{ij}\) , with \(\sigma_{ii} = \text{Var}(X_i)\) .

We will often denote the covariance matrix by \(\Sigma\) , and we will spend considerable time analyzing its properties both from a theoretical as well as empirical point of view (and oftentimes settling on the intersection of the two).

Example 2.2.1. The expectation operator is linear; viz.,

\[\mathbb{E}(w_1X_1 + w_2X_2) = w_1\mathbb{E}(X_1) + w_2\mathbb{E}(X_2). \quad (2.21)\]

for random variables, \(X_1\) and \(X_2\) , and scalars \(w_1\) and \(w_2\) . This generalizes to

\[\mathbb{E}\left(\sum_{i=1}^{N} w_i X_i\right) = \sum_{i=1}^{N} w_i \mathbb{E}(X_i).\]

We prove the result assuming that there exists a joint density function, \(f(\cdot)\) , but this is not necessary. We have in this case

\[\begin{aligned}\mathbb{E}(w_1X_1 + w_2X_2) &= \int_{-\infty}^{\infty} \int_{-\infty}^{\infty} (w_1X_1 + w_2X_2) f(x_1, x_2) dx_1 dx_2 \\ &\quad \text{(by the linearity of the integral operator)} \\ &= w_1 \int_{-\infty}^{\infty} \int_{-\infty}^{\infty} X_1 f(x_1, x_2) dx_1 dx_2 + w_2 \int_{-\infty}^{\infty} \int_{-\infty}^{\infty} X_2 f(x_1, x_2) dx_1 dx_2 \\ &= w_1 \mathbb{E}(X_1) + w_2 \mathbb{E}(X_2)\end{aligned}\]

as desired.

Example 2.2.2. The covariance matrix, \(\Sigma\) is positive semi-definite. We say that a matrix, \(A \in \mathbb{R}^{N \times N}\) , is positive semi-definite if for all \(w \in \mathbb{R}^N\) not identically zero,

\[w'Aw \ge 0, \quad (2.22)\]

with \(w'Aw = 0\) only when \(w \equiv 0\) .

Given a multivariate random variable, \(X \in \mathbb{R}^N\) , with \(\text{Cov}(X) = \Sigma\) , and a scalar vector \(w \in \mathbb{R}^N\) , the result follows by considering the variance of the random variable \(Y = w'X = \sum_{i=1}^N w_i X_i\) ; viz.,

\[\begin{aligned}\text{Var}(Y) &= \text{Var}(w'X) \\ &= \mathbb{E}\left((w'X - w'\mu)^2\right) \\ &= \mathbb{E}\left((w'(X - \mu))^2\right)\end{aligned}\]

where \(\mu = \mathbb{E}(X)\) . Continuing, we have by the linearity of the expectation operator that

\[\begin{aligned}\text{Var}(Y) &= \mathbb{E}(w'(X - \mu)(X - \mu)'w) \\ &= w'\mathbb{E}((X - \mu)(X - \mu)')w \\ &= w'\Sigma w.\end{aligned}\]

Now, we know from the definition of variance that \(\text{Var}(Y) \ge 0\) . The above relationship then implies \(w'\Sigma w \ge 0\) as well, proving the result.

We will make stronger claims about the covariance matrix, \(\Sigma\) , in future chapters, refining our assumptions about the composition of \(X\) to ensure that

\(\Sigma\) is positive definite; that is, inner products with nonzero vectors are strictly nonzero. The proof will be identical, with only a few comments added.

We may also consider linear combinations of the form \(BX\) for a multivariate random variable \(X\in\mathbb{R}^N\) and scalar matrix \(B\in\mathbb{R}^{M\times N}\) . We maintain linearity in expectation; viz.,

\[\mathbb{E}(BX)=B\mathbb{E}(X). \tag{2.23}\]

This can be shown directly since

\[BX=\begin{pmatrix} \sum_{i=1}^N b_{1i}X_i \\ \vdots \\ \sum_{i=1}^N b_{Mi}X_i \end{pmatrix}\]

so that

\[\mathbb{E}(BX)=\begin{pmatrix} \mathbb{E}\left(\sum_{i=1}^N b_{1i}X_i\right) \\ \vdots \\ \mathbb{E}\left(\sum_{i=1}^N b_{Mi}X_i\right) \end{pmatrix}=\begin{pmatrix} \sum_{i=1}^N b_{1i}\mathbb{E}\left(X_i\right) \\ \vdots \\ \sum_{i=1}^N b_{Mi}\mathbb{E}\left(X_i\right) \end{pmatrix}=B\mathbb{E}(X).\]

Similarly, \(\mathbb{E}(XB)=\mathbb{E}(X)B\) for scalar \(B\) with appropriate dimensions.

The covariance of a \(BX\) as above, \(Cov(BX)\) , is given by

\[Cov(BX)=BCov(X)B'. \tag{2.24}\]

This follows from the linearity of expectation since

\[\begin{aligned} Cov(BX) &= \mathbb{E}\left(\left(BX-\mathbb{E}(BX)\right)\left(BX-\mathbb{E}(BX)\right)'\right) \\ &= \mathbb{E}\left(B\left(X-\mathbb{E}(X)\right)\left(X-\mathbb{E}(X)\right)'B'\right) \\ &= B\mathbb{E}\left(\left(X-\mathbb{E}(X)\right)\left(X-\mathbb{E}(X)\right)'\right)B' \\ &= BCov(X)B'. \end{aligned}\]

Finally, if \(X\) and \(Y\) are univariate random variables taking their values in \(\mathbb{R}\) , we define

\[Cov(X,Y)=\mathbb{E}\left((X-\mu_X)(Y-\mu_Y)\right). \tag{2.25}\]

In terms of previous notation, then, if \(X\in\mathbb{R}^N\) , \(Cov(X_i,X_j)=\sigma_{ij}\) .

We may also consider the effect of arbitrary (continuous and injective) transformations on the distribution of a random variable, generalizing the change of variable theorem to the multivariate case.

Theorem 2.2.1. Let \(X\) be a multivariate random variable with \(X\in\mathbb{R}^N\) , and let

\[g:\mathbb{R}^N\rightarrow\mathbb{R}^N\]

be a one-to-one and continuous function. If \(f_X(\cdot)\) is the density of \(X\) , then the density of

\[Y=g(X)\]

is

\[f_Y(y) = f_X(g^{-1}(y)) \cdot \det(\nabla g^{-1}(y)) \quad (2.26)\]

where \(\nabla h\) denotes the Jacobian of a function \(h\) , and \(\det(\cdot)\) is the determinant function. The Jacobian, determinant, and change of variables in multivariate integration are discussed in the appendix.

The proof is exactly as before, accounting for the fact that we are working in \(\mathbb{R}^N\) and hence encounter the Jacobian rather than the derivative of the inverse of \(g\) .

The marginal distribution of \(X_j\) is denoted as in the univariate case as

\[F_j(x_j) = \mathbb{P}(X_j \le x_j)\]

and is given by

\[F(-\infty, \dots, x_j, \dots, \infty), \quad (2.27)\]

or in the case of densities, all upper bounds of integration are infinite except that related to the \(j\) th component, which is just \(x_j\) . When a density exists,

\[f_j(x) = \int_{-\infty}^{\infty} \cdots \int_{-\infty}^{\infty} f(s_1, \dots, s_{j-1}, x, s_{j+1}, \dots, s_N) ds_N \cdots ds_{j+1} ds_{j-1} \cdots ds_1 \quad (2.28)\]

is the \(j\) th marginal density.

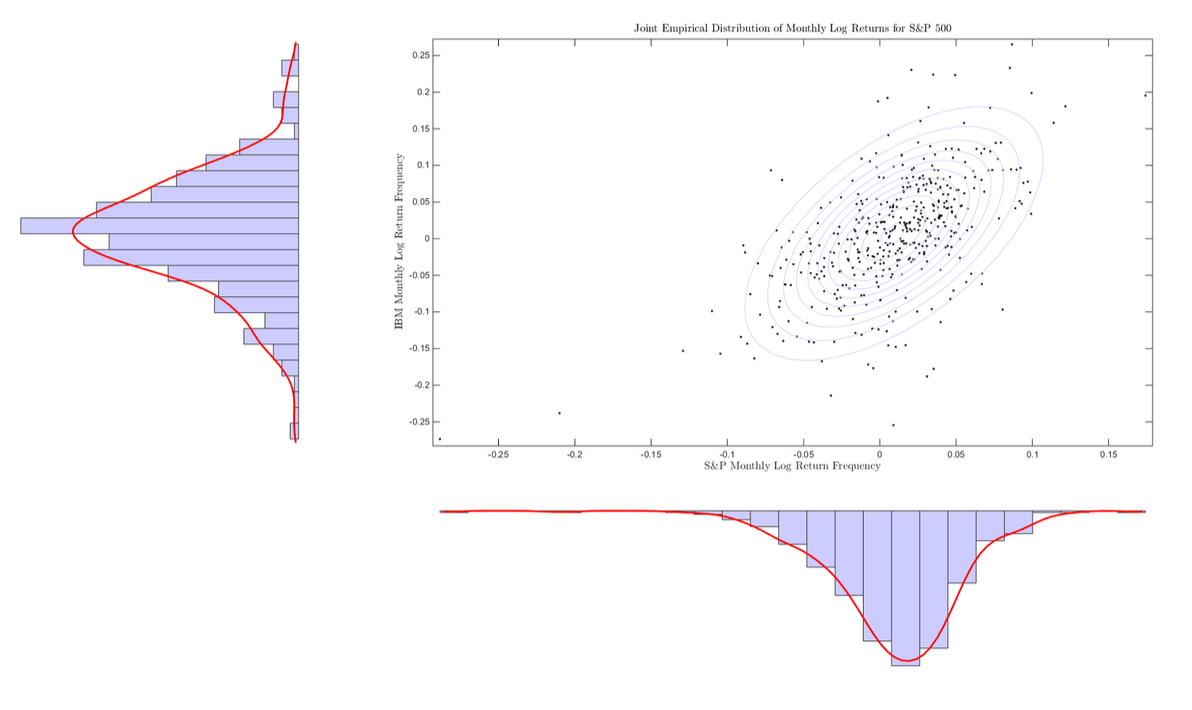

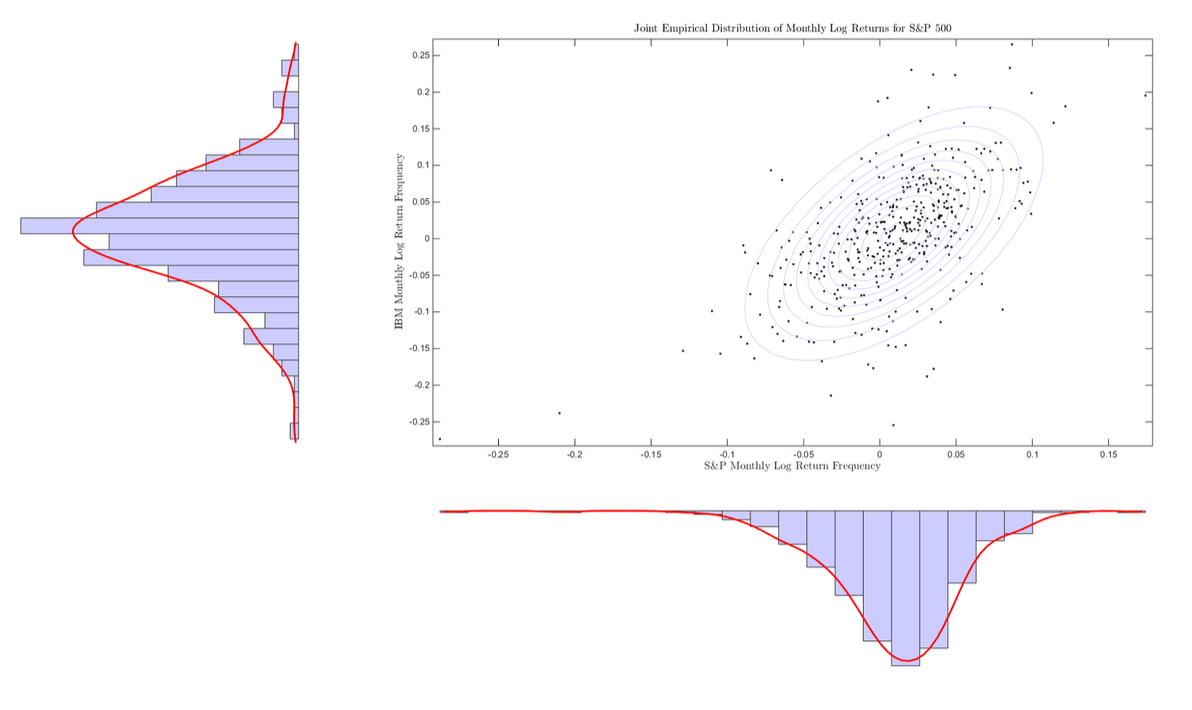

Example 2.2.3. Figure 2.2 shows an example joint distribution using empirical data. Here, monthly log returns for both the S&P 500 and IBM are shown in a scatterplot. Contour lines are shown for the best fit multivariate normal density, and marginal distributions are given outside the scatterplot. We may make a few observations:

- The jointly normal distribution assumption seems to be a poor fit overall, but there seems to be evidence that an ellipsoidal distribution is a decent approximation for the joint distribution.

- There seems to be a fairly strong linear relationship between the monthly returns of IBM and the S&P 500 [11, 25].

- Extreme events seem more tightly clustered on the downside.

We will return to the second observation when we establish the Capital Asset Pricing Model. At this point, we can say something to the effect of ‘IBM looks a lot like the market, with some random noise that oftentimes looks to be normally distributed,’ and leave it at that.

Two random variables, \(X_1\) and \(X_2\) , are said to be independent if

\[\mathbb{P}(X_1 \le x_1, X_2 \le x_2) = \mathbb{P}(X_1 \le x_1)\mathbb{P}(X_2 \le x_2).\]

Or equivalently,

\[F(x_1, x_2) = F_1(x_1) \cdot F_2(x_2), \quad (2.29)\]

Figure 2.4: Empirical joint distribution of log returns of the S&P 500 and IBM from 1980 through 2015, including marginal distributions. The scatterplot includes contour lines of a best fit multivariate normal density.

Figure 2.4: Empirical joint distribution of log returns of the S&P 500 and IBM from 1980 through 2015, including marginal distributions. The scatterplot includes contour lines of a best fit multivariate normal density.

where \(F_i(\cdot)\) is the marginal CDF. Independence, then, captures the notion that the probability of independent events is the product of their marginal probabilities, the outcome of one does not impact the others. In terms of densities (should the joint cumulative distribution function allow a density), we have that independent random variables have density

\[f(x_1, x_2) = f_1(x_1) \cdot f_2(x_2), \quad (2.30)\]

where again \(f_i(\cdot)\) is the marginal density of \(X_i\) .

Example 2.2.4. Returning to the return data used to construct Figure 2.4, we can verify that the empirical frequencies do not give an indication of independence between the two random variables. Our belief that these series should not be independent stems from our previous observation of the apparent relationship between the two. In particular, the ellipsoidal contour lines in Figure 2.4 bolster this claim.

We aren't in need of much theory, however: a little verification is all we need, utilizing Equation (2.30). We may look at the joint probability

\[\mathbb{P}(r_{IBM} < r_\tau, r_{S\&P} < r_\tau)\]

and compare this to

\[\mathbb{P}(r_{IBM} < r_\tau)\mathbb{P}(r_{S\&P} < r_\tau)\]

for various values of \(r_\tau\) .

Looking at \(r_\tau = 0\) , for instance,

\[\begin{aligned}\mathbb{P}(r_{IBM} < r_\tau, r_{S\&P} < r_\tau) &= 0.333 \\ \mathbb{P}(r_{IBM} < r_\tau)\mathbb{P}(r_{S\&P} < r_\tau) &= 0.225,\end{aligned}\]

giving evidence from the empirical distribution that these are not independent, as expected. Notice that in this example independence will underweight the probability of joint downward movements.

A corollary to this observation is that risk metrics should account for joint as well as marginal distributions. The covariance matrix, \(\Sigma\) , is one method of capturing these joint dynamics, but as we see in the figure, the assumption of joint normality is likely not accurate. Extensions have been made to incorporate various joint distributions given marginal distributions as input. This is often done with copula functions which are a popular and flexible tool which we shall encounter later on.

The variance of the sum of univariate random variables \(X\) and \(Y\) with \(\mathbb{E}(X) = \mu_X\) and \(\mathbb{E}(Y) = \mu_Y\) is,

\[\begin{aligned}\mathrm{Var}(X+Y) &= \mathbb{E}\left((X+Y-\mu_X-\mu_Y)^2\right) \\ &= \mathbb{E}\left((X-\mu_X)^2\right) + \mathbb{E}\left((Y-\mu_Y)^2\right) + 2\mathbb{E}\left((X-\mu_X)(Y-\mu_Y)\right) \\ &= \sigma_X^2 + \sigma_Y^2 + 2\sigma_{XY}.\end{aligned}\]

In the case of independent events the cross term, \(\sigma_{XY}\) is zero, thus if \(X\) and \(Y\) are independent \(\mathrm{Var}(X+Y) = \mathrm{Var}(X) + \mathrm{Var}(Y)\) . This is left to the reader as an exercise.

This generalizes to the case of \(N\) jointly independent random variables, where, for instance the joint probability density function may be written as

\[f(x_1, \dots, x_N) = \prod_{i=1}^N f_i(x_i), \tag{2.31}\]

the joint distribution function as

\[F(x_1, \dots, x_N) = \prod_{i=1}^N F_i(x_i), \tag{2.32}\]

and joint probabilities as

\[\mathbb{P}(X_1 \le x_1, \dots, X_N \le x_N) = \prod_{i=1}^N \mathbb{P}(X_i \le x_i) \tag{2.33}\]

with the same notation used previously. Intuitively, it is this final formulation that might resonate with the non-technical usage of independence: when one thinks of multiple coin flips, one instinctively multiplies probabilities to determine outcomes. Sequential coin flips are the prototypical example of independent and identically distributed random variables.

We say that a sequence of random variables \(\{X_i\}_{i=1}^N\) are independent and identically distributed, or iid, if each \(X_i\) has the same distribution and all pairs, \((X_i, X_j)\) are pairwise independent.

2.2.1 Multivariate Normal Distribution

The multivariate normal density, or the density function for a multivariate normal random variable, is a two parameter function, \(\phi_{\mu,\Sigma}(\cdot)\) , given by

\[\phi_{\mu,\Sigma}(x)=\frac{1}{(2\pi)^{\frac{N}{2}}}\frac{1}{\det(\Sigma)^{\frac{1}{2}}}\exp\left(-\frac{1}{2}(x-\mu)'\Sigma^{-1}(x-\mu)\right) \quad (2.34)\]

for \(x\in\mathbb{R}^N\) .

As before, if a random variable, \(X\) , has the above density, we say that it is multivariate normal, and denote this by \(X\sim N(\mu,\Sigma)\) . The cumulative distribution of a normal random variable will be denoted as before by \(\Phi_{\mu,\Sigma}\) . We state without proof that in such a case

\[\begin{aligned}\mathbb{E}(X) &= \mu \\ \text{Cov}(X) &= \Sigma.\end{aligned}\]

Implicit in the definition is that \(\Sigma\) is invertible, and therefore, since \(\Sigma\) is a covariance matrix and hence positive semidefinite, we must have that \(\Sigma\) is positive definite.

We also note that linear combinations of jointly normal random variables are again normal. In the context of portfolio management, if the log returns, say, of various assets are assumed to be jointly normal, then a portfolio (that is a weighted sum) of these log returns will also be normal, with tractable mean and variance. Some care is being taken here to say ‘jointly normal.’ This is due to the fact that it is possible for marginal distributions to be normal without the joint distribution being multivariate normal. We are precluding this possibility.

We have in the general case the if \(X\) is multivariate normal with \(X\sim N(0,I)\) , for \(I\) the identity matrix, \(0\) a vector of zeros, and \(X\in\mathbb{R}^N\) , then for \(a\in\mathbb{R}^N\) and nonsingular \(B\in\mathbb{R}^{N\times N}\) , the random variable \(Y=a+BX\) is distributed as \(Y\sim N(a,BB')\) . We may employ the change of variables theorem for the multivariate case, but we choose to follow the same method as was seen in the univariate case. We have

\[\begin{aligned}F_Y(y) &= \mathbb{P}(Y < y) \\ &= \mathbb{P}(a+BX < y) \\ &= \mathbb{P}\left(X < B^{-1}(y-a)\right) \\ &= F_X\left(X < B^{-1}(y-a)\right).\end{aligned}\]

This gives that \(F_Y(y) = \Phi_{0,I}(B^{-1}(y-a))\) . Looking now at the formulation in terms of the density of \(X\) , we have

\[\begin{aligned}F_Y(y) &= \Phi_{0,I}(B^{-1}(y-a)) \\&= \frac{1}{(2\pi)^{\frac{N}{2}}} \int_{s \le B^{-1}(y-a)} \exp\left(-\frac{1}{2}s's\right) ds \\&= \text{(changing variables } x = Bs + a, \frac{1}{\det(B)}dx = ds) \\&= \frac{1}{(2\pi)^{\frac{N}{2}}} \frac{1}{\det(B)} \int_{x \le y} \exp\left(-\frac{1}{2}(B^{-1}(x-a))'(B^{-1}(x-a))\right) dx \\&= \frac{1}{(2\pi)^{\frac{N}{2}}} \frac{1}{\det(B)} \int_{x \le y} \exp\left(-\frac{1}{2}(x-a)'(B^{-1})'B^{-1}(x-a)\right) dx \\&= \frac{1}{(2\pi)^{\frac{N}{2}}} \frac{1}{\det(B)} \int_{x \le y} \exp\left(-\frac{1}{2}(x-a)'(BB')^{-1}(x-a)\right) dx\end{aligned}\]

where the density function is now clearly seen to be \(\phi_{a,BB'}\) , and so \(Y \sim N(a, BB')\) .

This example, too, indicates how we may simulate multivariate normal random variables. Given an arbitrary covariance matrix, \(\Sigma\) , and vector, \(\mu\) , if one can write \(\Sigma = \Lambda\Lambda'\) , then \(Y = \mu + \Lambda X\) , with \(X \sim N(0, I)\) is distributed as \(Y \sim N(\mu, \Sigma)\) .

In our proof, we required that \(B\) was invertible. A more general proof is available, resorting to so-called moment generating functions, but we do not cover these here. Suffice to say that the proof generalizes to the case \(Y = a + BX\) for \(a \in \mathbb{R}^M\) , \(B \in \mathbb{R}^{M \times N}\) , and \(X\) multivariate normal of dimension \(N\) .

Example 2.2.5. Many paragraphs have already been spent discussing the potential lack of applicability of the normal distribution for equity returns. We continue this arc, again looking at figure 2.2, where an estimated \(\hat{\mu}\) and \(\hat{\Sigma}\) are used to draw the contour lines of the multivariate normal density best fitting the data. To do this, contour lines are simply determined by

\[\mathcal{I}_c = \left\{x \mid (x - \hat{\mu})'\hat{\Sigma}^{-1}(x - \hat{\mu}) = c\right\} \quad (2.35)\]

for various values of \(c\) . The fact that these sets fix \(\phi_{\hat{\mu}, \hat{\Sigma}}\) is evident from the definition in (2.34).

Clearly there is, as in the univariate case, some reasonable fit, but extreme values and peakedness seem to still dominate, nonetheless.

2.2.2 Multivariate Log-Normal Distribution

The multivariate log-normal distribution is defined as before. We say that \(Y\) is a multivariate log-normal random variable if for some \(X \sim N(\mu, \Sigma)\) ,

\[Y = \exp(X)\]

or, equivalently, \(Y\) is log-normal if its logarithm is normal. In the above, exponentiation and logarithms are componentwise; viz., for \(X \in \mathbb{R}^N\) ,

\[\exp(X) = \begin{pmatrix} \exp(X_1) \\ \vdots \\ \exp(X_N) \end{pmatrix}.\]

The multivariate log-normal distribution is again described by two parameters, \(\mu\) , and \(\Sigma\) , and denoted \(Y \sim LN(\mu, \Sigma)\) . The density function for lognormal \(Y\) taking values in \(\mathbb{R}^N\) is given by

\[l_{\mu, \Sigma}(x) = \tag{2.36}\]

\[\frac{1}{(2\pi)^{\frac{N}{2}} \det(\Sigma)^{-\frac{1}{2}}} \exp\left(-\frac{1}{2}(\log(x) - \mu)' \Sigma^{-1} (\log(x) - \mu)\right) \cdot \prod_{i=1}^{N} \frac{1}{x_i}\]

for \(x \in \mathbb{R}_+^N\) .

Example 2.2.6. We may derive the density \(l_{\mu, \Sigma}\) by use of the change of variable theorem. For \(Y = \exp(X) = g(X)\) , with \(X\) having density \(\phi_{\mu, \Sigma}(\cdot)\) , the inverse of \(g\) is given by

\[g^{-1}(Y) = \begin{pmatrix} \log(Y_1) \\ \vdots \\ \log(Y_N) \end{pmatrix} = \begin{pmatrix} g_1^{-1}(Y_1) \\ \vdots \\ g_N^{-1}(Y_N) \end{pmatrix}.\]

To determine the Jacobian of \(g^{-1}(\cdot)\) , we must calculate the partial derivatives \(\frac{\partial g_i^{-1}}{\partial y_j}\) . From the above, these are exactly given by

\[\frac{\partial g_i^{-1}}{\partial y_j} = \begin{cases} \frac{1}{y_j} & \text{if } i = j \\ 0 & \text{otherwise.} \end{cases}\]

The Jacobian, \(\nabla g^{-1}\) , is therefore a diagonal matrix, whose determinant is found simply to be

\[\prod_{i=1}^{N} \frac{1}{y_i}.\]

We are now ready to derive the density function for \(Y\) :

\[\begin{aligned} l_{\mu, \Sigma}(y) &= \phi_{\mu, \Sigma}(g^{-1}(y)) |\det(\nabla g^{-1}(y))| \\ &= \phi_{\mu, \Sigma}(\log(y)) \prod_{i=1}^{N} \frac{1}{y_i} \end{aligned}\]

which is exactly (2.36).

The mean and covariance of \(Y \sim LN(\mu, \Sigma)\) are given as

\[\begin{aligned}\mathbb{E}(Y)_i &= \exp\left(\mu_i + \frac{1}{2}\sigma_i^2\right) \\ \text{Cov}(Y)_{ij} &= \exp\left(\mu_i + \mu_j + \frac{1}{2}(\sigma_i^2 + \sigma_j^2)\right) \exp(\sigma_{ij} - 1)\end{aligned}\]

where \(\sigma_{ij}\) denotes the \((i, j)\) component of the matrix, \(\Sigma\) as usual. We do not prove this result.

2.2.3 Multivariate Student \(t\) Distribution

The multivariate Student \(t\) distribution follows the same method of extension as the multivariate normal density. We say \(T\) is distributed as a multivariate Student \(t\) distribution with \(\nu \in \mathbb{N}_+\) degrees of freedom if \(T\) has density

\[\text{st}_{\mu, \Sigma; \nu}(x) = \frac{\Gamma\left(\frac{\nu+N}{2}\right)}{\Gamma\left(\frac{\nu}{2}\right)(\nu\pi)^{N/2} \det(\Sigma)^{1/2}} \left(1 + \frac{1}{\nu}(x - \mu)'\Sigma^{-1}(x - \mu)\right)^{-\frac{\nu+N}{2}}, \quad (2.37)\]

where \(\Gamma(\cdot)\) is the gamma function.

It may be shown that the marginals of the multivariate Student \(t\) are themselves univariate Student \(t\) with density \(\text{st}_{\mu_i, \sigma_i^2; \nu}(x)\) . As a result, when \(\nu > 1\) , then, \(\mathbb{E}(T) = \mu\) . Finally, for \(\nu > 2\) , \(\text{Cov}(T) = \frac{\nu}{\nu-2}\Sigma\) .

Example 2.2.7. Like the multivariate normal distribution, we may show that an affine transformation of \(X \in \mathbb{R}^N\) with \(X \sim St(\mu, \Sigma; \nu)\) ,

\[a + BX,\]

is again distributed as a Student \(t\) with \(\nu\) degrees of freedom, with

\[a + BX \sim St(a + B\mu, B\Sigma B'; \nu).\]

The proof follows the multivariate normal example, and the proof for nonsingular \(B\) is left to the reader as an exercise.

Much as in the case for multivariate normals, then, we have that portfolios of Student \(t\) distributed random variables with \(\nu\) degrees of freedom are again distributed as Student \(t\) with \(\nu\) degrees of freedom. Further, the isocontours of the Student \(t\) distribution are similarly defined.

2.3 Convergence Results and Estimators

Many are familiar with calculating the sample mean from observations \(\{x_i\}_{i=1}^N\) as

\[\hat{\mu} = \frac{1}{N} \sum_{i=1}^{N} x_i. \quad (2.38)\]

The case in which this is unassailably correct, however, is when each \(x_i\) is drawn from a distribution \(X_i\) , and \(\{X_i\}_{i=1}^N\) are iid. But the above is really an instance of a random variable; namely

\[M_N = \frac{1}{N} \sum_{i=1}^N X_i.\]

If the \(\{X_i\}_{i=1}^N\) are iid, with mean, \(\mu\) , and standard deviation, \(\sigma\) , we know from (2.21) that

\[\begin{aligned}\mathbb{E}(M_N) &= \mathbb{E}\left(\frac{1}{N} \sum_{i=1}^N X_i\right) \\ &= \frac{1}{N} \sum_{i=1}^N \mathbb{E}(X_i) \\ &= \mu.\end{aligned}\]

What we don't know—yet—is how \(M_N\) is distributed. That is, we know that the expected value is what we'd hope it to be, but we don't have an indication of just how big \(N\) should be to have a reasonable estimate of the mean, say.

In fact, \(N\) disappeared in our calculation above. What we would hope is that as \(N\) gets large, \(M_N\) is distributed more 'tightly' around \(\mu\) . Or, put another way, we hope that the probability that \(M_N\) is too far away from \(\mu\) as \(N\) gets large gets arbitrarily small. This second formulation is at the heart of the Weak Law of Large Numbers, and is descriptive of convergence in probability.

We say that a sequence of real valued random variables, \(\{X_i\}\) converges in probability to \(X\) if for any \(\epsilon > 0\) ,

\[\lim_{N \to \infty} \mathbb{P}(|X_N - X| > \epsilon) = 0. \quad (2.39)\]

Theorem 2.3.1. (Weak Law of Large Numbers) Let \(\{X_i\}\) be iid random variables taking values in \(\mathbb{R}\) , with \(\mathbb{E}(X_i) = \mu\) , and \(\text{Var}(X_i) = \sigma^2\) for each \(i\) . Then

\[M_N = \frac{1}{N} \sum_{i=1}^N X_i\]

converges in probability to \(\mu\) .

The proof resorts to two lemmas: Markov's Inequality and Chebyshev's Inequality. We prove each in turn and then return to prove 2.3.1.

Lemma 2.3.1. (Markov's Inequality) Let \(X\) be a nonnegative random variable, and \(a\) some positive constant. Then

\[\mathbb{P}(X \ge a) \le \frac{1}{a} \mathbb{E}(X).\]

Proof. We begin by introducing the indicator random variable

\[1_A(\omega) = \begin{cases} 1 & \text{if } \omega \in A \\ 0 & \text{otherwise.} \end{cases}\]

Since \(X\) is a positive random variable, we have

\[\begin{aligned} \mathbb{E}(X) &\ge \mathbb{E}(X \cdot 1_{X \ge a}) \\ &\ge a\mathbb{E}(1_{X \ge a}) \\ &= a\mathbb{P}(X \ge a). \end{aligned}\]

A geometric interpretation can help orient these steps. Recall that for \(f(x)\) the density of \(X\) , \(f(x) \ge 0\) for all \(x\) , and

\[\begin{aligned} \mathbb{E}(X) &= \int_0^\infty xf(x)dx \\ &= \int_0^a xf(x)dx + \int_a^\infty xf(x)dx \\ &\ge \int_a^\infty xf(x)dx \\ &\ge a \int_a^\infty f(x)dx \\ &= a\mathbb{P}(X \ge a). \end{aligned}\]

The latter proof is only shown for clarity, however, as the former is a stronger result, not requiring an assumption that \(X\) has a density, \(f\) .

Lemma 2.3.2. (Chebyshev's Inequality) Let \(X\) be a random variable taking values in \(\mathbb{R}\) with \(\mathbb{E}(X) = \mu\) and \(\text{Var}(X) = \sigma^2\) . Then

\[\mathbb{P}(|X - \mu| \ge a) \le \frac{\sigma^2}{a^2}.\]

Proof. Clearly, for positive \(a\) ,

\[\mathbb{P}(|X - \mu| \ge a) = \mathbb{P}(|X - \mu|^2 \ge a^2).\]

Now, \((X - \mu)^2\) is a nonnegative random variable, we may apply Markov's Inequality with \(\mathbb{E}((X - \mu)^2)\) and get

\[\mathbb{P}(|X - \mu|^2 \ge a^2) \le \frac{1}{a^2} \mathbb{E}((X - \mu)^2).\]

We know that \(\mathbb{E}((X - \mu)^2) = \sigma^2\) , so, putting these results together, we obtain

\[\mathbb{P}(|X - \mu| \ge a) \le \frac{\sigma^2}{a^2},\]

proving the result.

Notice that in both lemmas that proceeded, no distributional assumptions were used or needed. In fact, the existence of the mean and variance (with finiteness of the former) were the only statistical properties assumed.

We are now in a position to prove the Weak Law of Large Numbers. We again assume that \(\{X_i\}\) are a sequence of univariate iid random variables with mean \(\mu\) and variance \(\sigma^2\) . We have already shown that for

\[M_N = \frac{1}{N} \sum_{i=1}^{N} X_i\]

that \(E(M_N) = \mu\) . Using the fact that the variance of the sum of independent random variables is the sum of their variances, we also have

\[\begin{aligned} Var(M_N) &= Var\left(\frac{1}{N} \sum_{i=1}^{N} X_i\right) \\ &= \frac{1}{N^2} Var\left(\sum_{i=1}^{N} X_i\right) \\ &= \frac{1}{N^2} \sum_{i=1}^{N} Var(X_i) \\ &= \frac{1}{N^2} \sum_{i=1}^{N} \sigma^2 \\ &= \frac{1}{N} \sigma^2. \end{aligned}\]

Notice that the mean remained fixed while the variance, a measure of dispersion of \(M_N\) , scaled by \(\frac{1}{N}\) .

Using Chebyshev's Inequality, we have for any \(\epsilon > 0\) ,

\[\mathbb{P}(|M_N - \mu| \ge \epsilon) \le \frac{\sigma^2}{N} \frac{1}{\epsilon^2}.\]

For a fixed \(\epsilon\) , then, the right hand side goes to zero as \(N \to \infty\) , completing the proof.

The Weak Law of Large Numbers is presented here as a way in which to introduce the concept of estimators. Many of the quantities we take as granted (e.g., \(\mu\) and \(\Sigma\) in Modern Portfolio Theory) rely on estimated parameters in practice. As such, we are best served understanding their statistical properties. The Weak Law gives some indication about the distribution of \(\hat{\mu} = \frac{1}{N} \sum_{i=1}^{N} X_i\) , or at least gives a convergence result.

We might still want to know the actual distribution of \(M_N\) . This would of course be a stronger result, and requires convergence in distribution. We say that a sequence of real valued random variables \(\{X_i\}\) with distribution functions \(\{F_i(\cdot)\}\) , respectively, converges in distribution to \(X\) if

\[\lim_{N \to \infty} F_N(X) = F(X), \quad (2.40)\]

where \(F(\cdot)\) is the distribution function of \(X\) . Convergence in probability implies convergence in distribution in the sense that if real valued random variables \(\{X_i\}\) with distribution functions \(\{F_i(\cdot)\}\) converge in probability to \(X\) ,

\[X_N \to X\]

then

\[F_N \to F.\]

The Central Limit Theorem looks at the distribution of \(M_N\) and is a remarkable result. It states that for \(\{X_i\}\) iid real random variables with finite mean and variance, \(\mu\) and \(\sigma^2\) , respectively. Then the cumulative distribution function, \(F_N\) , of the random variable

\[Z_N = \frac{\sqrt{N}(M_N - \mu)}{\sigma}\]

satisfies

\[\lim_{N \to \infty} F_N(x) = \Phi(x). \quad (2.41)\]

That is, \(Z_N\) has a limiting normal distribution. This is regardless of the distribution of the \(X_i\) 's. The proof is outside the scope of our current work.

2.3.1 Estimators and Bias

An estimator of a given quantity is simply a rule for calculating that quantity based on observed data. If we denote the quantity being estimated as \(\theta\) and the estimator as \(\hat{\theta}\) , we define the bias of the estimator \(\hat{\theta}\) as

\[\text{Bias}(\hat{\theta}) = \mathbb{E}(\hat{\theta}) - \theta. \quad (2.42)\]

An unbiased estimator has zero bias and satisfies

\[\mathbb{E}(\hat{\theta}) = \theta.\]

We have already encountered an estimator of the mean, \(\mu\) , of a sequence of iid random variables, \(\{X_i\}\) , finding that

\[M_N = \frac{1}{N} \sum_{i=1}^{N} X_i\]

satisfied \(\mathbb{E}(M_N) = \mu\) immediately in our investigation. We now may state that the estimator \(M_N\) is an unbiased estimator of the mean.

Example 2.3.1. We next give an unbiased estimator of the variance of iid random variables, \(\{X_i\}\) , each with mean \(\mu\) and variance \(\sigma^2\) . Let

\[s_N = \frac{1}{N-1} \sum_{i=1}^{N} (X_i - \hat{\mu})^2, \quad (2.43)\]

with \(\hat{\mu} = \frac{1}{N} \sum_{i=1}^{N} X_i\) as before. Then

\[\mathbb{E}(s_N) = \sigma^2.\]

The term \(1/(N-1)\) is somewhat surprising on first pass. It will be slightly more intuitive when we encounter the estimate of variance again in ordinary least squares where degrees of freedom will be given more color as well. In the meantime, the current proof shows that we require \(N-1\) in the denominator to ensure that our estimator is unbiased.

Proof. Let \(X\) be distributed as each of the \(X_i\) , and look at

\[\mathbb{E} \left( \sum_{i=1}^{N} (X_i - \hat{\mu})^2 \right) = \sum_{i=1}^{N} \mathbb{E} \left( (X_i - \hat{\mu})^2 \right),\]

and notice that for each summand

\[\mathbb{E} \left( (X_i - \hat{\mu})^2 \right) = \mathbb{E}(X_i^2) - 2\mathbb{E}(X_i\hat{\mu}) + \mathbb{E}(\hat{\mu}^2),\]

so that the sum becomes

\[\begin{aligned} \mathbb{E} \left( \sum_{i=1}^{N} (X_i - \hat{\mu})^2 \right) &= \sum_{i=1}^{N} \mathbb{E}(X_i^2) - 2 \sum_{i=1}^{N} \mathbb{E}(X_i\hat{\mu}) + \sum_{i=1}^{N} \mathbb{E}(\hat{\mu}^2) \\ &= N\mathbb{E}(X^2) - 2 \sum_{i=1}^{N} \mathbb{E}(X_i\hat{\mu}) + N\mathbb{E}(\hat{\mu}^2) \\ &= N\mathbb{E}(X^2) - 2\mathbb{E}(N\hat{\mu}\hat{\mu}) + N\mathbb{E}(\hat{\mu}^2) \\ &= N\mathbb{E}(X^2) - 2N\mathbb{E}(\hat{\mu}^2) + N\mathbb{E}(\hat{\mu}^2) \\ &= N \left( \mathbb{E}(X^2) - \mathbb{E}(\hat{\mu}^2) \right) \end{aligned}\]

We look at \(\mathbb{E}(X^2)\) and \(\mathbb{E}(\hat{\mu}^2)\) in turn.

Now, we know that \(\text{Var}(X) = \mathbb{E}(X^2) - \mu^2\) , giving

\[\mathbb{E}(X^2) = \sigma^2 + \mu^2.\]

Looking at \(\mathbb{E}(\hat{\mu}^2)\) next, we require the variance and mean of \(\hat{\mu}^2\) and apply the same relationship. We have already established that

\[\begin{aligned} \mathbb{E}(\hat{\mu}) &= \mu \\ \text{Var}(\hat{\mu}) &= \frac{1}{N}\sigma^2. \end{aligned}\]

This gives that

\[\mathbb{E}(\hat{\mu}^2) = \frac{1}{N}\sigma^2 + \mu^2.\]

Putting the above together, we have

\[\begin{aligned}\mathbb{E}\left(\sum_{i=1}^N(X_i-\hat{\mu})^2\right) &= N\left(\mathbb{E}(X^2)-\mathbb{E}(\hat{\mu}^2)\right) \\ &= N\left(\sigma^2+\mu^2-\left(\frac{1}{N}\sigma^2+\mu^2\right)\right) \\ &= N\left(\frac{N-1}{N}\sigma^2\right) \\ &= (N-1)\sigma^2.\end{aligned}\]

Dividing both sides by \(N-1\) proves the result, and \(s_N\) is an unbiased estimator of the variance of the iid random variables \(X_i\) .

We have surreptitiously seen estimators in our work already. Whenever a fit of a distribution has been shown, we have estimated the mean and variance (and covariance in one case) of the underlying distributions using the above estimators. Implicit in this estimation is that the random variables under consideration are iid. Specifically, we repeatedly assumed, then, that daily log returns of the S&P are independent. This is actually less of a sure assumption than one might have guessed.

Amir Khandani and Andrew Lo [17] exhibit that a stock trading strategy that buys the last day’s losers and sells the last day’s winners is profitable – and their result is fairly robust within the context they study. If returns were independent, such a strategy should return something indistinguishable from zero. The fact that this is not the case implies that there is some dependence between yesterday’s returns and todays. A simple codification of the finding would be something like

\[r_t = \phi r_{t-1} + \epsilon_t\]

for the most extreme winners and losers each day in the market. The equation above is an example of an autoregressive model, and it says that far from being independent, there is a structural form between daily returns.

Before incorporating your new hedge fund in Delaware where you plan to exploit the strategy just exhibited, know this: it won’t work. Short time scale strategies that center around daily close prices are virtually impossible to execute for two reasons in particular: 1) a massive amount of each day’s trading volume is centered around the closing bell, so that achieving a fill on the exact close price is either very tricky or very expensive; and 2) a significant amount of the variation in stock prices comes between the close and the open (that is, when you can’t trade) so that waiting till the open to get your fills downgrades the strategy to random noise.

Knowing that the strategy isn’t viable in terms of execution does not negate its usefulness, however. For example, Lo and Khandani look at how this simplistic strategy did during the (wholly improbable) Quant Crisis we have alluded to previously – and it fared terribly. Performance over other periods was surprisingly stellar, and the crisis days in August 2007 were outliers for the strategy.

Their reversion strategy, then, provided a simple template to examine observations in the market through an interpretable lens.

While we won't present a solution to the apparent lack of independence of daily returns, we discuss these results to give some insight into the tension between estimation in practice, theory, and empirical findings.

2.3.2 Consequences and Toy Models: CAPM Lite

A couple observations are in order at this point. We have seen previously in examples like Figures 2.1.3 and 2.2 and their related discussions that understanding a random variable's distribution is no easy task. The Weak Law of Large Numbers and the Central Limit Theorem prove results about estimation and distribution of sequences of random variables. In the context of math finance or portfolio management, our mind wanders to an application of these powerful results.

Consider a model where each stock's returns, \(r_i\) , are iid with mean and standard deviation, \(\mu_S\) and \(\sigma_S\) , respectively. The Central Limit Theorem implies that the mean and variance of the return of an evenweighted portfolio,

\[r_{\Pi} = \frac{1}{N} \sum_{i=1}^{N} r_i\]

will, in the limit as \(N\) gets large be normal with mean \(\mu\) and standard deviation \(\frac{1}{\sqrt{N}}\sigma\) . That is, in this toy example we could maintain a fixed level of return, \(\mu\) , while reducing uncertainty as measured by volatility to nearly zero. Of course, this isn't possible in practice since all stocks aren't identical, but as a prologue, we have some motivation to consider diversification as such when constructing a portfolio.

Figure 2.4 gives the next version of a toy model to consider. We might assume that a given stock's returns are comprised of a weighted systemic piece and an idiosyncratic piece, both random variables, with the systemic and idiosyncratic pieces being pairwise independent. Something like

\[r_i = \beta_i m + \epsilon_i, \tag{2.44}\]

where in the case of the figure, we are saying that IBM's returns ( \(r\) ) are linearly related to the returns to the S&P 500 ( \(m\) ), plus some error. We see that this is really just an approximation used for insight and not a model derived from empirical validation, but the implications are interesting.

For example, codifying what we mean by systemic and idiosyncratic, we may for instance assume that

\[\begin{aligned} \mathbb{E}(\epsilon_i) &= 0 \\ \text{Var}(\epsilon_i) &= \sigma_i^2 \\ \text{Cov}(m, \epsilon_i) &= 0 \\ \text{Var}(m) &= \sigma_m^2. \end{aligned}\]

An evenweight portfolio's returns in this case look like

\[\begin{aligned}r_{\Pi} &= \frac{1}{N} \sum_{i=1}^{N} r_i \\&= \frac{1}{N} \sum_{i=1}^{N} \beta_i s + \epsilon_i \\&= \frac{s}{N} \sum_{i=1}^{N} \beta_i + \frac{1}{N} \sum_{i=1}^{N} \epsilon_i.\end{aligned}\]

The mean and variance of \(r_{\Pi}\) , using (2.21) and (2.24) along with the above assumptions, are

\[\begin{aligned}\mathbb{E}(r_{\Pi}) &= \frac{\sum_i \beta_i}{N} \mathbb{E}(m) \\Var(r_{\Pi}) &= \left( \frac{\sum_i \beta_i}{N} \right)^2 \sigma_m^2 + \frac{1}{N^2} \sum_{i=1}^{N} \sigma_i^2,\end{aligned}\]

so that if, say \(\sum_i \beta_i \approx N\) (a reasonable assumption as we shall see later) we have that the evenweight portfolio has returns similar to the market, but the idiosyncratic variance is greatly decreased (imagine all of the \(\sigma_i \approx \sigma\) for some \(\sigma\) , then the idiosyncratic variance is \(\frac{1}{N}\) what it would be holding any single name).

Of course, the attractiveness of reducing idiosyncratic noise raises the question: Why not just own the market, then? We will encounter this question again when we have more tools under our belt.

The first toy model says: if all stocks are iid, you can diversify away all the risk — variance shrinks to zero as N grows, while expected return stays fixed.

The second toy model introduces structure: each stock's return decomposes into a market piece (β_i m) and an idiosyncratic piece (ε_i). Now when you diversify:

- The idiosyncratic variance still shrinks (the 1/N² Σσ_i² term vanishes as N grows)

- But the market variance stays — that (Σβ_i / N)² σ_m² term doesn't go away

So the message is: you can diversify away idiosyncratic risk, but not systematic risk. No matter how many stocks you hold, you're still exposed to the market.

This is why the chapter ends with "why not just own the market?" — if idiosyncratic risk can be eliminated by diversification, what's left is market risk, which you'd have anyway by just holding the index.

So Vanguard, for example, stops here and says, 'There's no reason not to just own the market!' Yes? Meaning, any further development Chris does must be a search for some kind of returns to active management? (I guess I'm defining "active management" as anything at all other than owning the index.)

Exactly right. The passive investing philosophy stops here: systematic risk is unavoidable, idiosyncratic risk is diversifiable, therefore hold the market-cap-weighted index and minimize fees.

Any case for doing something else must argue one of:

- Alpha exists — some managers can generate excess returns (after fees and risk adjustment)

- Factor tilts pay off — size, value, momentum, etc. offer premiums beyond market beta (Fama-French territory from Ch 1)

- Risk isn't just variance — if you care about tail risk, drawdowns, or asymmetry, "minimize variance" isn't the right objective

- Constraints matter — liability matching, tax optimization, ESG screens, etc.

Given that Chris has already flagged fat tails, asymmetry, and the inadequacy of variance as a risk measure, I'd expect the book to build toward (3) — coherent risk measures, CVaR, and optimization objectives that go beyond mean-variance.

- Using the S&P daily log return data, \(\{r_t\}_{t=1}^N\) , and estimating the mean and standard deviation of these log returns by

\[\begin{aligned}\hat{\mu} &= \frac{1}{N} \sum_{t=1}^{N} r_t \\ \hat{\sigma}^2 &= \frac{1}{N-1} \sum_{t=1}^{N} (r_t - \hat{\mu})^2,\end{aligned}\]