Try an interactive version of this dialog: Sign up at solve.it.com, click Upload, and pass this URL.

Hi Solveit, I'm Dan. I'm trained in physical oceanography and experienced in state-space modeling, finite difference modeling, and I've been a data scientist for a number of years. But I have no training or experience in investment. Chris has written a text that I would like to work through and understand, with your help.

As we discuss, it might help for you already to have read the book. I have it split into dialogs by chapter: this is ch_1.ipynb and there are also ch_2.ipynb, ch_3.ipynb etc in this directory. There is also ./spoiler.md, a very short digest of the point of each chapter and how they form the arc of the book. You can refer to any of these as you are working with me. There is also ./bib.ipynb, the bibliography, should that become helpful.

Hi Dan! Great to meet you. Your quantitative background in state-space and finite difference modeling will serve you well here — many portfolio concepts have direct analogs in physical systems.

Let me take a quick look at the spoiler and chapter 1 to orient myself.

{

"id": "toolu_01GYJS96Cy5MYb5MtSTL9BLa",

"call": {

"function": "view",

"arguments": {

"path": "./spoiler.md"

}

},

"result": "'# Portfolio Statistics and Optimization - Chapter Summaries\\n\\n## Chapter 1: Introduction\\nChapter 1 introduces the efficient market hypothesis (EMH) and its contradictions (anomalies), then outlines the book\\'s focus on CAPM, Fama-French, and Mean-Variance Optimization as interpretive tools rather than prescriptive models, emphasizing the importance of understanding statistical assumptions and non-stationarity in financial data.\\n\\n## Chapter 2: Distributions and Summary Statistics\\nChapter 2 builds the statistical foundation: CDFs, PDFs, mean, variance, percentiles, skew, and kurtosis for u<TRUNCATED>"

}

{

"id": "toolu_01YVKNfWrG1cX1HTX5HqyN5c",

"call": {

"function": "view",

"arguments": {

"path": "./ch_1.ipynb"

}

},

"result": "'{\\n \"cells\": [\\n {\\n \"cell_type\": \"markdown\",\\n \"id\": \"9045c272\",\\n \"metadata\": {},\\n \"source\": [\\n \"\\\\n\",\\n \"\\\\n\",\\n \"# Portfolio Statistics and Optimization\\\\n\",\\n \"\\\\n\",\\n \"chris bemis\\\\n\",\\n \"\\\\n\",\\n \"August 11, 2023\\\\n\",\\n \"\\\\n\",\\n \"\"\\n ]\\n },\\n {\\n \"cell_type\": \"markdown\",\\n \"id\": \"c21004c0\",\\n \"metadata\": {\\n \"solveit_ai\": true\\n },\\n \"source\": [\\n \"Hi Solveit, I\\'m Dan. I\\'m trained in physical oceanography and experienced in state-space modeling, finite difference modeling, and I\\'ve <TRUNCATED>"

}

Good context. I've read the chapter summary and Chapter 1.

The arc of the book builds from statistical foundations (distributions, covariance) through regression and optimization theory, to Mean-Variance Optimization and coherent risk measures, ending with practical covariance estimation techniques.

Chapter 1 sets the stage: it introduces the Efficient Market Hypothesis, then CAPM (β as exposure to market), Fama-French (adding size and value factors), and MVO — but with a healthy skepticism. Chris emphasizes using these as interpretive tools rather than gospel, given non-stationarity and fat tails in real markets.

With your state-space and modeling background, the covariance estimation issues (Ch 3, 10) and the optimization machinery (Ch 5-7) will likely feel familiar. The finance-specific concepts — β, risk factors, efficient frontier — will be the new vocabulary layered on top.

Where would you like to start? We could:

- Discuss anything from Ch 1 that's unclear

- Dig into the EMH/CAPM intuition

- Move on to Ch 2's statistical foundations

Introduction

Equity anomalies are abundant. This statement is both terribly, and purposely, imprecise. What defines an equity anomaly? In what sense are they anomalous? And just how abundant is abundant?

Eugene Fama in 1970 wrote Efficient Capital Markets [9], a pronouncement that the stock market efficiently disseminates every piece of information available about every stock that is traded into their respective prices. Asserting that no amount of analysis can separate future winners from losers, for, all available information has already been incorporated into the current price.

Broadly speaking, the above is the efficient market hypothesis (EMH). In the EMH world, no amount of fundamental analysis (reading balance sheets, income statements, and cash flow statements) or technical analysis (identifying patterns, real or perceived) will generate excess returns in the long run. Any contradiction to this is an anomaly. Again we are left with an imprecise statement as to what is meant by excess returns.

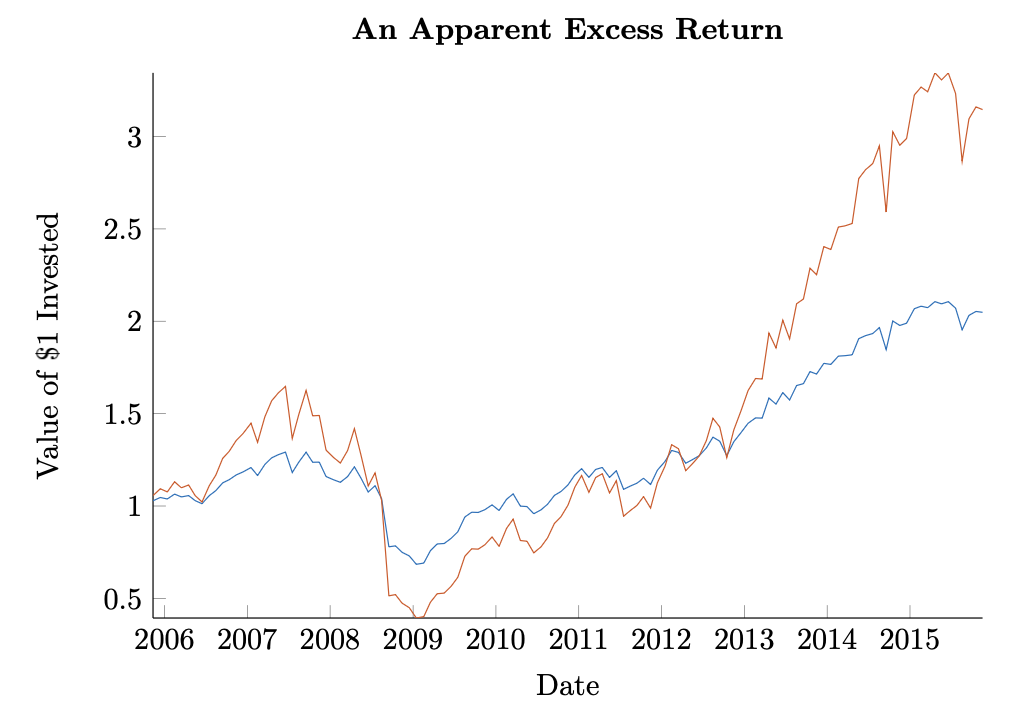

Consider, for example, the chart in Figure 1. Here a one dollar investment is made in each of two securities; the blue line being a market proxy, Standard and Poor's S&P 500 Index. At the end of a ten year period, there is clear 'excess.' But to what end? There is, even without a proper statistical analysis something unappealing in the wild swings and variations in the purported winner. Our sense of caution is not wrong, either, since in this case the winner is simply a leveraged version of the market—returns are \(2\times\) the un-levered version. This surely can't be what is meant by the impossibility of generating excess returns, and in fact it isn't.

However, a somewhat technical treatment is needed. Referring to the figure once more, there is something riskier in the leveraged series. Defining risk and its requisite properties has become a seminal issue over the past few decades. At the outset, volatility was tantamount to risk. And as we will see in subsequent chapters, much of the theoretical machinations supporting the efficient market hypothesis will imply that excess returns are a positive function of risk (read volatility). The example above notwithstanding, this is antithetical to what is observed empirically and what will be shown in subsequent chapters. In fact we will exhibit that the market, rather than compensating for risk in the equity markets, punishes risk-holders across almost every measurement.

Figure 1.1. Value of $1 invested in the S&P 500, including dividends and corporate actions, over a ten year period in blue. The red line shows the value of $1 invested in a related security. Performance is superior, but not without drawbacks.

Figure 1.1. Value of $1 invested in the S&P 500, including dividends and corporate actions, over a ten year period in blue. The red line shows the value of $1 invested in a related security. Performance is superior, but not without drawbacks.

Staying within the confines of the EMH world, then, what should one do if choosing a good stock is proscribed by theoretical fiat? Buy them all, of course! One of the pivotal contributions to the field in fact belies this very statement. At its heart is a treatment of systemic and idiosyncratic risk which culminates in a familiar refrain: you can't beat the market, and diversification is the key to reducing idiosyncratic risk.

Please create in this directory a file for formatting instructions that I can refer to when preparing other dialogs for future chapters. It should include:

- Replace "$" (slash-dollar_sign) with $ (the escape doesn't work, maybe because of KATEX interference)

- Local figure paths like fig/1.1.png should be replaced with https://raw.githubusercontent.com/dangoldner/bemis/main/fig/1.1.png (the local path isn't accessible for the published version of the dialog)

create(path=./formatting_instructions.md, file_text=# Formatting Instructions for Chapter Dialogs

Whe…)

{

"id": "toolu_01Q136uzRu4oF4LLUtepZBwi",

"call": {

"function": "create",

"arguments": {

"path": "./formatting_instructions.md",

"file_text": "# Formatting Instructions for Chapter Dialogs\n\nWhen preparing dialogs for future chapters, apply these transformations:\n\n1. **Dollar signs in LaTeX**: Replace `\\$` (backslash-dollar) with `$` \u2014 the escape doesn't render correctly, possibly due to KaTeX interference.\n\n2. **Figure paths**: Replace local paths like `fig/1.1.png` with the GitHub raw URL:\n ```\n https://raw.githubusercontent.com/dangoldner/bemis/main/fig/1.1.png\n ```\n The local path isn't accessible in the published version of the dialog."

}

},

"result": "'Created file formatting_instructions.md.'"

}

Done — created formatting_instructions.md in this directory with both rules.

Good question. Chris is foreshadowing here — he'll define these properly later. But the intuition is simple:

- Systemic (or systematic) risk: risk that affects the whole market (recessions, interest rate changes, pandemics). You can't diversify it away.

- Idiosyncratic risk: risk specific to one company (bad CEO, factory fire, lawsuit). Diversification does eliminate this.

The punchline he's hinting at: if you hold many stocks, the idiosyncratic risks cancel out (some companies do badly, others do well), leaving only the systemic/market risk. That's why "buy them all" is the EMH-recommended strategy.

Does that help clarify the passage, or would you like to dig deeper into either type?

Well then what's meant by "One of the pivotal contributions to the field belies this very statement"? That the pivotal contribution basically rejects EMH?

Ah, I think Chris may have used "belies" loosely here. Strictly, "belies" means "contradicts" or "gives a false impression of" — which would be confusing in context.

Reading the rest of the sentence, he seems to mean the opposite: that the pivotal contribution (likely Markowitz's Modern Portfolio Theory, 1952) actually supports the "buy them all" logic by formalizing how diversification eliminates idiosyncratic risk. He likely meant something like "underlies" or "embodies." The contribution doesn't reject EMH; it provides the mathematical foundation for why diversification works within the EMH framework.

On the one hand, then, we have a yet-to-be defined model for determining anomalous returns, and on the other, an again yet-to-be defined model to reduce risk across a set of securities.

We will often attempt to gain a deeper understanding of the central themes of modern finance through the lens of the so-called value anomaly. We do this for two reasons: first, we choose to take most market standard models as providing a taxonomy rather than as prescriptive of actual market behavior; and second, while much of our work centers on using and utilizing statistical features of past price performance, we lean towards a belief that these do not encapsulate all of the information in the market. To quote Andy Redleaf, the founder of Whitebox Advisors, in his book, Panic [26]:

A market in which traders can predict future price moves solely on the basis of past price moves is pathetic. Traders can only do this because public financial markets are price-obsessed and price-paranoid. Financial markets are awash in price information (how many times a second does some price on some board somewhere change?) and relatively devoid of knowledge about value and cost to compare it to. Traders are desperate to know what everyone else is paying precisely because there is so little information about what anyone should be paying.

The Focus of This Work: Statistics and Optimization

We arrive next at the focus of the present work: statistics and optimization, with an emphasis on interpretation and allocation. The formulation of the efficient market hypothesis in this book will be a statistical one, with some modification of the standard literature. While we will focus primarily on Merton and Sharpe's Capital Asset Pricing Model [22, 31] and the subsequent Fama-French three factor model [10], we will often eschew their language of risk. Instead, we will use these two models much as the Black-Scholes' option formula is in practice: for an interpretive tool to understand asset allocation and for uniform comparisons.

The Capital Asset Pricing Model (CAPM)

The Capital Asset Pricing Model (or simply CAPM) is, in essence, simply a relationship between a given stock's returns and the market's returns (modulo something called the risk-free rate). Formally it says

\[r_t - r_f = \beta(m_t - r_f) + \epsilon_t,\]

or that the excess return for a given asset at time \(t\), \(r_t\), over the risk free rate, \(r_f\), is a constant multiple \(\beta\) times the excess return of the market, \(m_t\), over the risk free rate, with some allowance for random variation, \(\epsilon_t\). There are several theoretical consequences to the model, many of which will be discussed later. Presently, though, we obtain that the model gives a single variable by which to compare assets.

This confuses me a little because I'd always heard \(\beta\) describes as market returns, contrasted with \(\alpha\), which I understood to be the delta between the asset's return and the market's (\(\alpha = r_t-r_m\)). Did I just have it wrong or is there a reason I might have thought that?

You're close but not quite. Let me write the fuller form of CAPM:

\[r_t - r_f = \alpha + \beta(m_t - r_f) + \epsilon_t\]

-

β is the sensitivity to market returns — how much the asset moves per unit of market move. β=1 means it tracks the market; β=2 means it amplifies (like the leveraged example in Figure 1.1).

-

α is the intercept — the return beyond what β would predict. It's not simply \(r_t - m_t\), but rather the residual after accounting for market exposure.

So if β=1.5 and the market returns 10%, CAPM predicts 15%. If the asset actually returns 18%, that extra 3% is α.

Chris's equation above has α=0 implicitly — which is exactly what EMH claims: no stock should have persistent α, because any "edge" gets priced away. Active managers are trying to find α; the EMH says they can't.

Does that reconcile with what you'd heard?

Returning to our first example of a leveraged market portfolio outperforming the market portfolio, we may, without calculations conclude that the former had a \(\beta\) of 2, while the latter had a \(\beta\) equal to 1, capturing quite nicely the intuition used to construct the example. The effects of leverage, correlation and risk-as-volatility are summarily presented in one metric, allowing uniform comparisons and a common language across any number of securities.

Caveats and Non-Stationarity

Caveats abound in the model above, however. Notably that equity returns are not stationary; i.e., the statistical properties needed to obtain a quantity like \(\beta\) above are not the same over various time slices. Calculating a stock's \(\beta\) from 1990 to 2000 will be different than from 2000 to 2010. Or worse, six months prior to, say, the financial crisis of 2008 will differ from six months after. Worse yet, even as we will develop the framework for understanding the statistical distribution of a variable like \(\beta\) (noting that in fact most financial data should be taken as ranges and not point estimates), the movements of the market can violate the assumptions for well-behaved estimators. Put another way, even if we make an expected range for \(\beta\), the market's moves could go outside this range in a heartbeat.

This makes me wonder if there's any point to calculating \(\beta\), even to compare two assets on the same timeframe. How informative is it? Or put another way, am I generally able to get any \(\beta\) I want for any given asset just by picking the right time frame?

Sharp question. The answer is: β isn't arbitrary, but it's noisy.

You can't get any β you want — a utility company won't show β=3 over any reasonable window, and a volatile tech stock won't show β=0.2. There's real structure there. But yes, you can shift β meaningfully by choosing windows that include or exclude volatile periods.

This is exactly why Chris emphasizes using CAPM as an interpretive tool rather than a predictive one. Comparing two assets over the same timeframe is still informative — it tells you their relative market sensitivity during that period. What's dubious is using a historical β to predict future behavior, or treating it as a stable property of the asset.

Think of it like a correlation coefficient in oceanography: meaningful for describing a relationship in a given dataset, but you wouldn't assume it holds in a different regime.

As David Viniar, the CFO at Goldman Sachs during the Quant Crisis of August 2007 said, "[We saw] things that were 25-standard deviation moves, several days in a row." [15] We aren't yet to the point where we can evaluate the sheer magnitude of this statement of improbability, but suffice it to say, it'll defy comprehension on a universal scale.

The empirical facts of the last thirty years drive our emphasis of interpretive abilities rather than on the normative modeling that has been a feature of mathematical finance since its inception. To quote Karl Popper:

No amount of observations of white swans can allow the conclusion that all swans are white, but the observation of a single black swan is sufficient to refute the conclusion.

There have been a few black swans of note, to the point that they deserve primacy in our applications of financial models. The trend within the field seems to be to accommodate new findings in ever expanding complexity, however.

So the claim is that instead of explicitly acknowledging wide uncertainty, the field has chosen to "explain" outliers with increasing detail -- overfitting, essentially

Exactly right. Rather than saying "our models have fundamental limits and markets exhibit irreducible uncertainty," the field keeps adding epicycles — more factors, more complex distributions, regime-switching models — to explain away each new anomaly.

Chris is pushing back: use simple models (CAPM, Fama-French) for comparison and interpretation, but don't mistake the map for the territory. The 25-sigma events aren't bugs in the model to be patched; they're evidence that the model's assumptions (stationarity, thin tails) are wrong at a foundational level.

The Fama-French Extension

An example—but one that is meritus—is the Fama-French extension to CAPM [10]. Here, finding that small companies outperform larger companies, and that higher value stocks outperform lower, they expand CAPM to

\[r_t - r_f = \beta_m(m_t - r_f) + \beta_h h_t + \beta_v v_t + \epsilon_t,\]

with \(h_t\) being the returns to a long-short portfolio that is long small companies and short large, and \(v_t\) similarly constructed from high book-to-price companies versus low book-to-price. Not surprisingly, the anomalies (relative to CAPM) that Fama and French noted are subsumed in their model, and ipso facto, size and value are no longer anomalies.

Ok this might be my layman's misunderstanding again but retail investment marketing is always on about growth vs value stocks. Is this just that -- "growth" = "small" and "value" = high book-to-price? And while I'm asking, what's book to price - net assets to market cap?

Two separate dimensions, actually:

- Size factor (h): small cap vs large cap (by market capitalization)

- Value factor (v): high book-to-price ("value") vs low book-to-price ("growth")

So the retail "growth vs value" maps to the value factor only. "Growth" stocks have low book-to-price — the market prices them high relative to their accounting value, betting on future earnings. "Value" stocks are the opposite: priced cheaply relative to book value.

And yes, you've got book-to-price right: book value (assets minus liabilities, i.e., shareholder equity on the balance sheet) divided by market cap.

An implication in the standard literature is that these new factors are risk factors. Our treatment will not subscribe to this view as there is little empirical evidence to justify the term. Instead, we note the power of understanding an asset or a weighted portfolio of assets in terms of highly meaningful and understandable exposures within the market. We will also see that models like Fama-French and CAPM will lend themselves readily to portfolio allocation models where understanding the co-movements of assets is rife with estimation error.

Not avoid entirely, but dramatically reduce what you need to estimate.

Direct approach: for N assets, you need N(N-1)/2 pairwise correlations. With 500 stocks, that's ~125,000 correlations — each estimated noisily.

Factor model approach: estimate each asset's β to a few factors (1 for CAPM, 3 for Fama-French). The correlations between assets are then implied by their shared factor exposures. Two high-β stocks will be correlated because they both move with the market — you don't estimate their correlation directly.

So you go from O(N²) parameters to O(N×k), where k is small. Much less room for estimation error to creep in.

Mean-Variance Optimization and Modern Portfolio Theory

Once we have gained an understanding of several statistical methods through the lens of mathematical finance, we will be in a position to study portfolio optimization. After developing some of the main tools for unconstrained and constrained optimization, we will find a direct connection between CAPM and Modern Portfolio Theory: Merton's 1952 Mean-Variance Optimization (MVO) [22],

\[\begin{aligned}\min_w & \frac{1}{2} w' \Sigma w \\ & \mu' w = \mu^* \\ & 1' w = 1.\end{aligned}\]

where \(w\) is a vector of portfolio weights, \(\Sigma\) is the covariance of returns for the assets in question, \(\mu\) is their expected return, and \(\mu^*\) is some required return.

The third line just says the weights add to 1 (conservation of money: you can't invest what you don't have). The second line is the salient constraint, you have to have your weighted return above the "requirement". The first line is looking for the weights that maximally de-correlate the portfolio (and so reduce "risk", to be defined?) subject to the constraint? Don't be afraid to tell me if my reading is off, it's been a while since I did much math.

Your reading is close — two small refinements:

-

The return constraint (\(\mu'w = \mu^*\)) is an equality, not a lower bound. You're asking: "give me exactly this return." You then solve the problem repeatedly for different values of \(\mu^*\) to trace out the efficient frontier — the curve of minimum-variance portfolios at each return level.

-

The objective \(w'\Sigma w\) is portfolio variance — it captures both individual asset variances and their covariances. Minimizing it doesn't decorrelate per se; it finds the combination of weights that yields the lowest total variance. That might mean tilting toward low-variance assets, or exploiting negative correlations, or both.

So the intuition: "For a given return target, find the least-volatile way to get there."

No parameters are given from on high, however, so that while \(\Sigma\) and \(\mu\) are neatly expressed above, obtaining reasonable estimates that are stable through a future time horizon is no easy task. Rather, for most models presented, the necessary stationarity requirements are assumed, in contrast to what is observed in the markets.

A proper treatment of the allocation problem in modern portfolio theory therefore necessitates a statistical treatment as well – as has already been alluded to vis a vis the previous model discussions. Here, understanding the structure of the covariance of returns becomes paramount, and we will visit and revisit this topic throughout the text.

With a solid underpinning of statistics and Modern Portfolio Theory in place, we will also consider more recent developments in portfolio optimization; namely, coherent measures of risk, and, as will be the tone of the text, generally, develop tools for implementing several such measures in practice.

Our approach in every topic will be to arduously highlight axioms before obtaining results, with the belief that implemented models require the practitioner to understand exactly which of the underlying assumptions are wrong (but perhaps useful), and which are dangerous, and we strive to develop the tools to do just this in the pages that follow.

Onward. Please write a short note about me and what I'm doing here and about the book structure and resources like spoiler.md that I can paste at the top of a new dialog to set context for reading the next chapter.

Here's a context note for your next chapter:

# Context

I'm Dan — trained in physical oceanography, experienced in state-space modeling, finite difference methods, and data science, but new to investment theory. I'm working through Chris Bemis's *Portfolio Statistics and Optimization* (August 2023) to build understanding.

**Book structure**: Chapters are split into separate dialogs (ch_1.ipynb, ch_2.ipynb, etc.). Also available:

- `./spoiler.md` — short digest of each chapter's point and how they form the book's arc

- `./bib.ipynb` — bibliography

**Arc**: The book builds from statistical foundations (distributions, covariance) through regression and optimization theory to Mean-Variance Optimization and coherent risk measures. Chris emphasizes using CAPM and Fama-French as *interpretive* tools rather than prescriptive models, given non-stationarity and fat tails in real markets.

**Ch 1 covered**: EMH, systemic vs idiosyncratic risk, CAPM (β as market sensitivity, α as excess return), Fama-French (adding size and value factors), and MVO setup. Key theme: models are useful for interpretation and comparison, but their stationarity assumptions are violated in practice.